Synthetic Data in Healthcare:

A Focus on EEG Signals

Addressing class imbalance in seizure detection by synthesizing realistic ictal EEG with generative models - GAN, VAE, and Diffusion - trained on the CHB-MIT Scalp EEG Database.

The Problem

Epilepsy affects over 50 million people worldwide. Automated seizure detection from scalp EEG could enable real-time monitoring, but two obstacles make it unreliable: clinical data scarcity (patient recordings are expensive, require expert annotation, and are restricted by privacy and regulatory constraints) and extreme class imbalance (seizure activity accounts for less than 0.4% of recording time). EEG signals are further complicated by strong inter-subject variability, non-stationarity, and noise - making cross-patient generalization particularly difficult.

This thesis investigates whether synthetic data augmentation using generative models (TimeGAN, CVAE, LDM) can address these problems - but augmentation is not guaranteed to help. It could reduce subject-dependent overfitting by increasing training variability, or it could amplify subject-specific patterns if the generator reproduces them. Under a strict LOPO protocol, this work tests which outcome prevails and seeks to distinguish genuine gains from misleading improvements.

Methodology

Research questions, evaluation strategy, and literature gaps

Dataset

CHB-MIT Scalp EEG: patient profiles, recordings, and seizure distribution

Data Pipeline

Cleaning, filtering, windowing, caching, and parameter justifications

Generative Models

TimeGAN, CVAE, LDM: architectures, design decisions, and trade-offs

Roadmap

7 experiments (E1–E7), timeline, and protocol rules

Status

Implementation progress and what's left

Results

LOPO and single-split experiment results (E1-E5)

Glossary

Key terms and acronyms used throughout the thesis

References

All cited works, organized by source type

Methodology

Research framework, questions, and evaluation strategy

Goals

Research Questions

CRISP-DM Framework

This thesis follows the CRISP-DM methodology, chosen for its structured, iterative approach to data-driven research. Unlike purely linear workflows, CRISP-DM explicitly supports feedback loops between phases - for example, evaluation results may reveal the need for different preprocessing, looping back to Data Preparation. The six phases map to thesis chapters as follows:

*Deployment is adapted for academic context: rather than production deployment, Chapter 6 covers conclusions, practical recommendations (RQ5), and directions for future clinical integration.

Evaluation Strategy

All experiments use LOPO cross-validation (23 folds, one patient held out completely per fold) with a frozen 1D-CNN detector. Synthetic data enters training only - never validation or test. The model never sees any test patient data during training, normalisation, or generation.

Systematic Literature Review (26 Articles) RQ1

Conducted following PRISMA guidelines across IEEE Xplore, PubMed, Scopus, and Web of Science. Inclusion criteria: synthetic data generation or augmentation for healthcare time-series, published 2020–2025.

What's Being Evaluated: The Generators, Not the Classifier

The detector is a measuring instrument - it's frozen specifically so it can't be the source of any performance difference. The actual object of study is the synthetic data itself. Four complementary goals (thesis Section 4.4), structured so the data is evaluated directly before it ever touches the downstream classifier:

Fidelity and discriminability evaluate the synthetic data with no dependence on the downstream task. Utility uses the frozen detector as an indirect measurement of data quality. Subject-identity analysis checks for memorisation and privacy risks - whether synthetic data makes patients more identifiable rather than less. Together they answer "is this synthetic data any good?" from multiple angles - the classifier result alone would be insufficient.

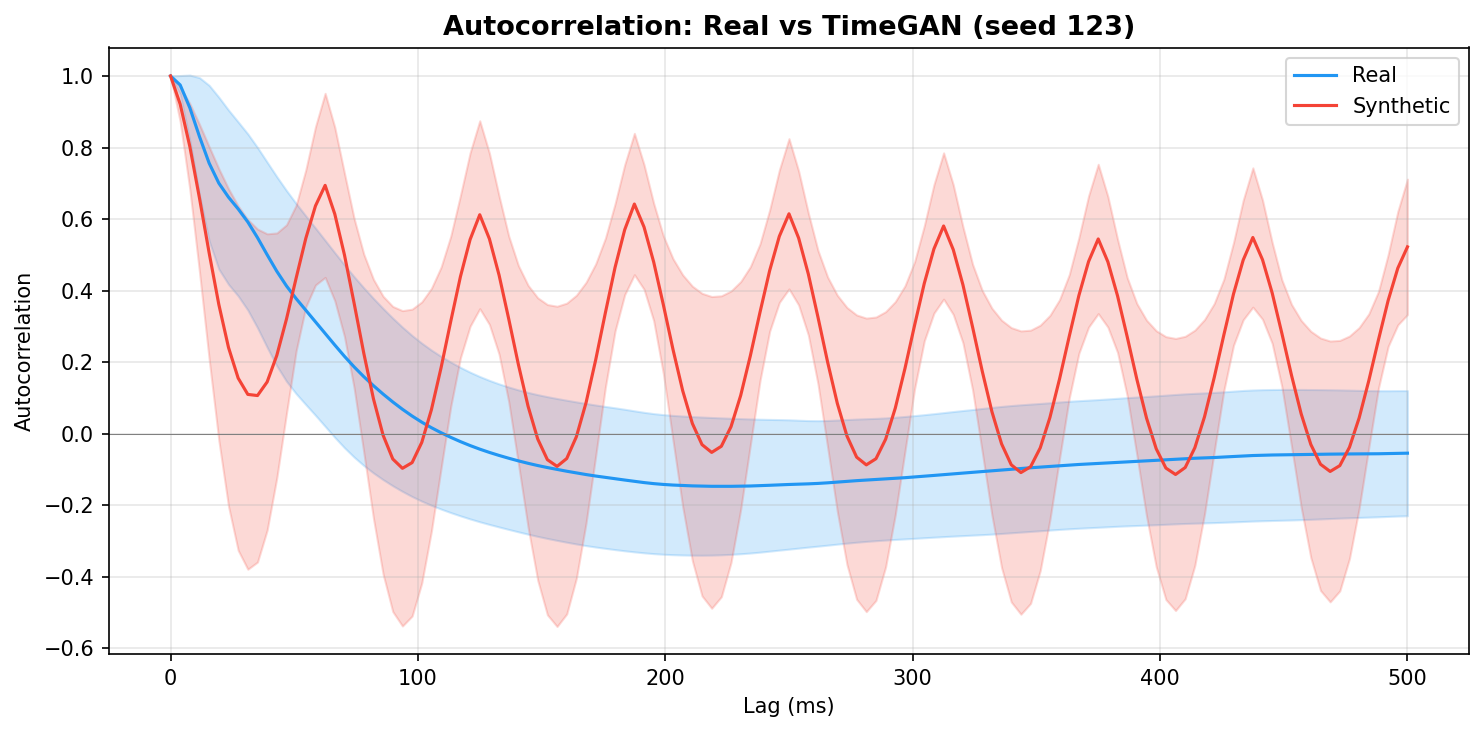

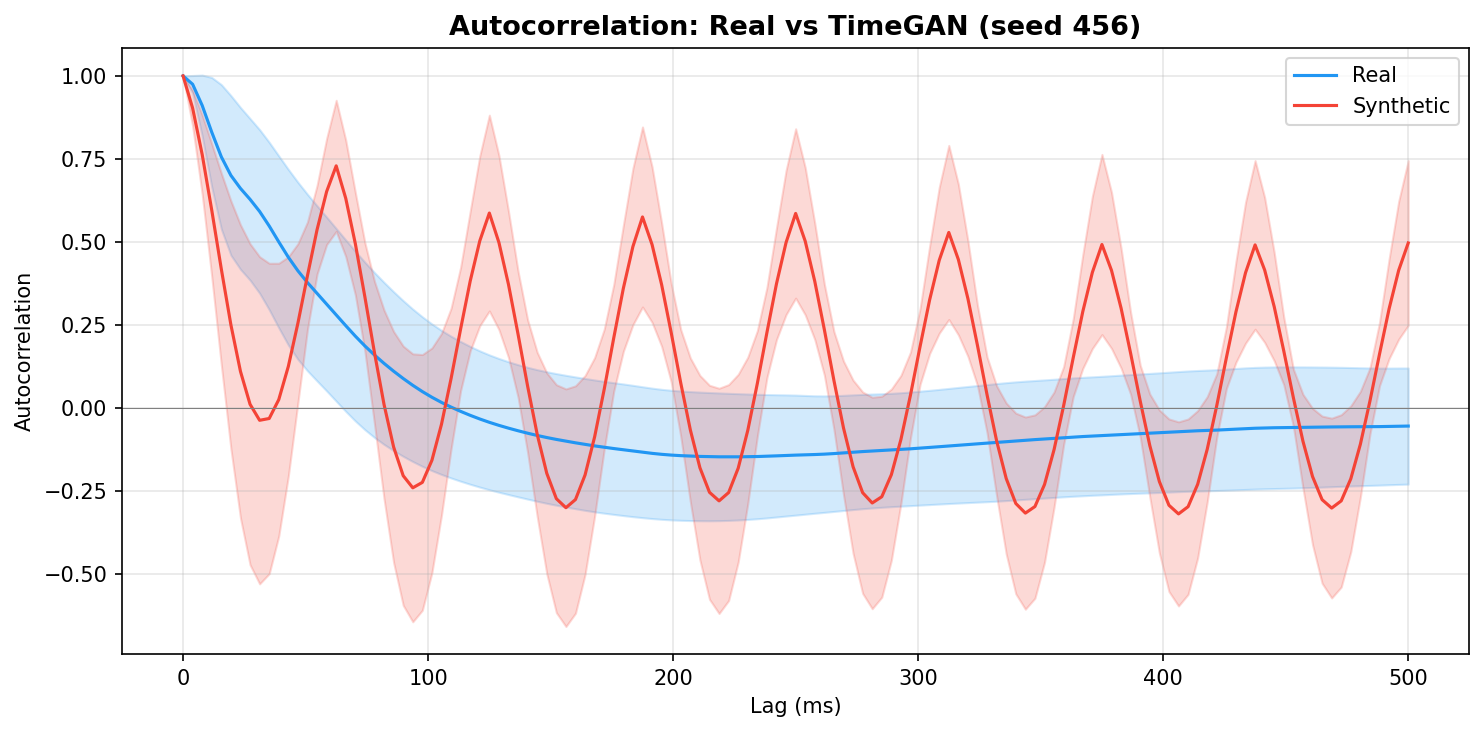

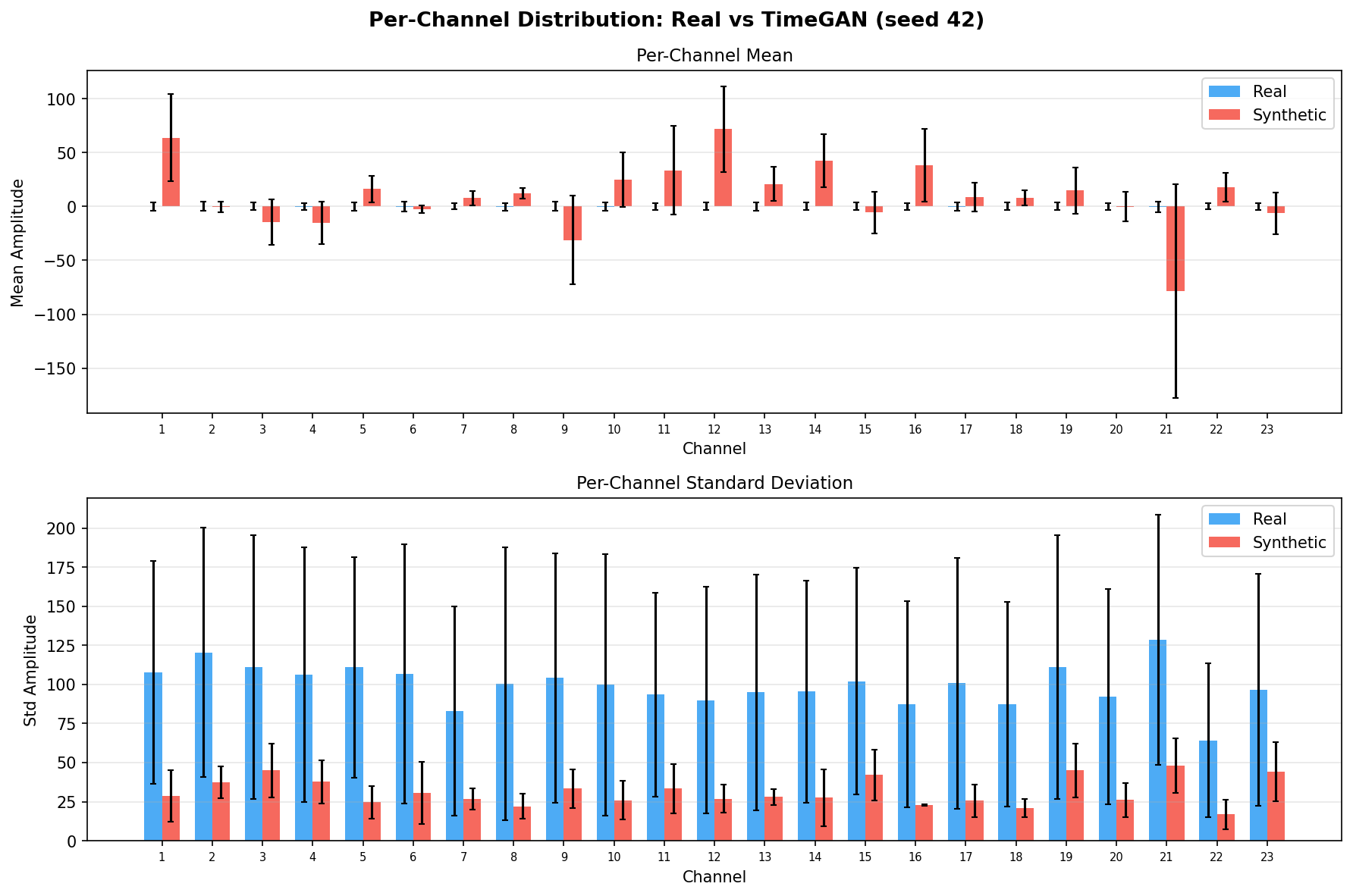

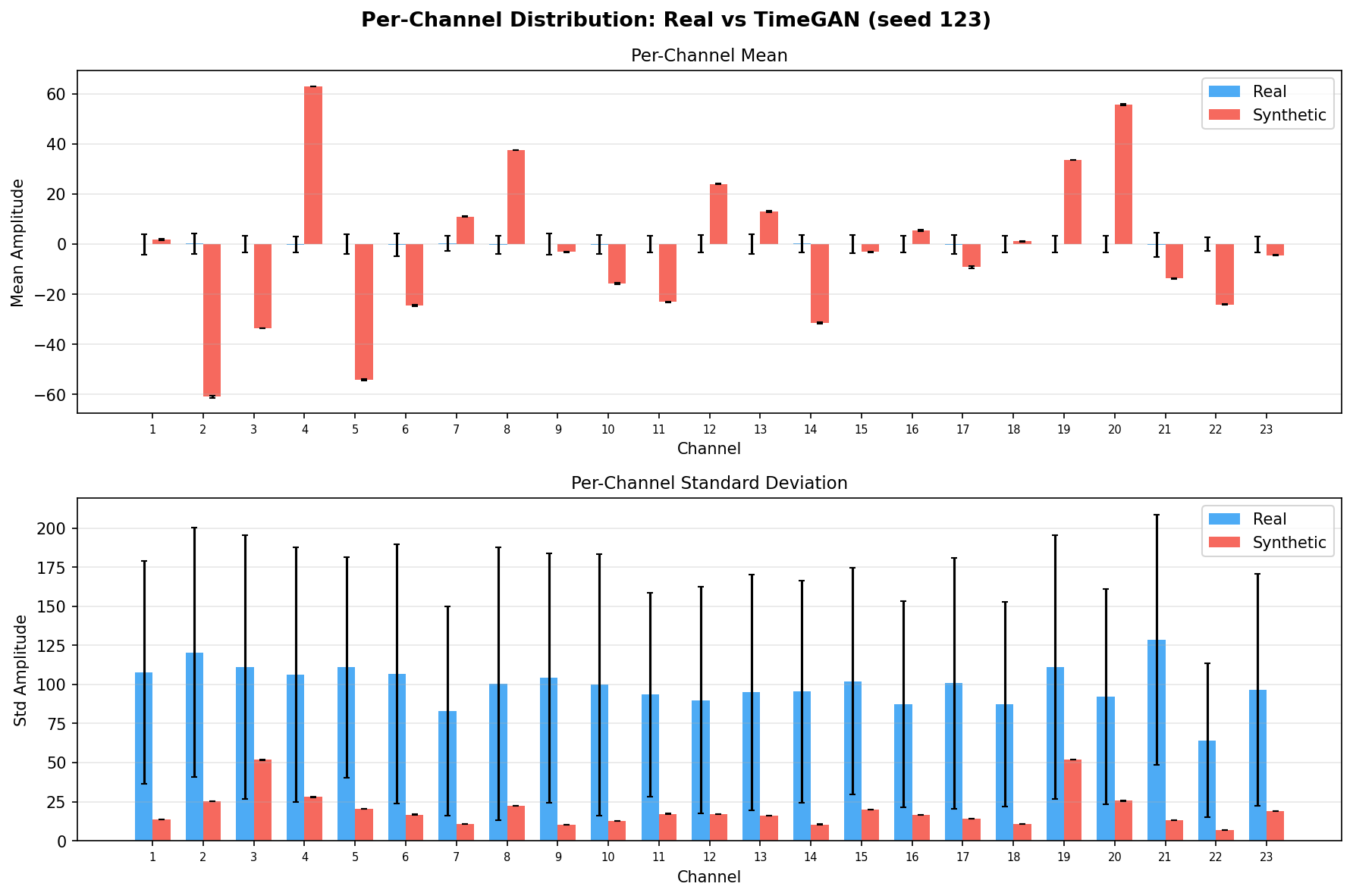

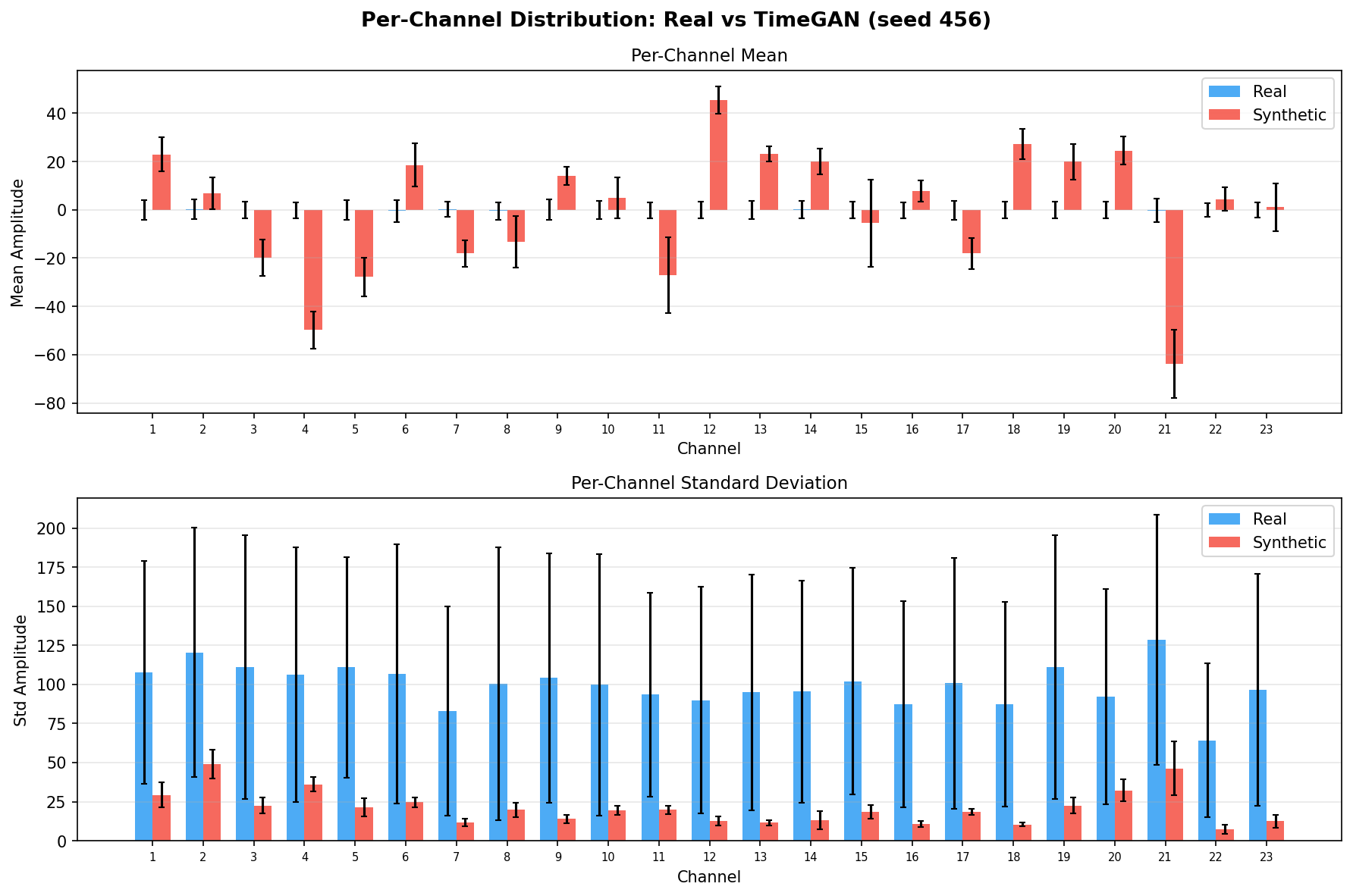

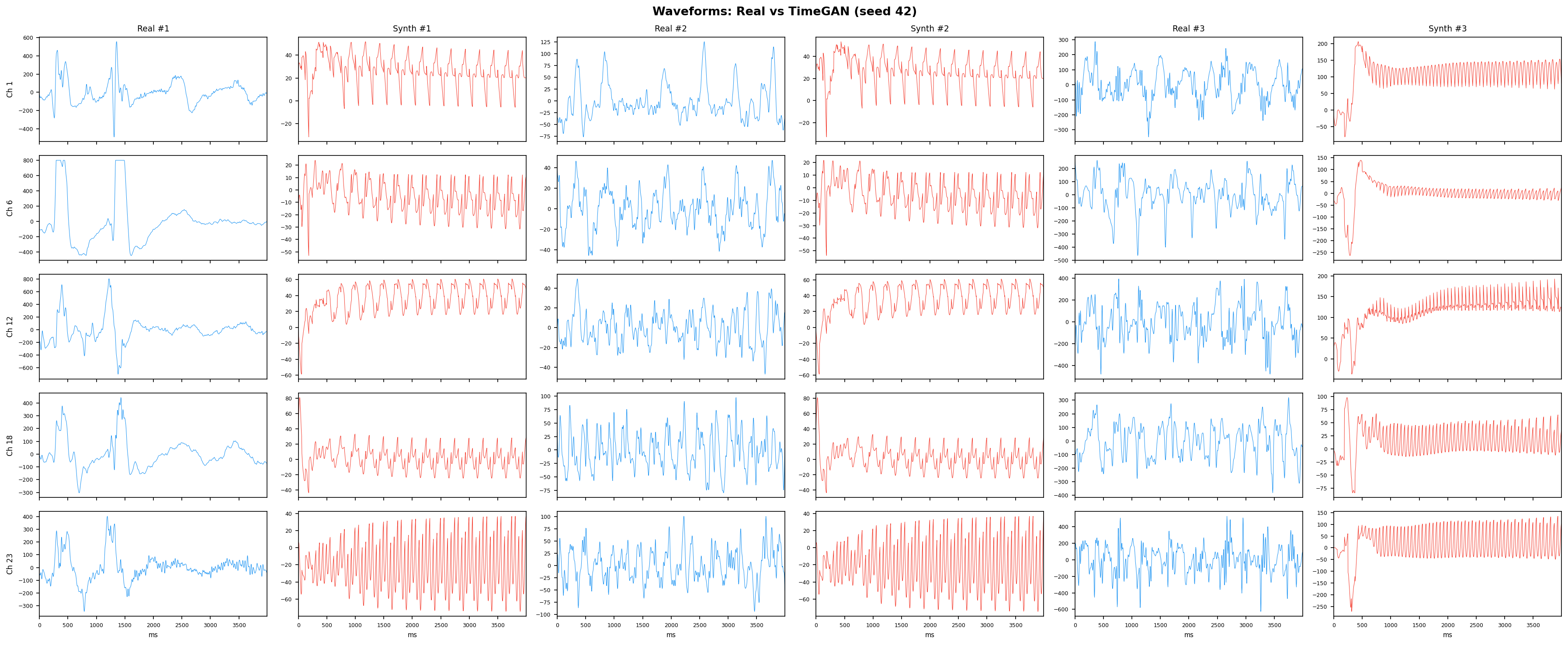

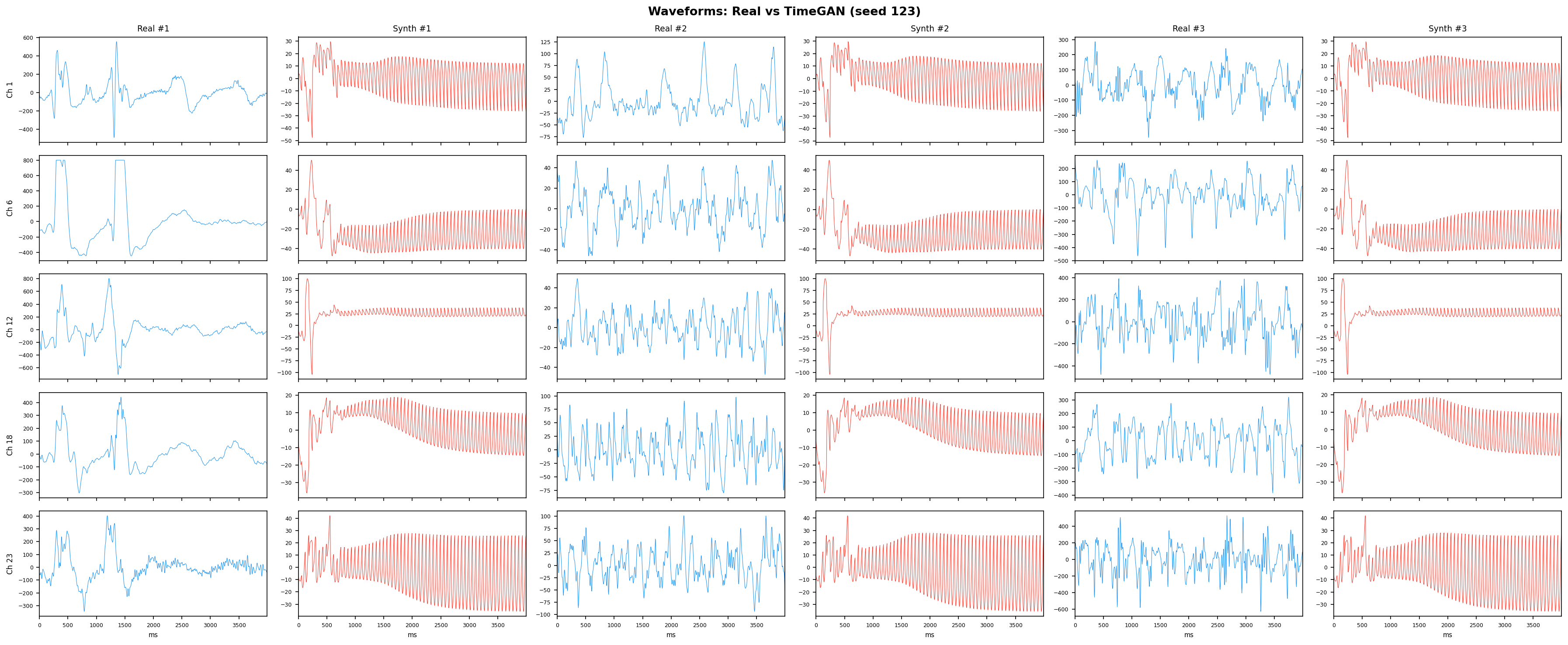

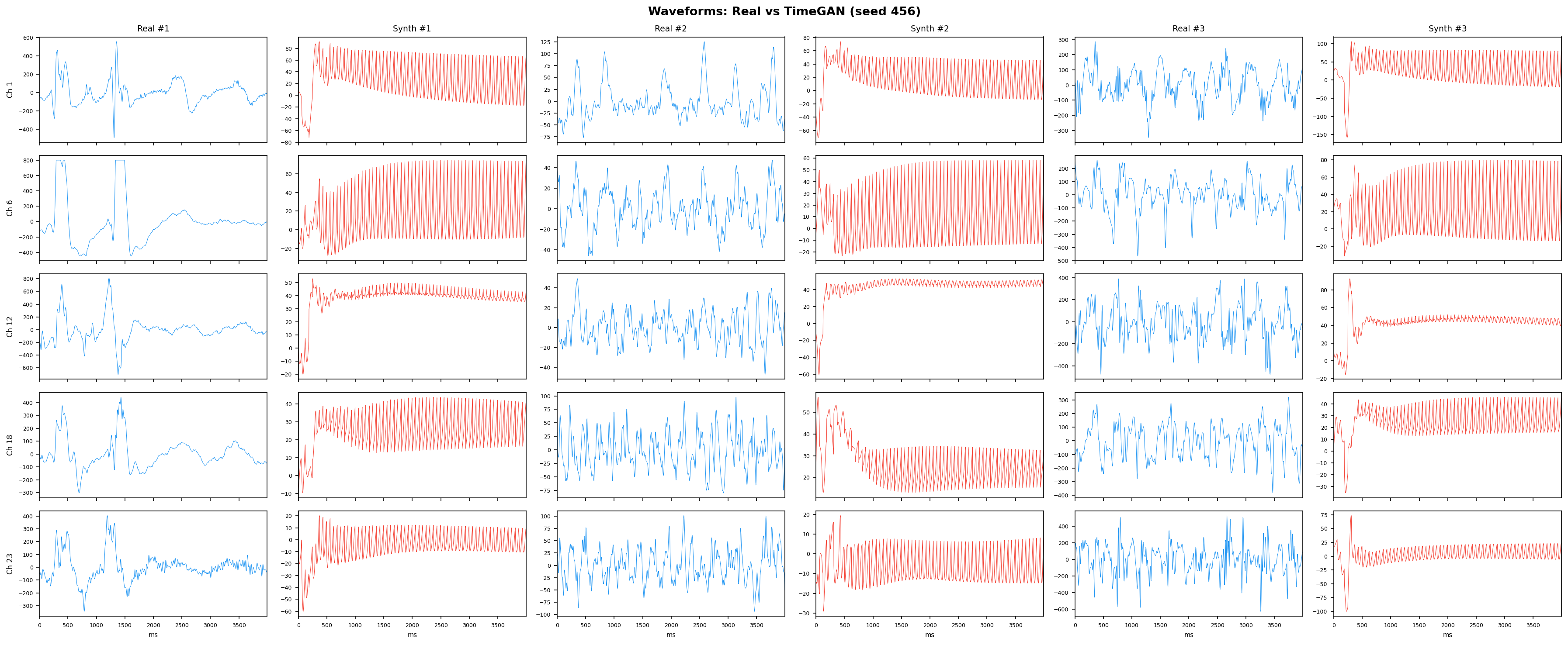

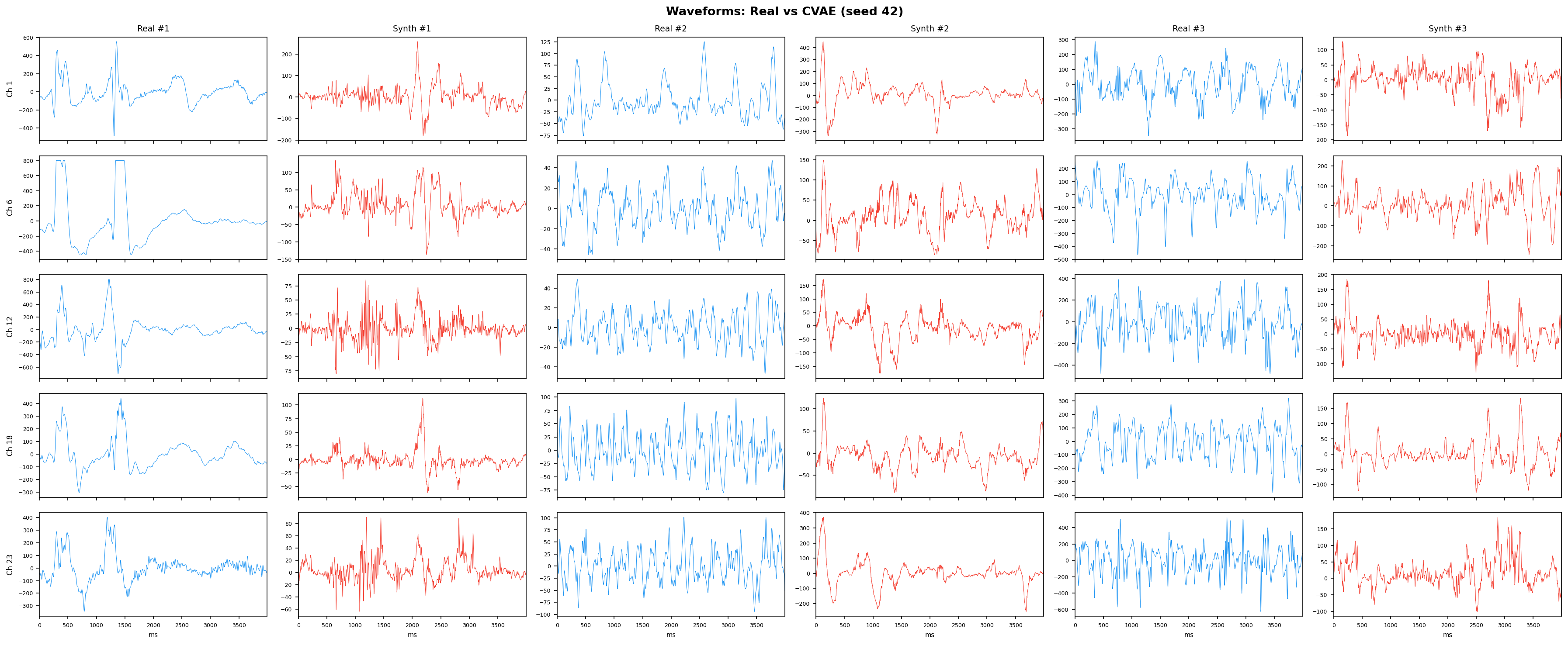

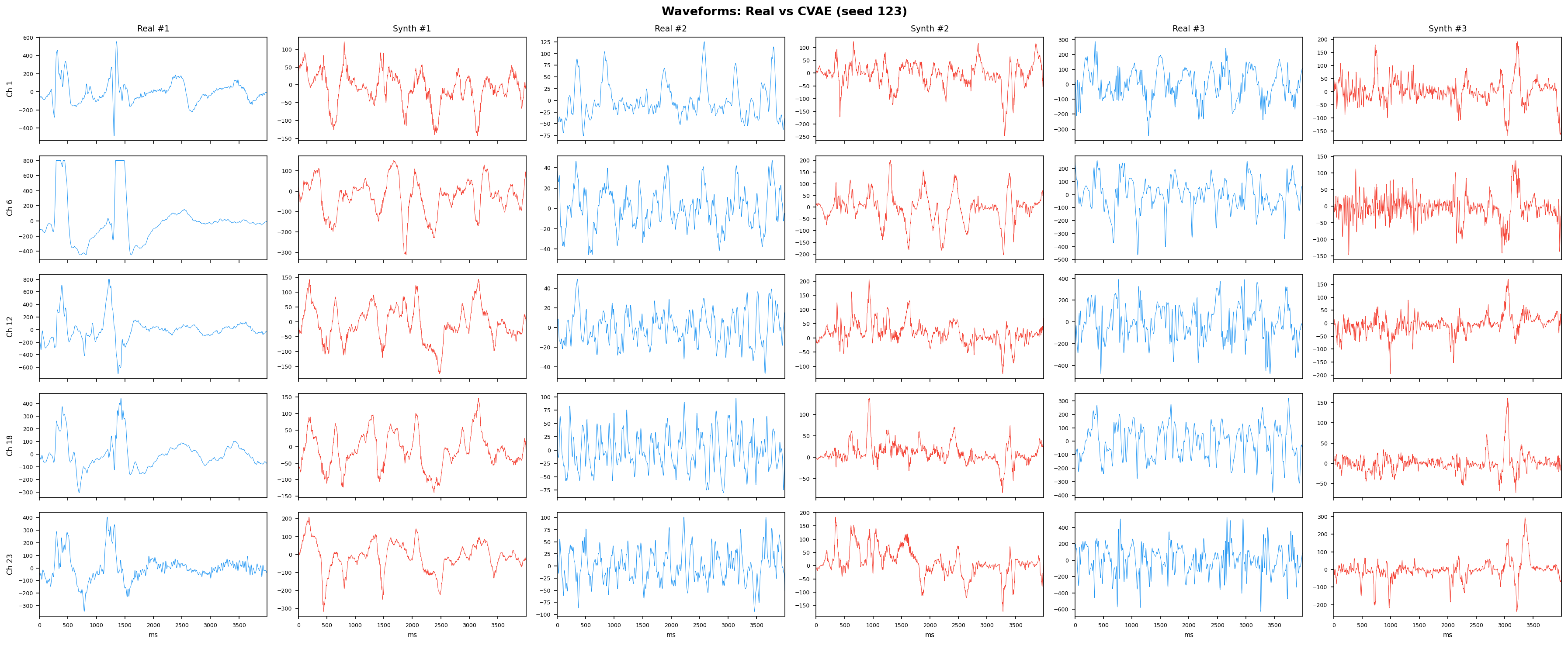

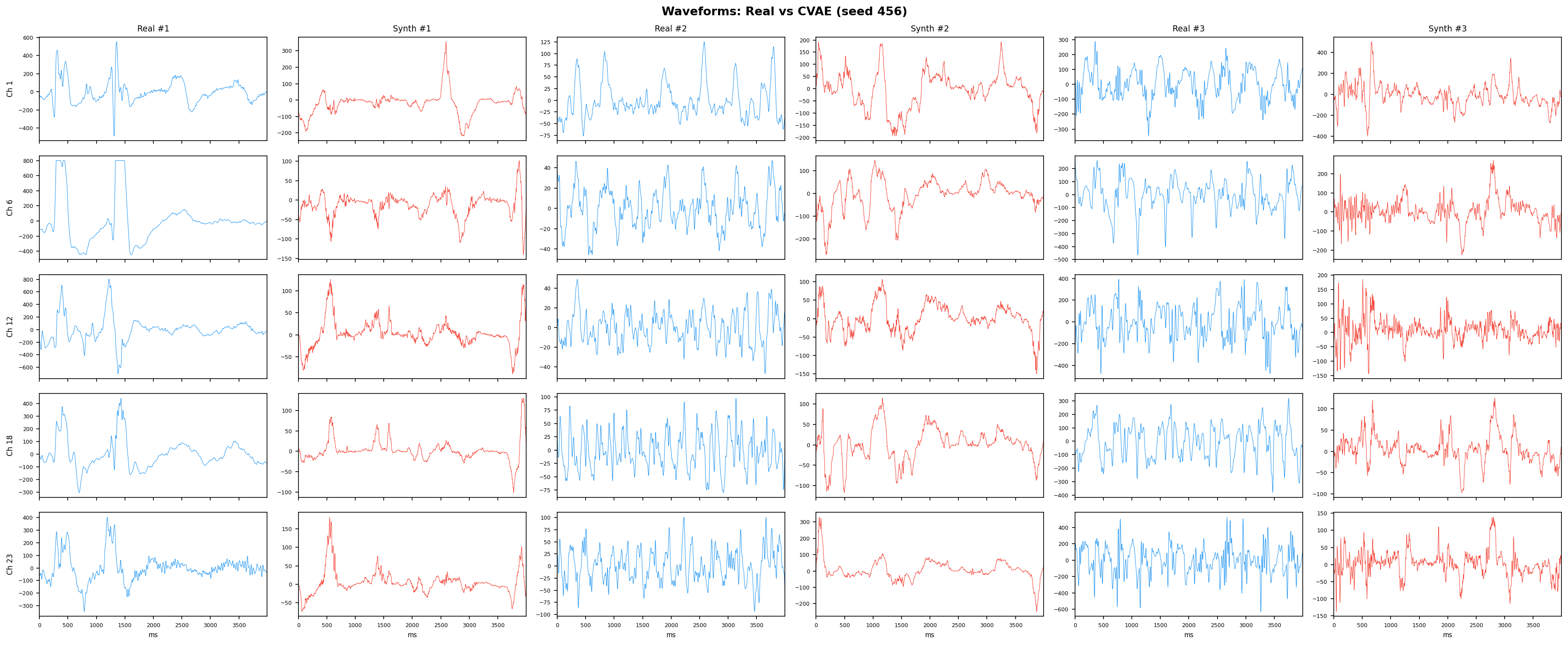

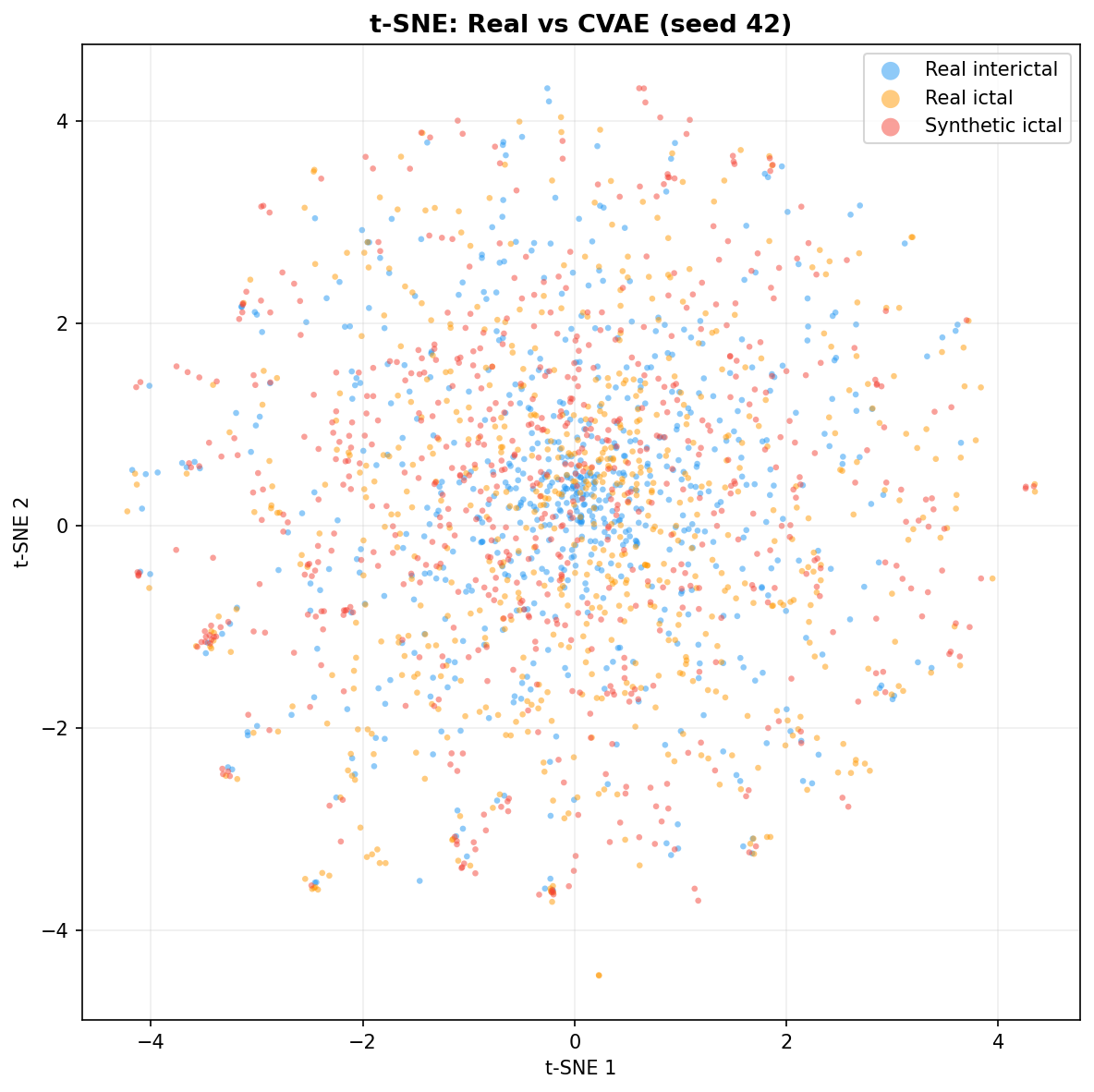

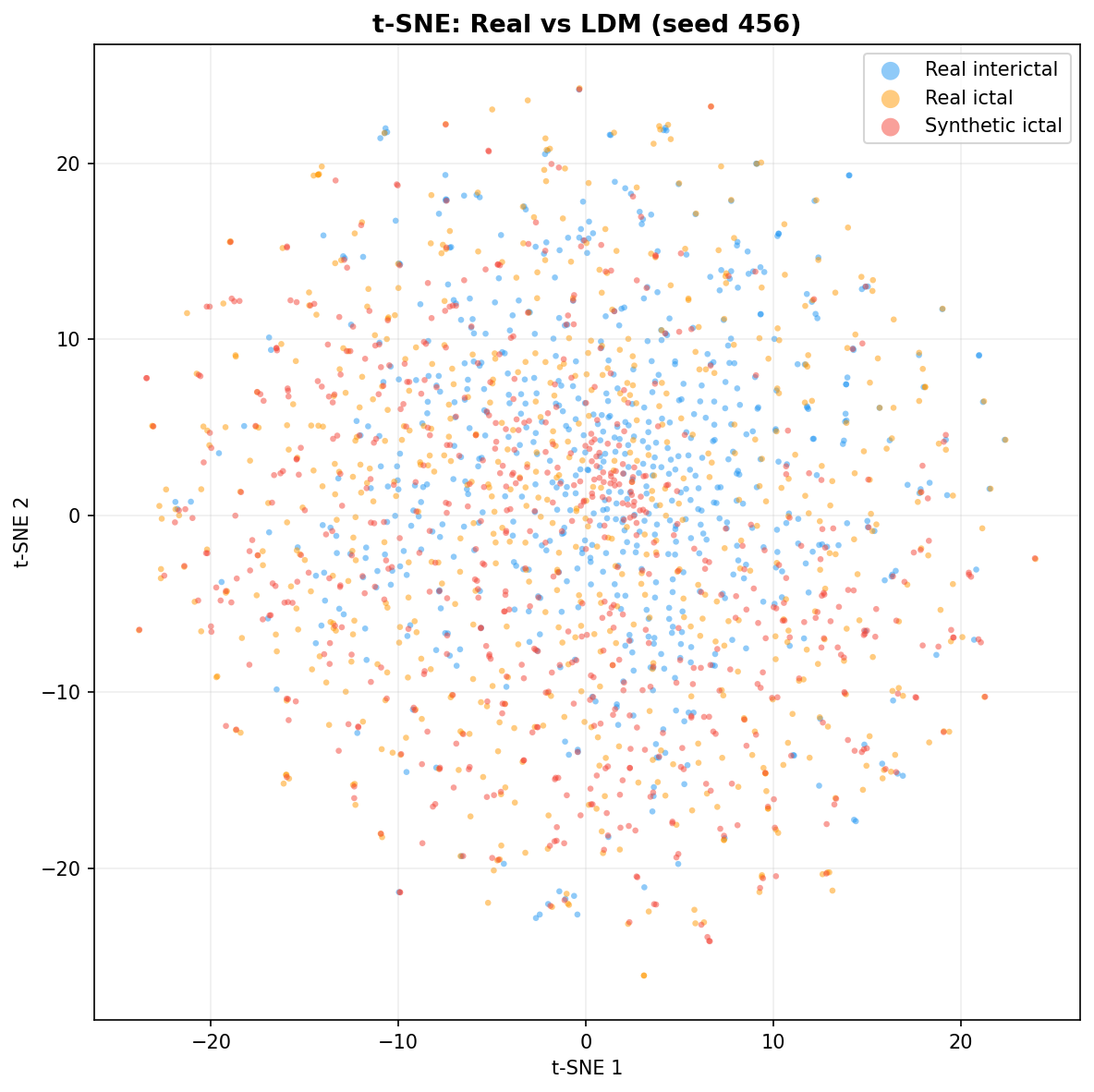

Single-split fidelity results (all generators, 3 seeds) are available on the Results page. Full LOPO fidelity, utility, and subject-identity results follow once E2-E5 LOPO completes.

Complete Metrics Reference

Every metric in the thesis evaluation framework, grouped by what it evaluates. The "Why?" column traces each choice to its source - a literature gap, an SLR finding, or a methodological requirement.

Computed per experiment Computed post-hoc from LOPO results Computed in E7 (after E3-E5 LOPO)

Utility - Does synthetic data improve seizure detection?

| Metric | What it answers | Why this metric? |

|---|---|---|

| AUPRC (primary) | Overall detection quality across all thresholds, sensitive to rare-class performance | A model predicting "no seizure" always gets ~99.6% accuracy and decent AUROC, but terrible AUPRC. Precision-recall curves are more informative than ROC curves for imbalanced problems (Saito & Rehmsmeier, 2015). Already used in seizure detection: Yuan et al., 2019; Constantino et al., 2021; Manzouri et al., 2022; Yamada et al., 2025; Park et al., 2026. |

| AUROC | Overall discrimination (threshold-independent), regardless of class balance | Enables comparison with prior seizure detection literature. Standard metric in Bing et al., 2022 (TSTR evaluation uses AUROC) and commonly reported in clinical EEG studies. Not primary because it's insensitive to class imbalance (Saito & Rehmsmeier, 2015). |

| F1 (optimal threshold) | Best achievable precision-recall balance. Sweeps all thresholds and reports the maximum F1, not F1 at the default 0.5 cutoff | Ghanem et al., 2023: "F1 is particularly useful with imbalanced classes" (harmonic mean penalises both false positives and false negatives). They also recommend threshold optimization for imbalanced classification: "selecting the threshold with the best performance" rather than defaulting to 0.5. With <1% seizure prevalence, the model may output well-calibrated but low probabilities for real seizures - fixed 0.5 would misclassify them all. We report both the max F1 and the threshold that achieves it. F1 is a standard downstream metric for EEG generation (You et al., 2025, Table 2; Zhao et al., 2022). |

| Sensitivity @ 95% Specificity | How many seizures are caught when false alarm rate is clinically acceptable (5%) | Chua et al., 2022 report sensitivity "at >95% specificity" on CHB-MIT as their primary operating-point metric for seizure detection. Baumgartner & Koren, 2018: "Low false-positive alarm rates are of critical importance for acceptance of algorithms in a clinical setting." At 95% specificity, the system fires at most 1 false alarm per 20 interictal windows - a widely adopted clinical threshold in medical binary classification. |

| Per-patient AUPRC (mean/std) | Whether utility is consistent across patients or driven by a few easy cases | Global AUPRC can be inflated by a few high-seizure patients. Per-patient dispersion reveals stability across the cohort (Zhao et al., 2022). |

| TSTR AUPRC | Can a detector trained on only synthetic seizures still detect real ones? | Isolates generator quality from augmentation effects. If TSTR fails, synthetic data lacks seizure-discriminative structure regardless of ratio. Used by Bing et al., 2022. |

| Wilcoxon signed-rank p-value | Is the improvement statistically significant across all 23 patients? | Non-parametric (no normality assumption). 23 paired LOPO observations. Standard for comparing paired fold results when normality cannot be assumed. |

Fidelity - Does synthetic data look like real EEG?

| Metric | What it answers | Why this metric? | ||||||||||||||||||||||||

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

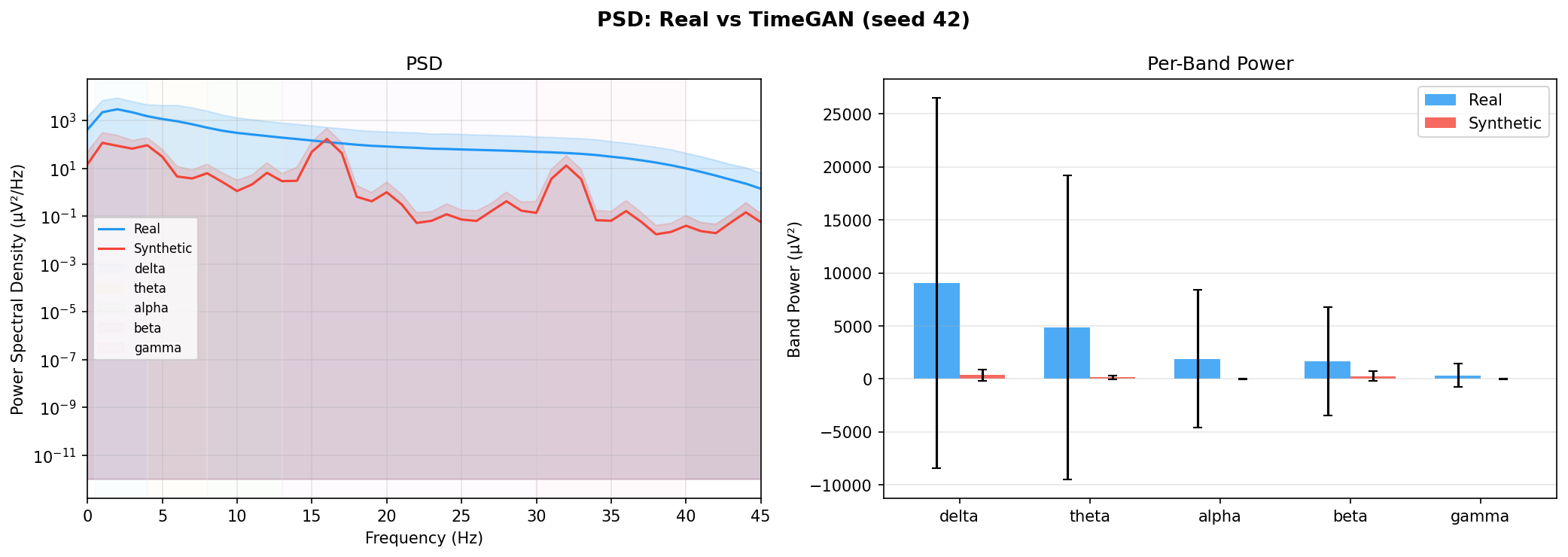

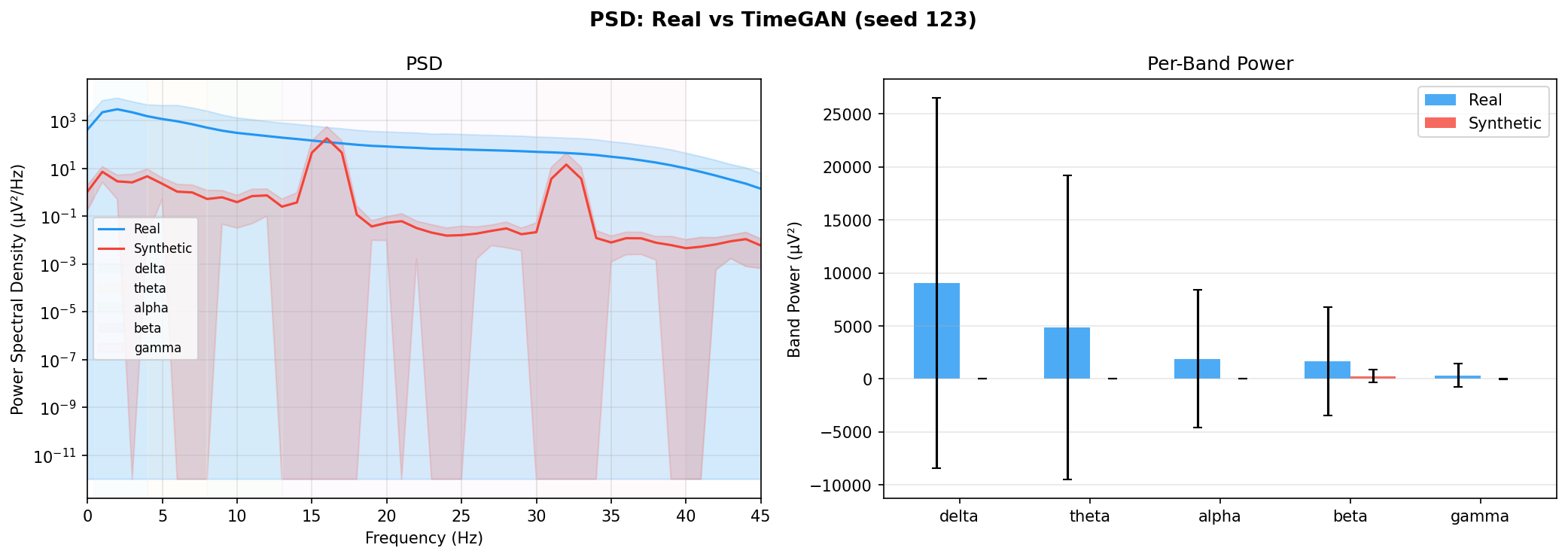

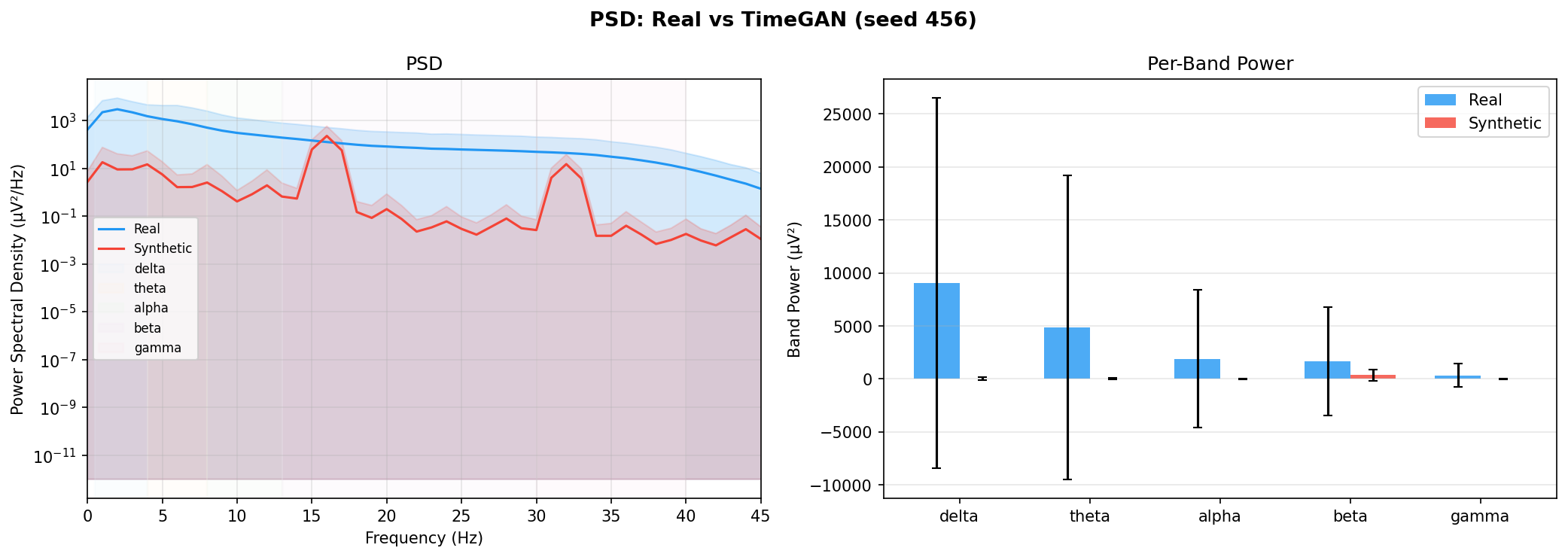

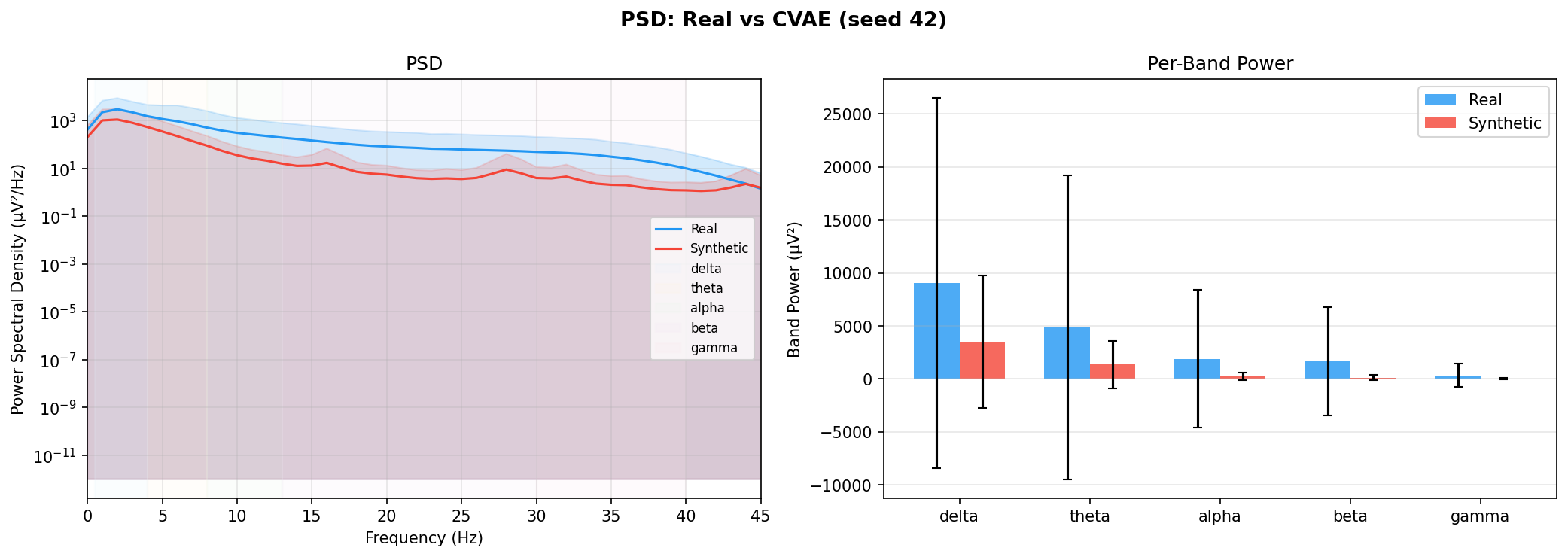

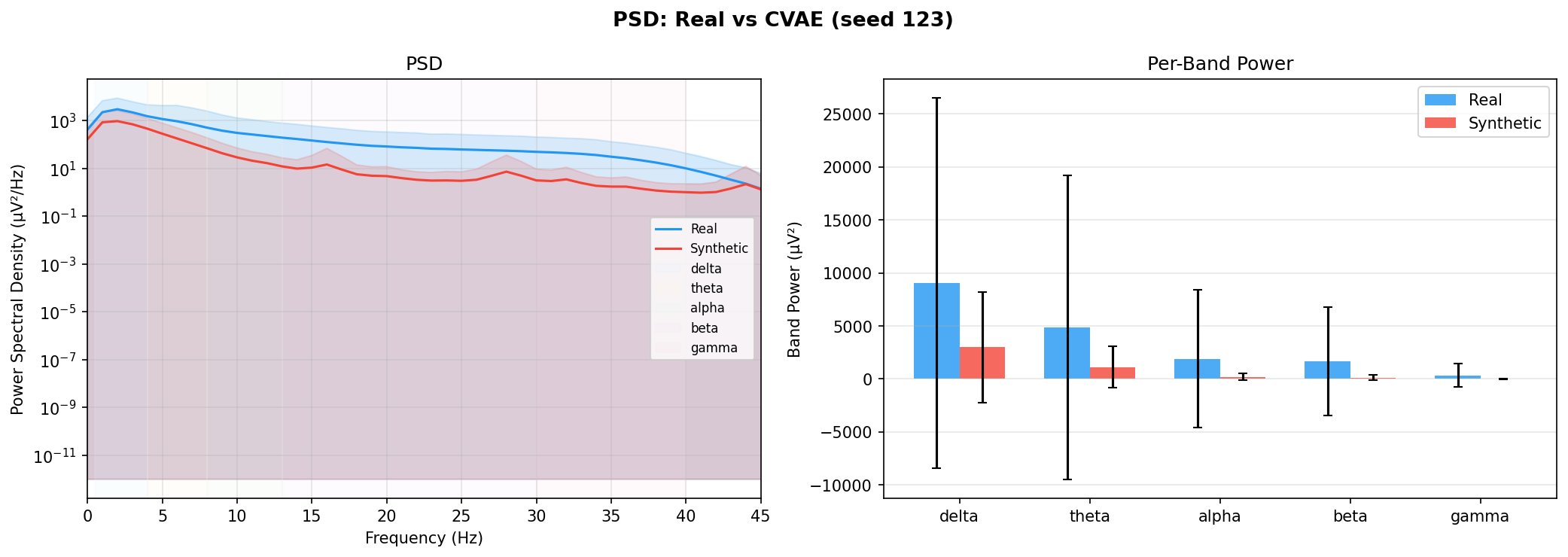

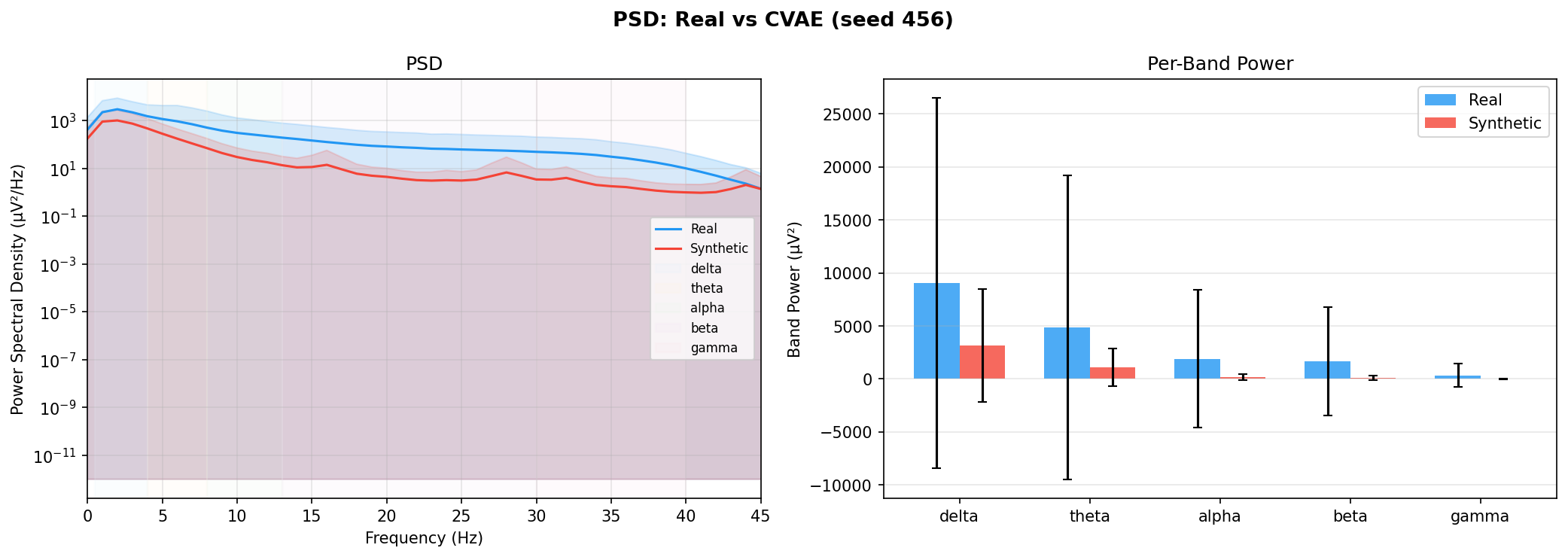

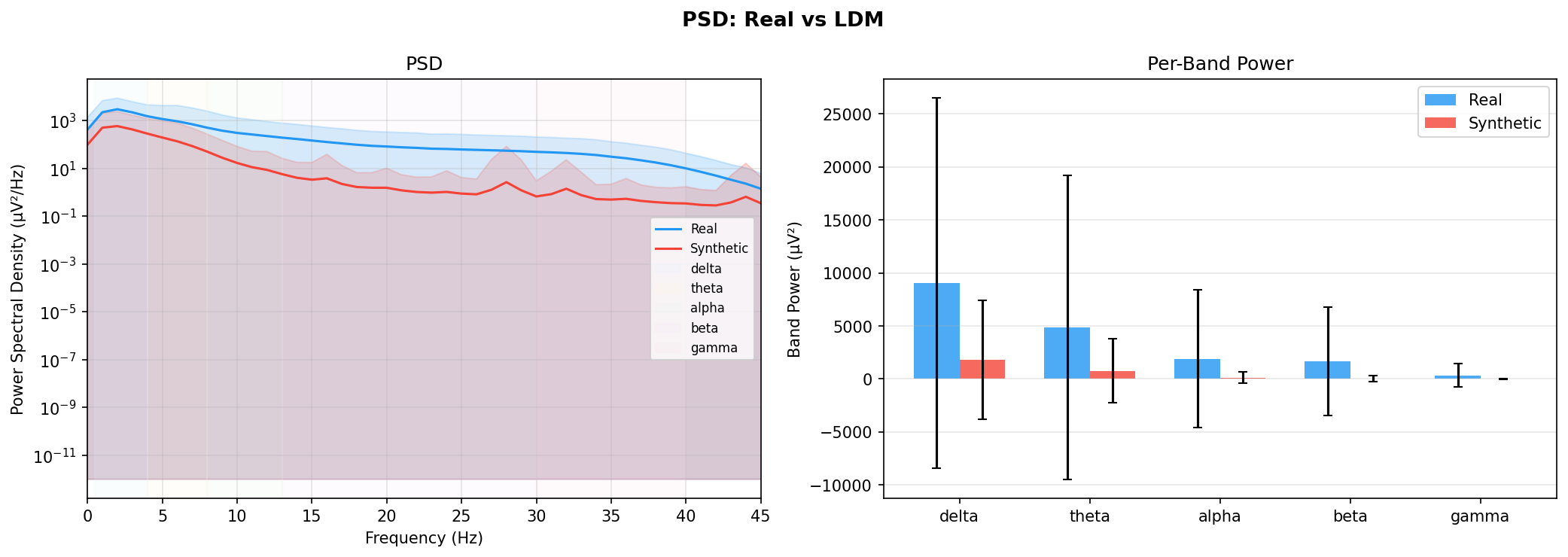

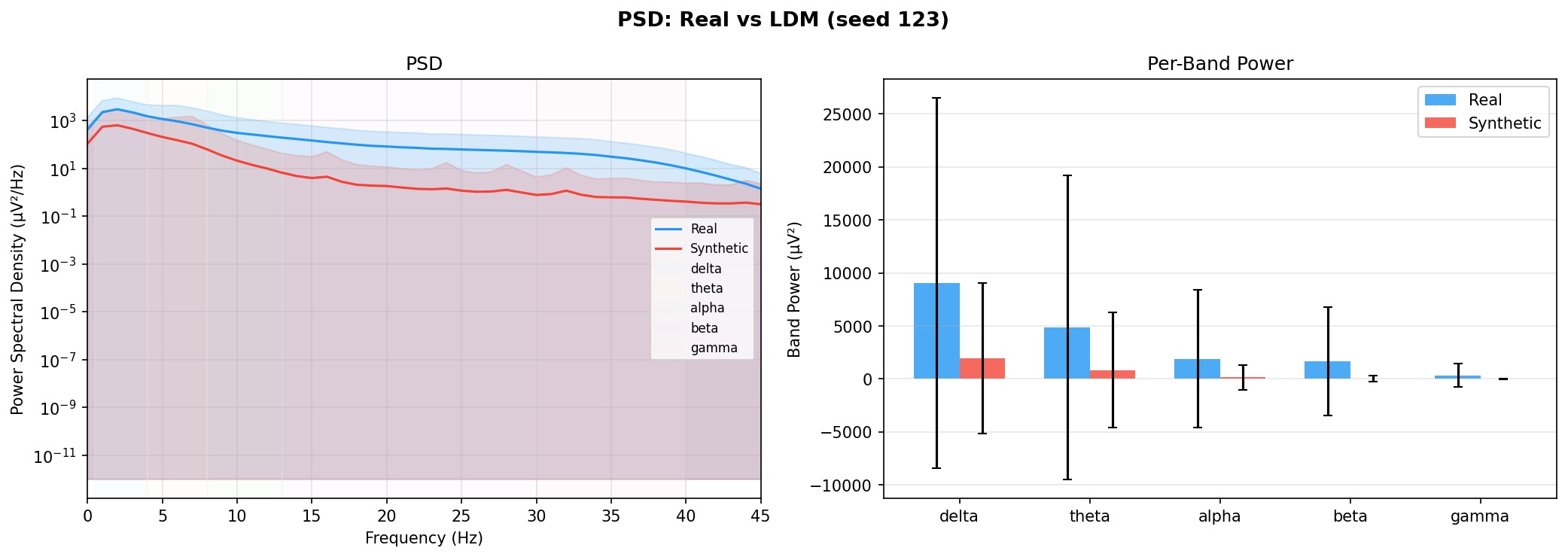

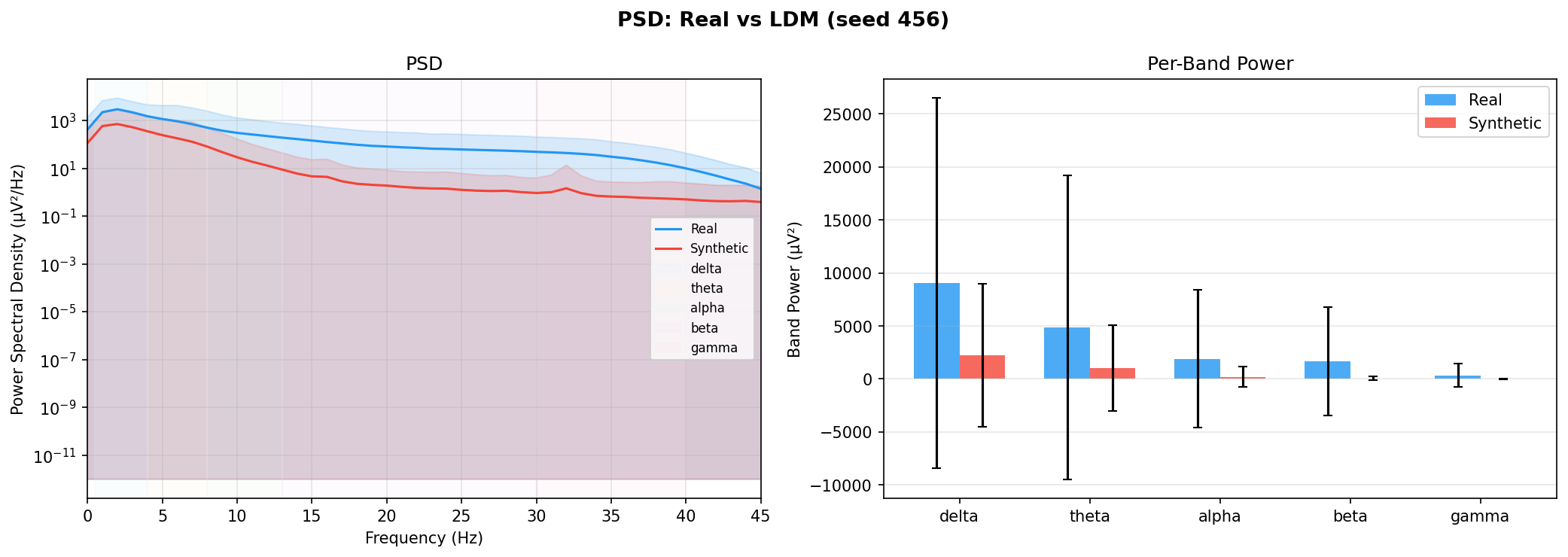

| PSD per frequency band | Does synthetic EEG have the same spectral shape (delta/ theta/ alpha/ beta/ gamma)? | Carrle et al., 2023 showed GANs smooth spectral peaks - spectral fidelity is the first thing to check. You et al., 2025 lists PSD as a standard generative quality metric (Table 3). Addresses RQ1, RQ5 - utility is frequently assessed, but time-series realism is not always tested directly. | ||||||||||||||||||||||||

| KL divergence per band | Quantifies how far synthetic spectral distribution is from real, per band | A scalar summary of PSD difference per band - makes cross-generator comparison possible (PSD plots are visual, KL is a number). Standard in distribution matching. | ||||||||||||||||||||||||

| C2ST (discriminative score) | Can a classifier tell real from synthetic? (50% = indistinguishable) | Tests the joint distribution (all features together), not just marginals. Introduced with TimeGAN (Yoon et al., 2019); applied to health sensors by Lange et al., 2024. | ||||||||||||||||||||||||

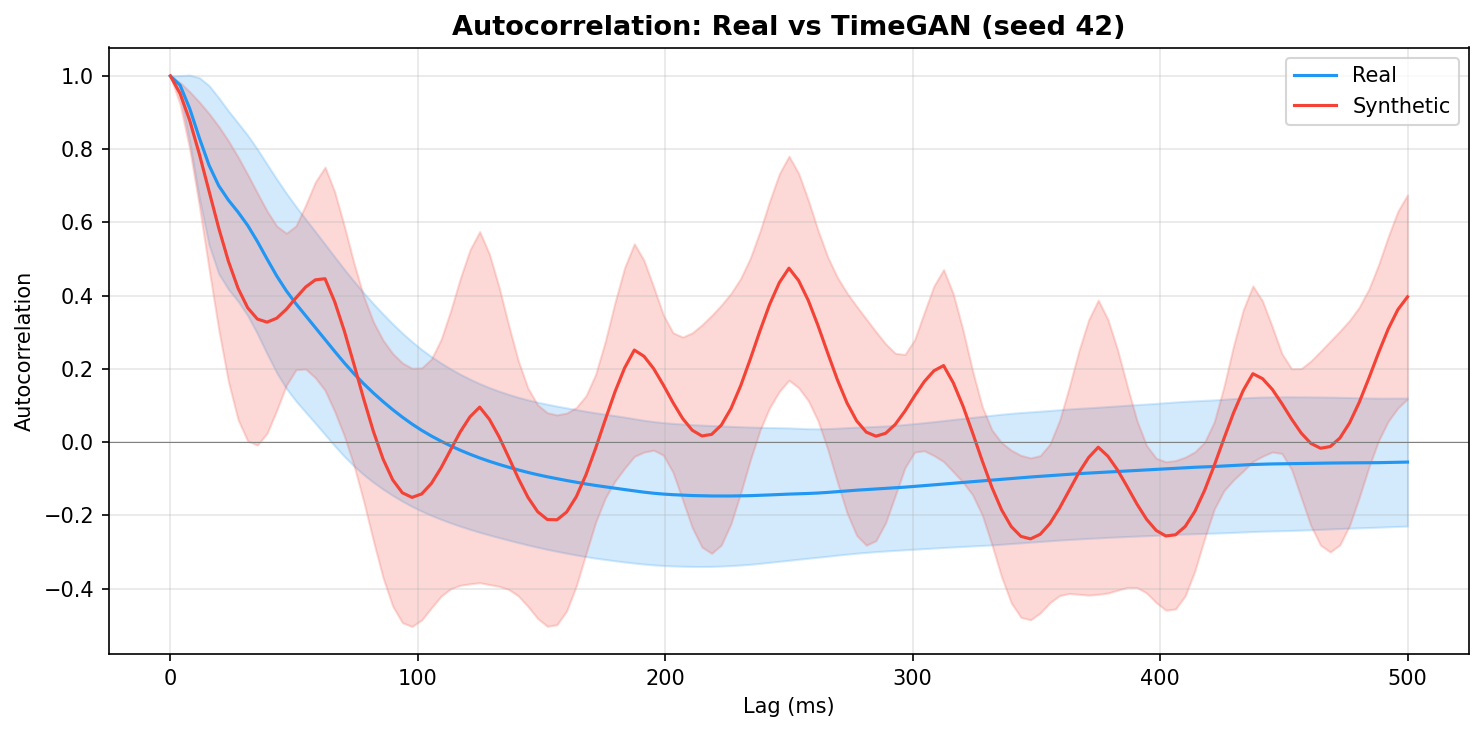

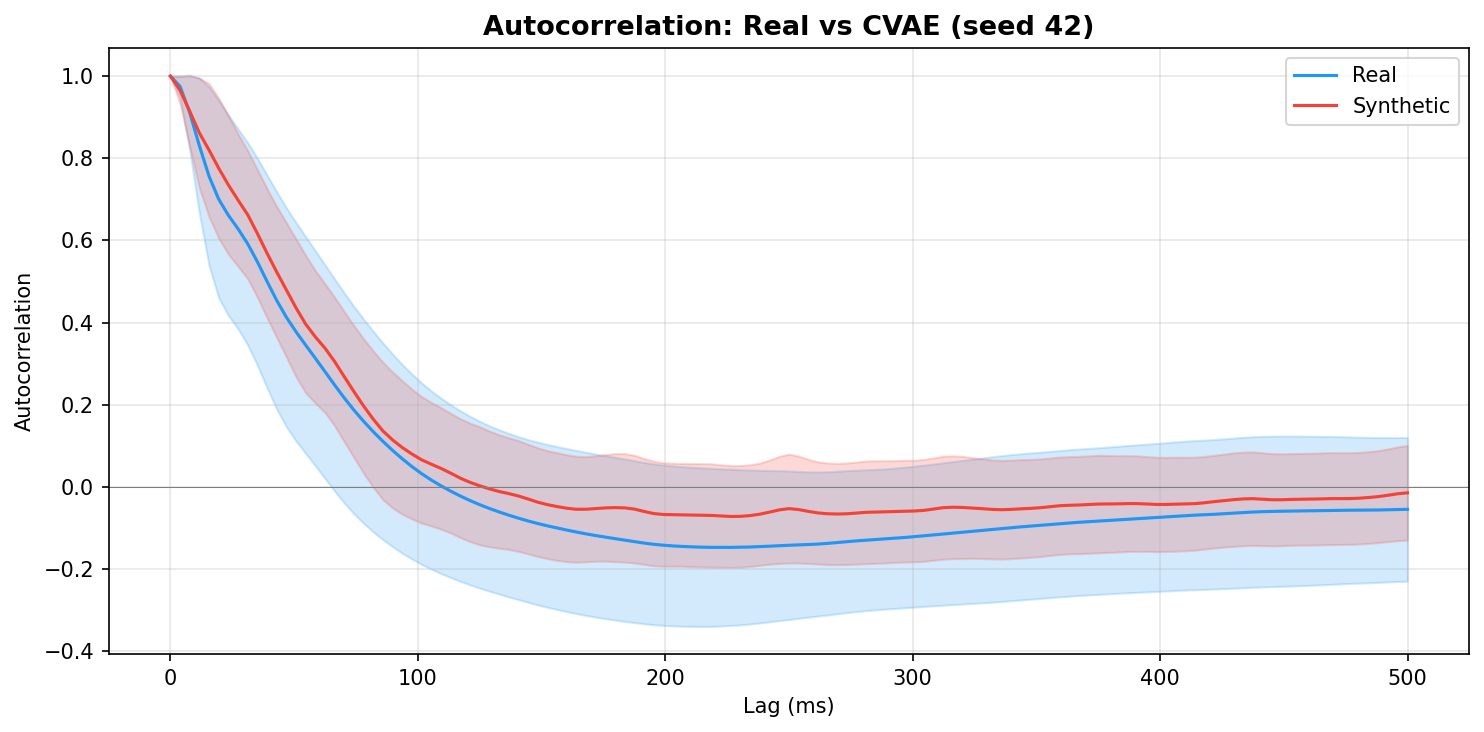

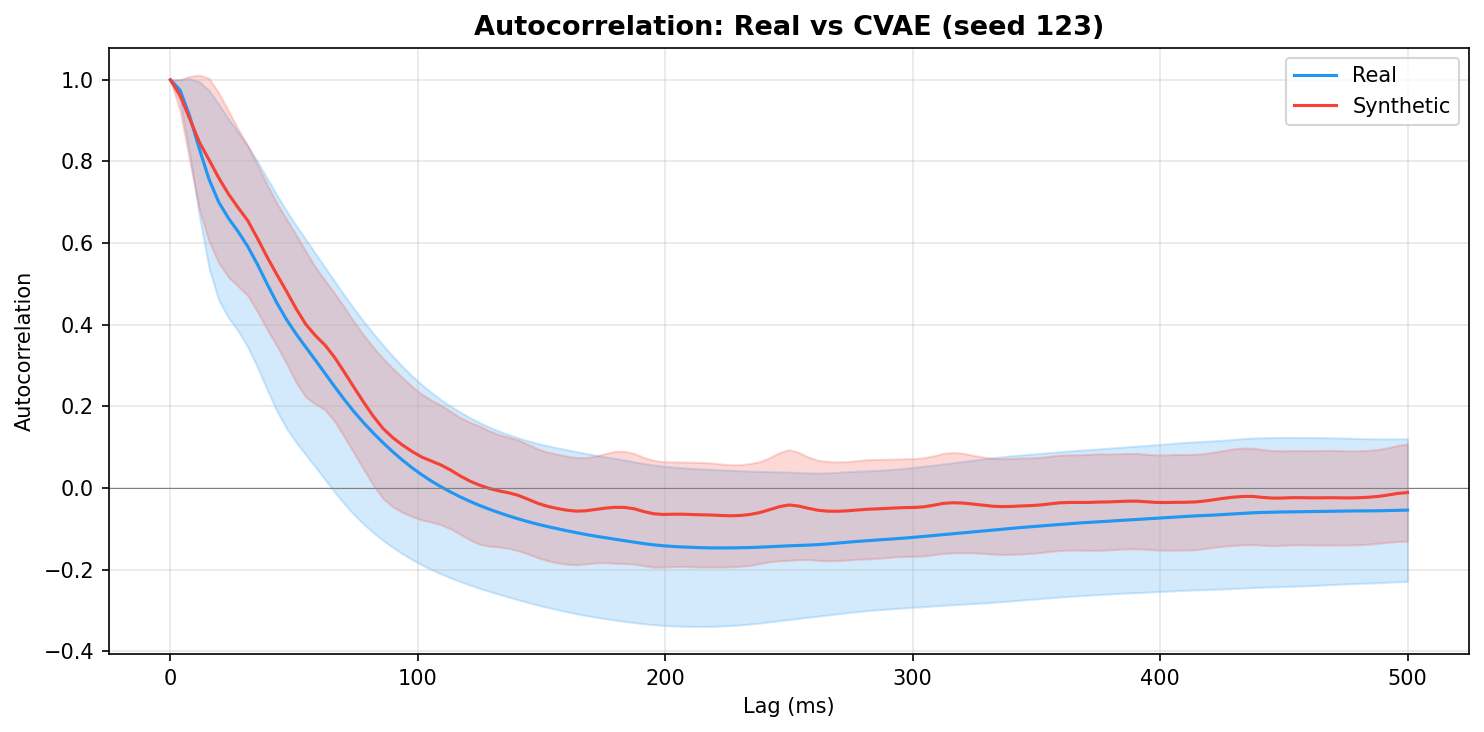

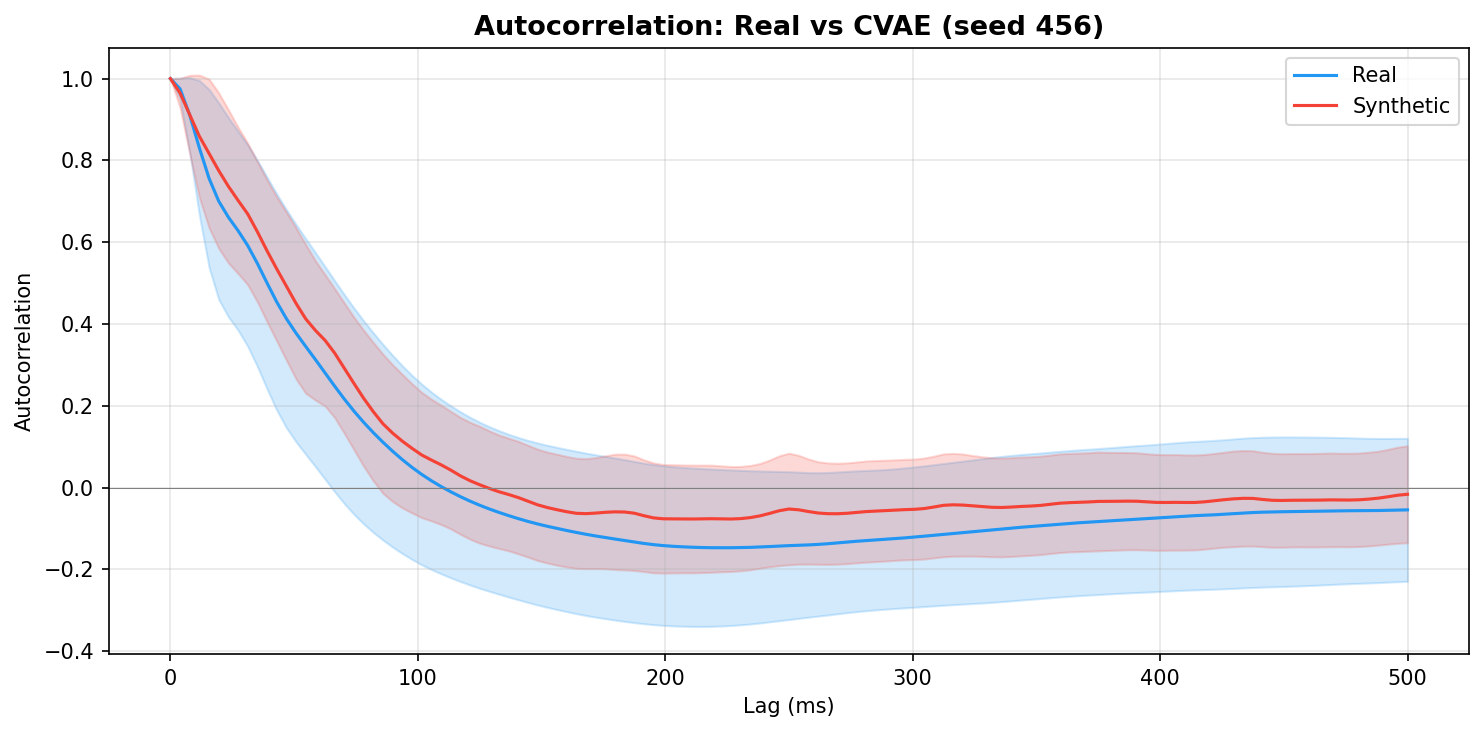

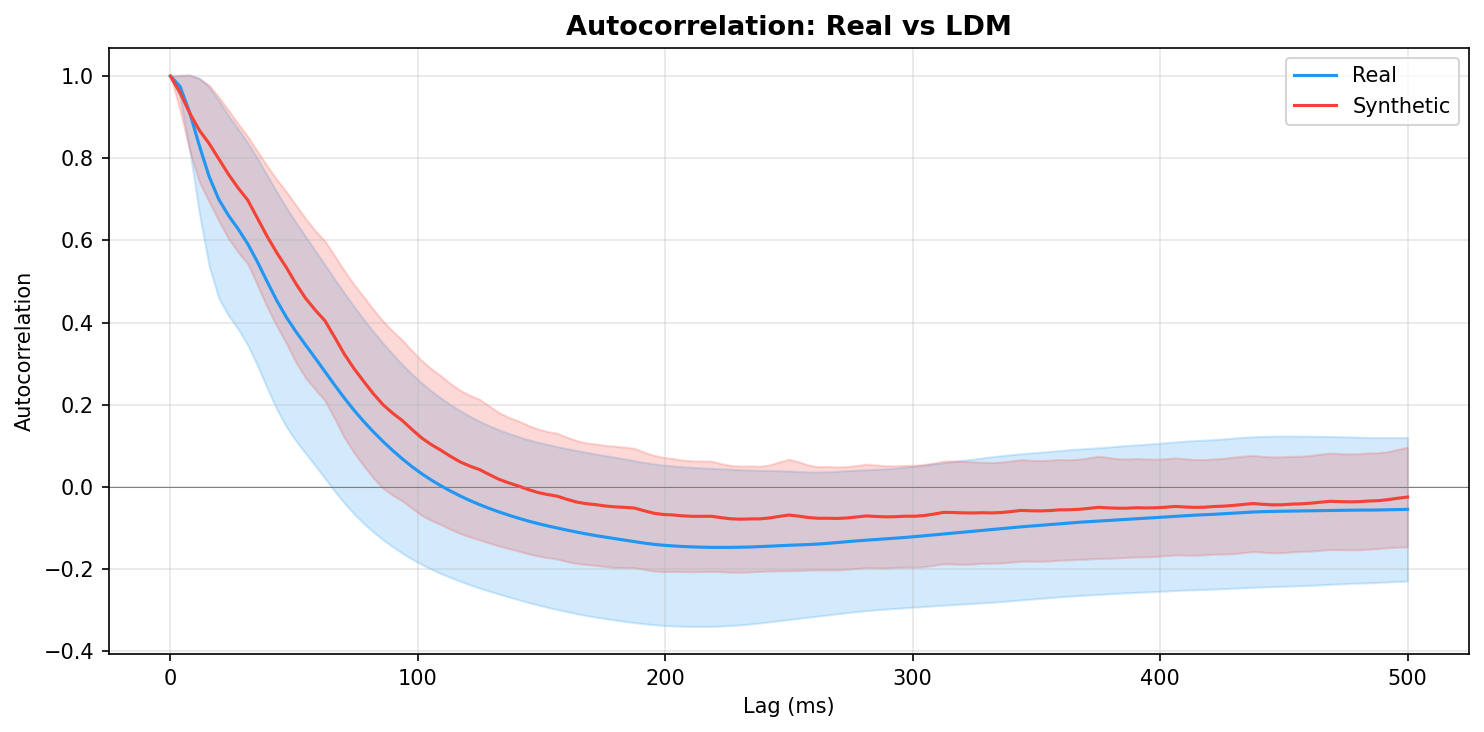

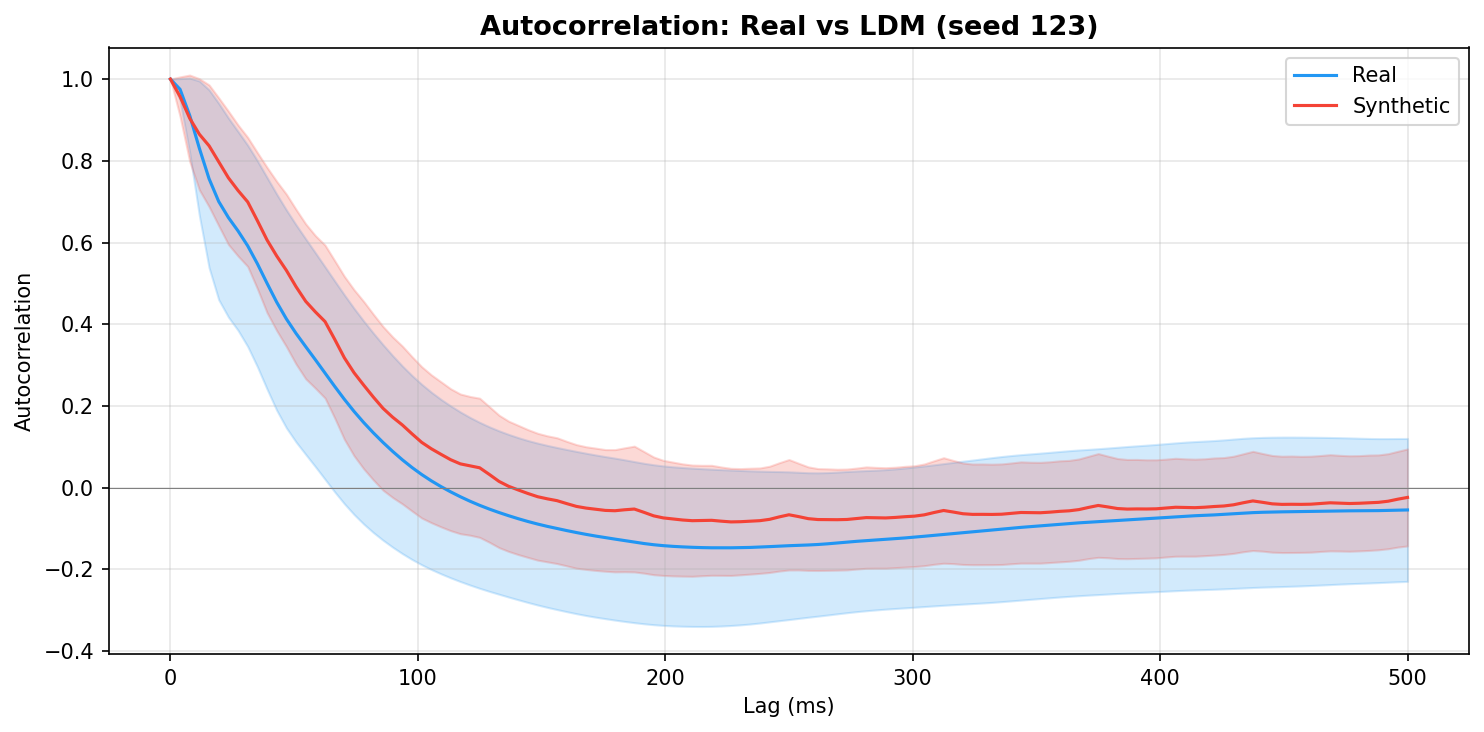

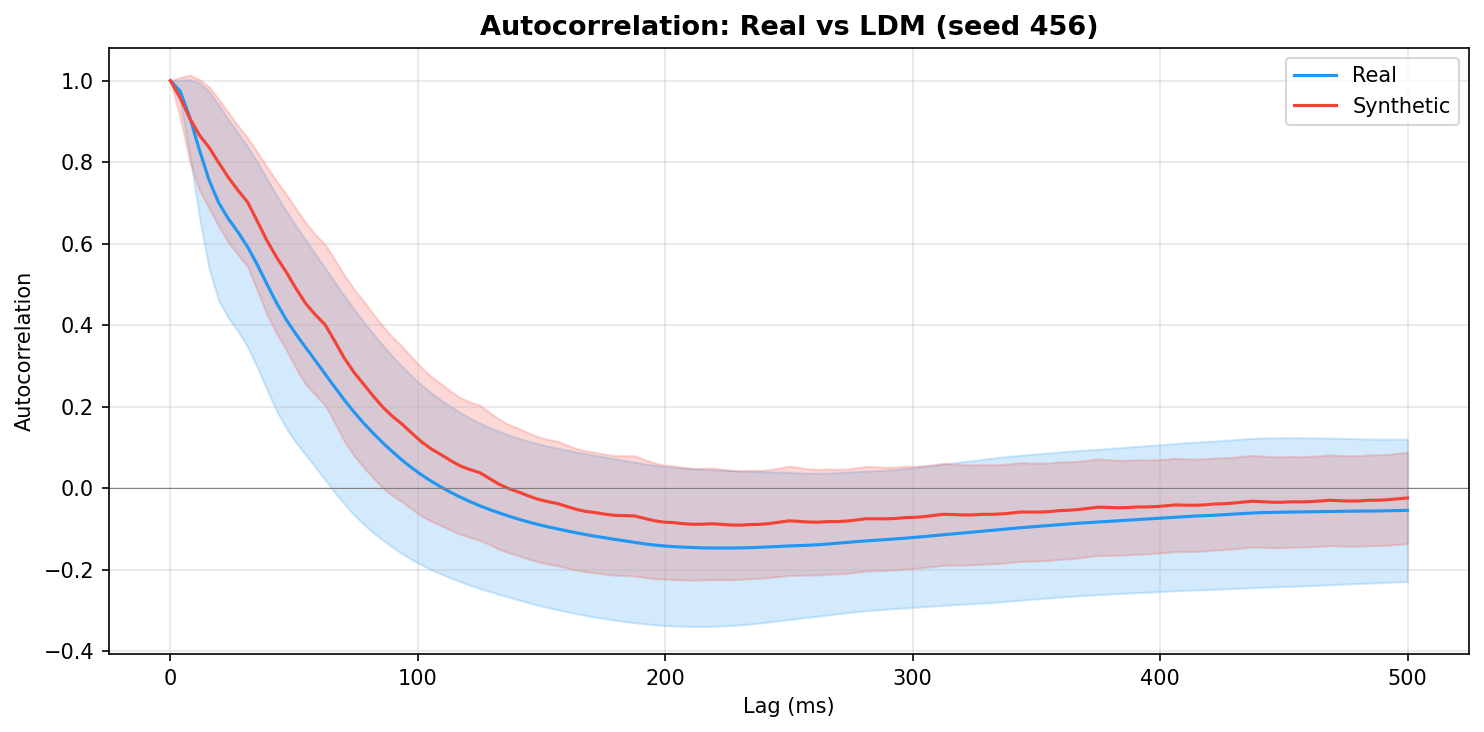

| Autocorrelation | Are temporal dependencies (how one time point relates to the next) preserved? | Lin et al., 2020 compute MSE between real and synthetic autocorrelation functions as a primary fidelity metric (Table 3), showing that temporal dependency preservation is essential for realistic time-series generation. A generator producing correct spectra but shuffled temporal structure would pass PSD but fail autocorrelation - the two are complementary. | ||||||||||||||||||||||||

| Cross-channel correlation | Are spatial relationships between EEG channels preserved? | Seyfi et al., 2022 compute MAE between real and synthetic correlation matrices "for each pair of channels in the EEG dataset" and show that preserving inter-channel correlations is essential for realistic multi-channel generation. Lange et al., 2024 compare Pearson correlation between signals as a quality metric for synthetic health sensor data. | ||||||||||||||||||||||||

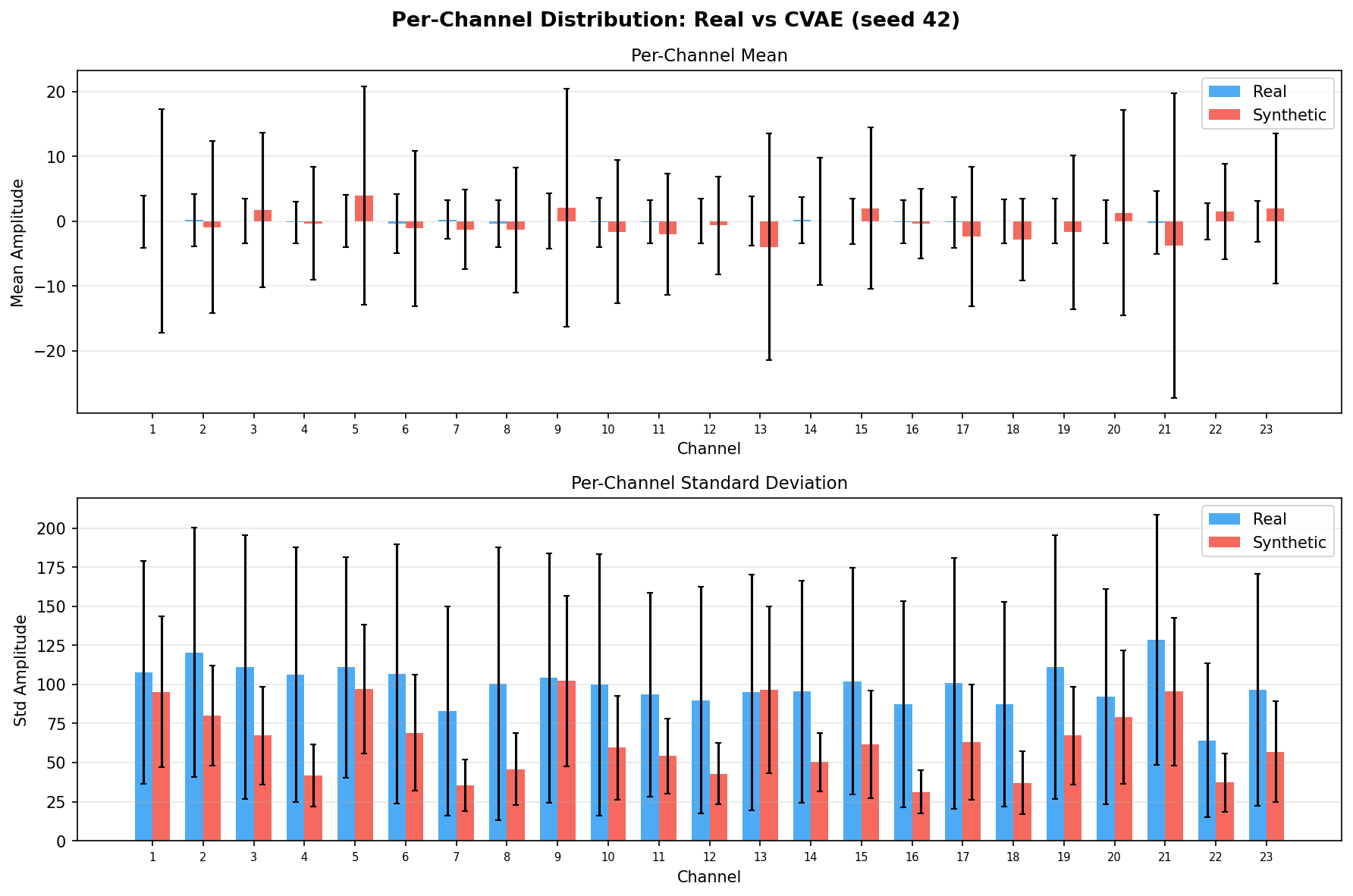

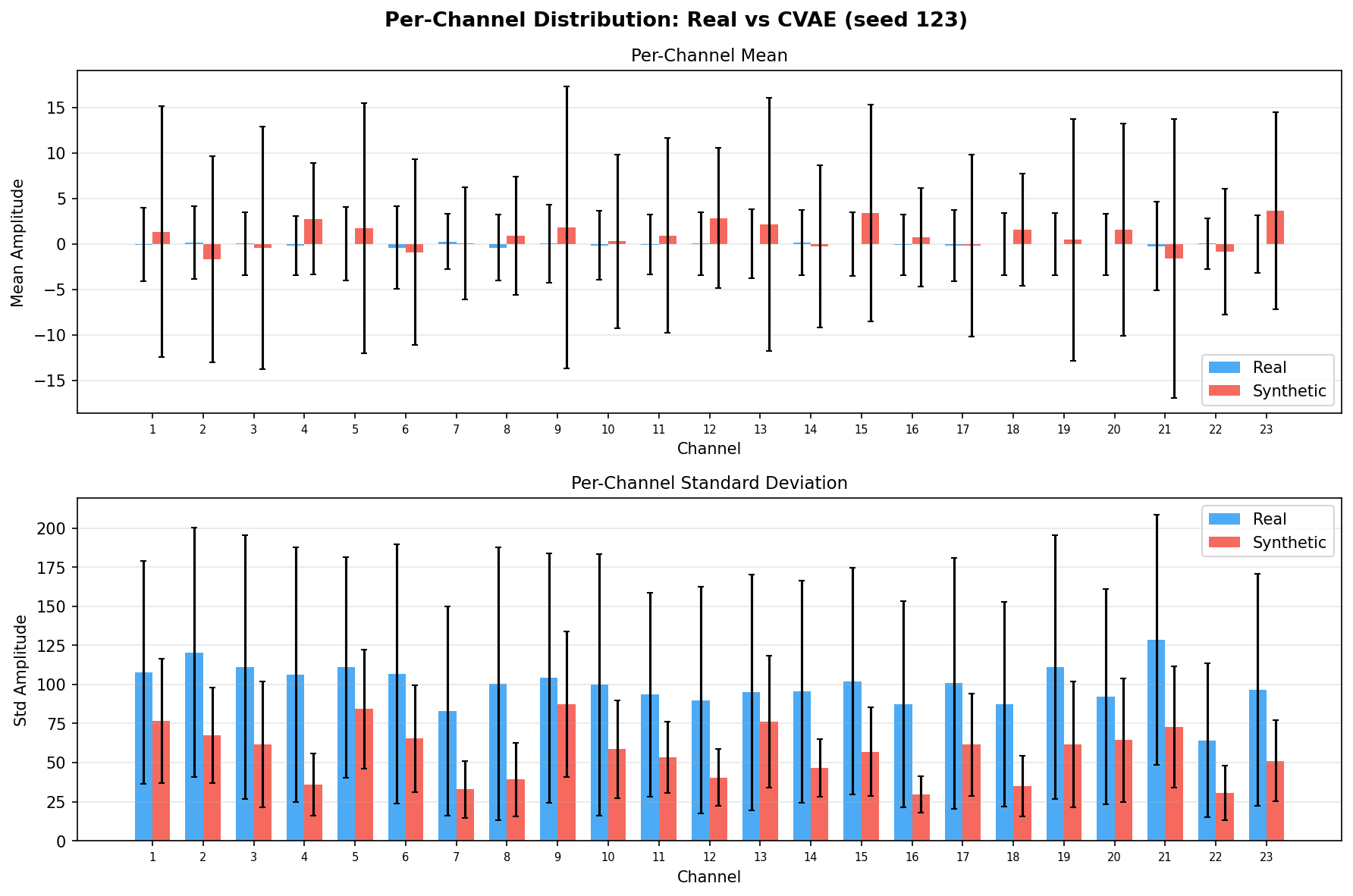

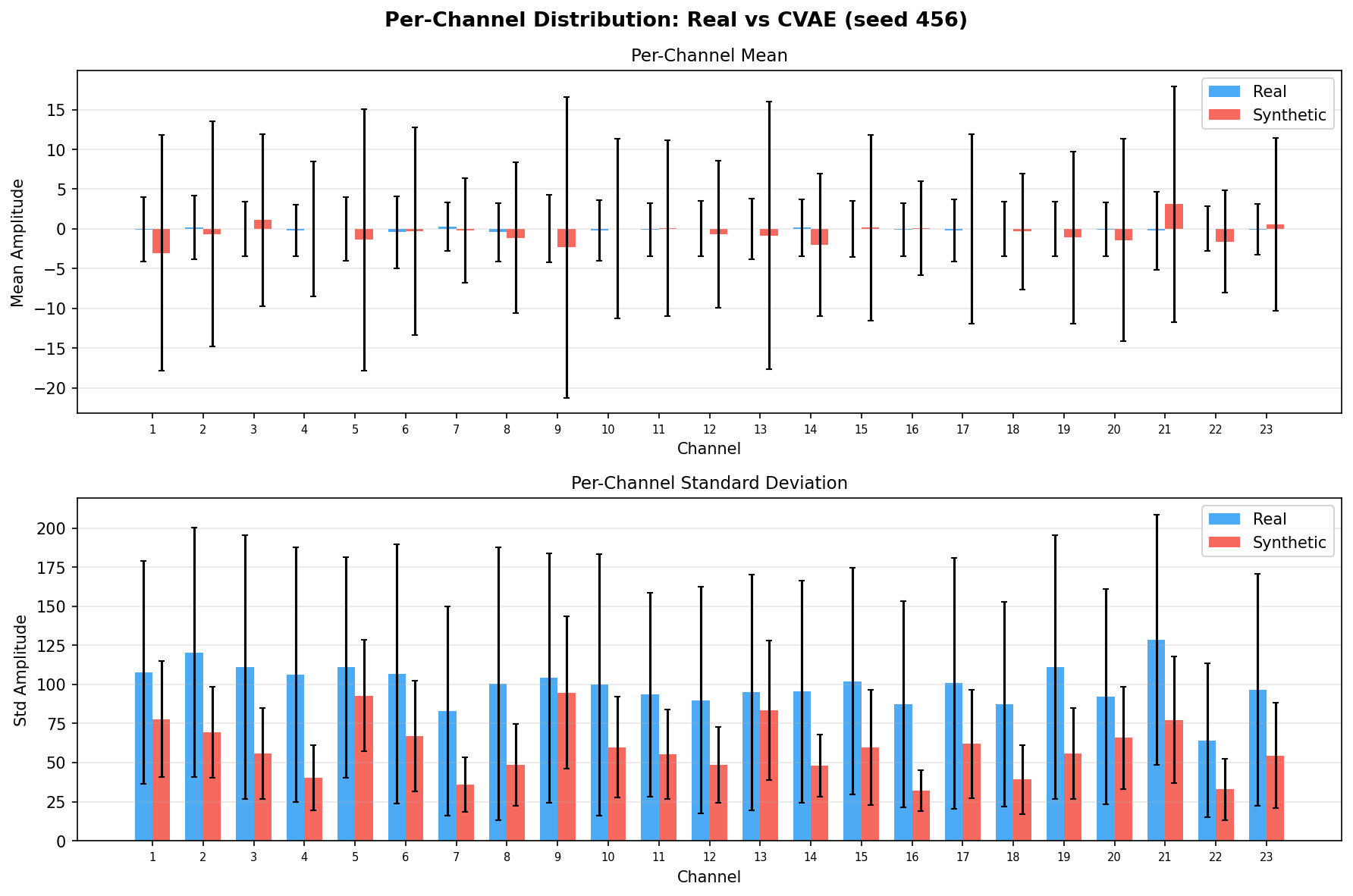

| Amplitude distributions | Are overall signal amplitudes realistic? | Lange et al., 2024 explicitly compare "the distribution density of signal values" between real and synthetic data via histograms as a core quality metric. You et al., 2025 Table 3 lists amplitude-level metrics (ABA) among generative quality measures. Histogram + Q-Q plot catch both global scale drift and distributional shape mismatch. | ||||||||||||||||||||||||

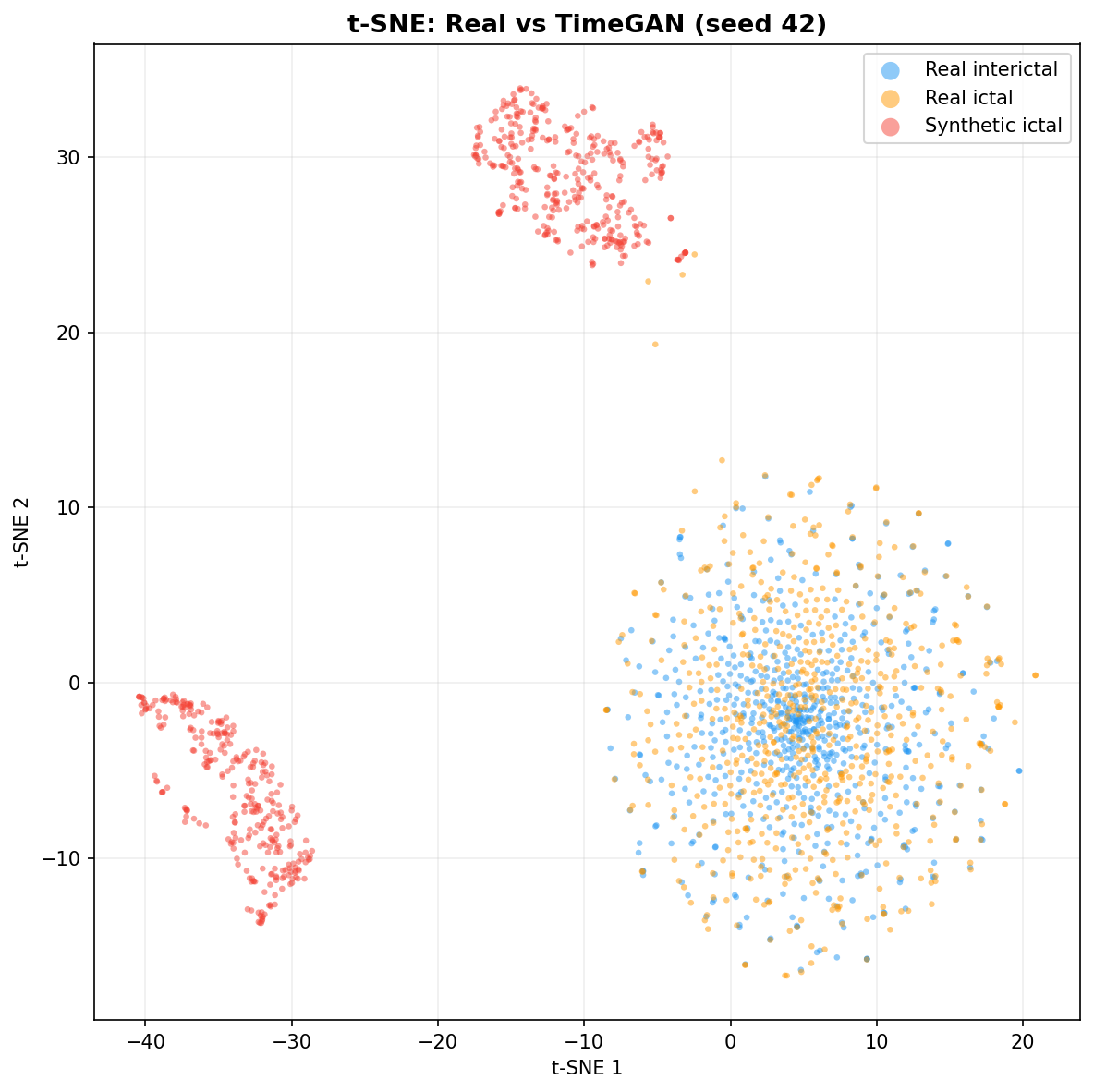

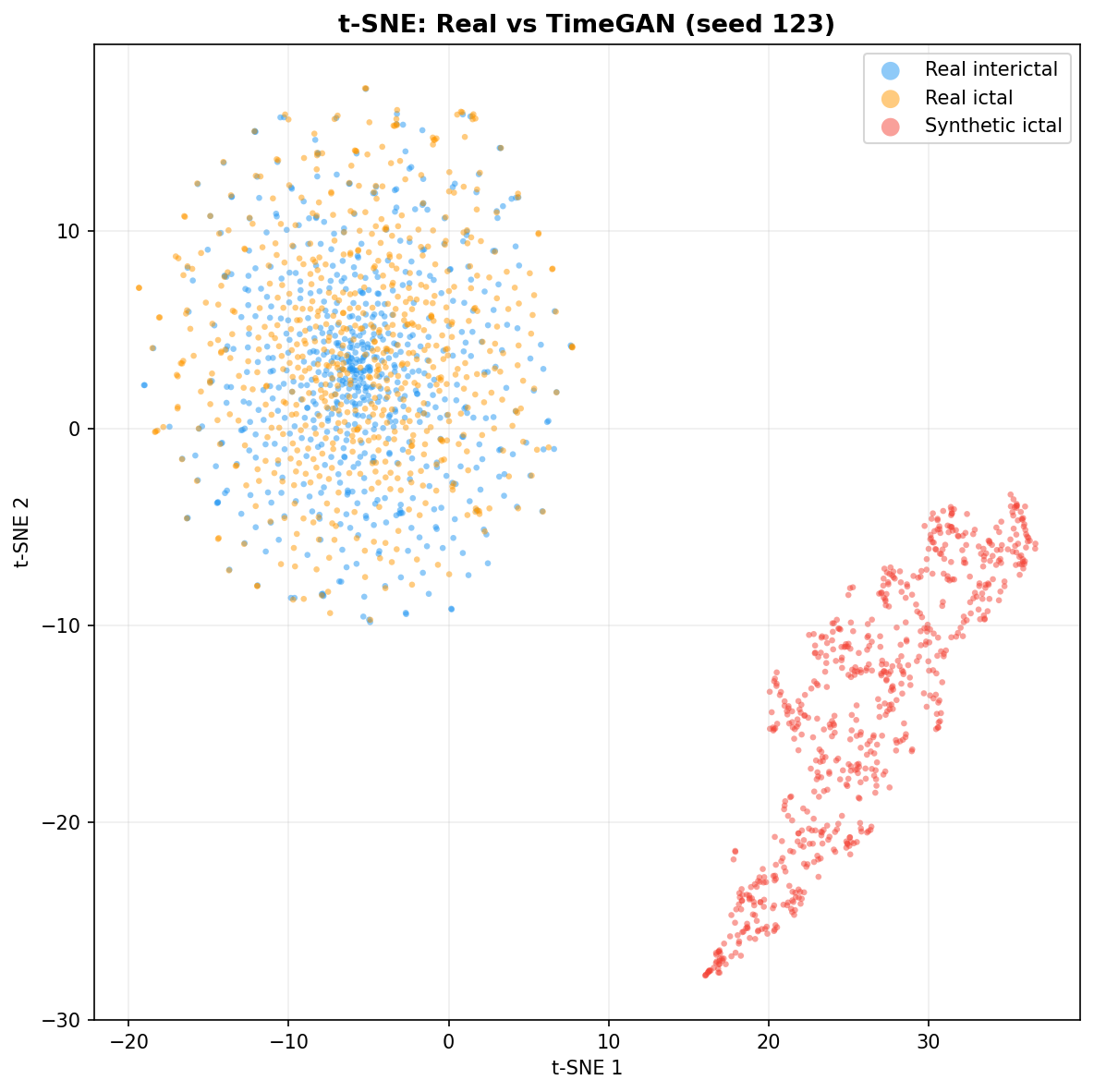

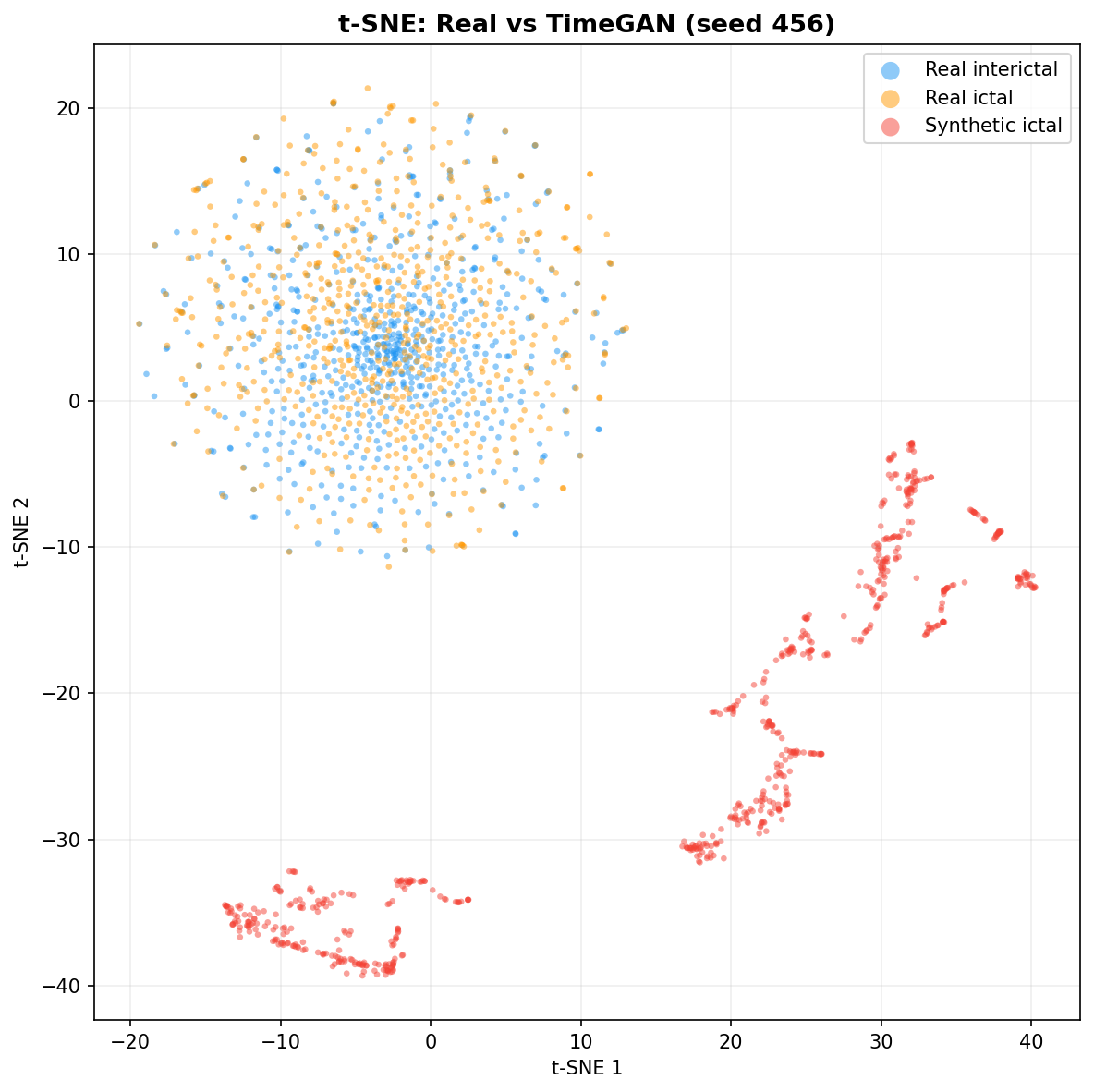

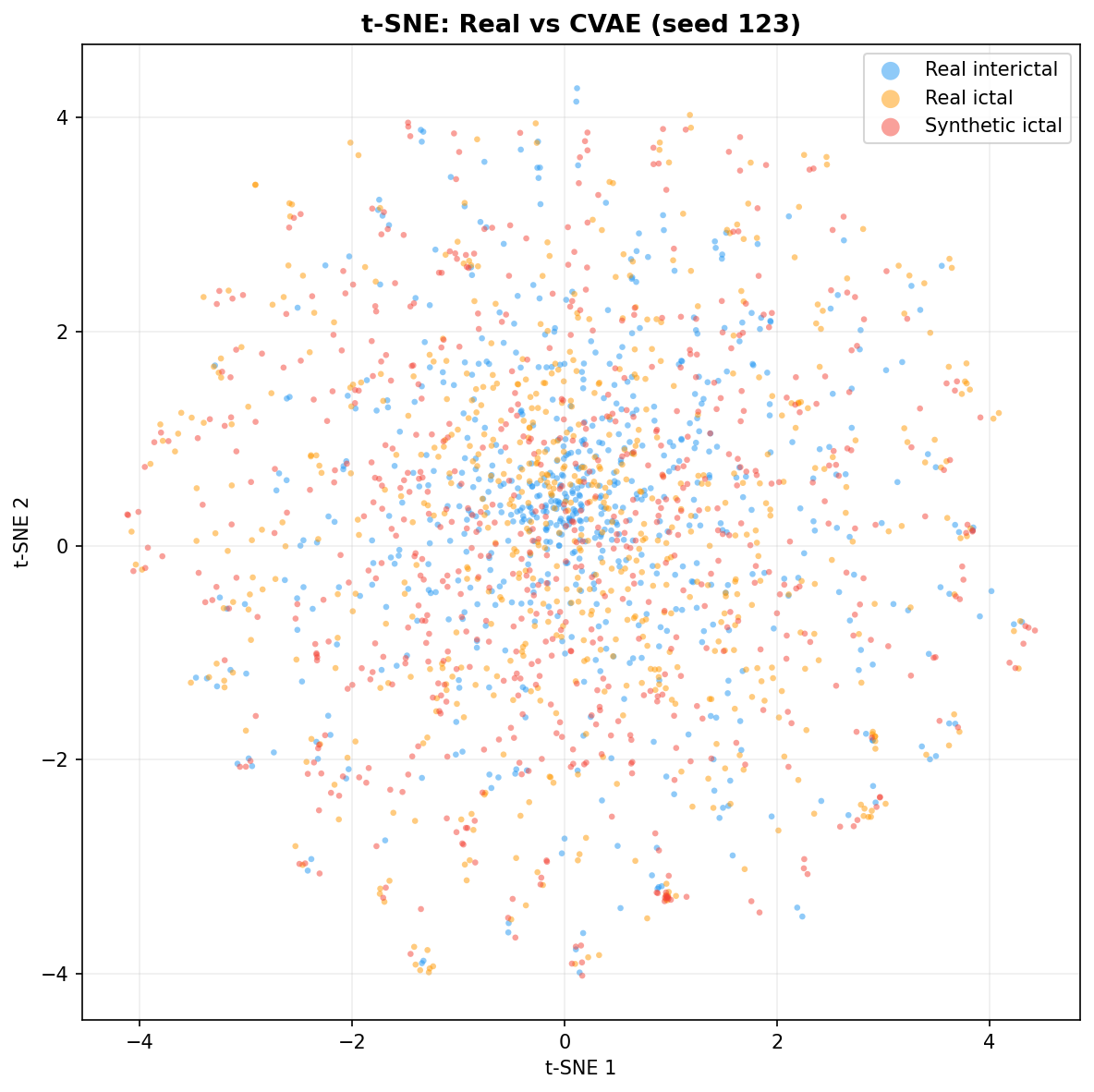

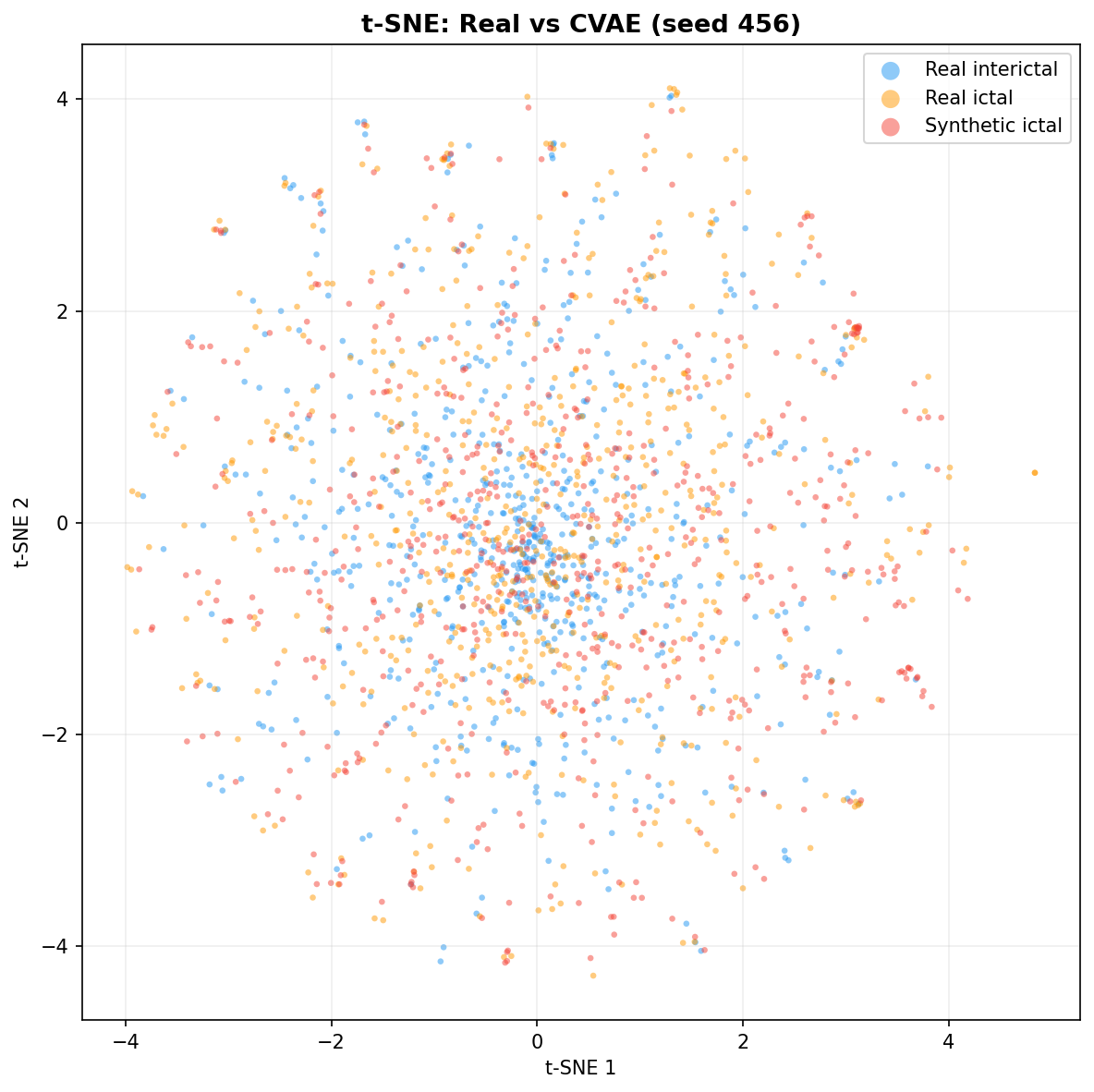

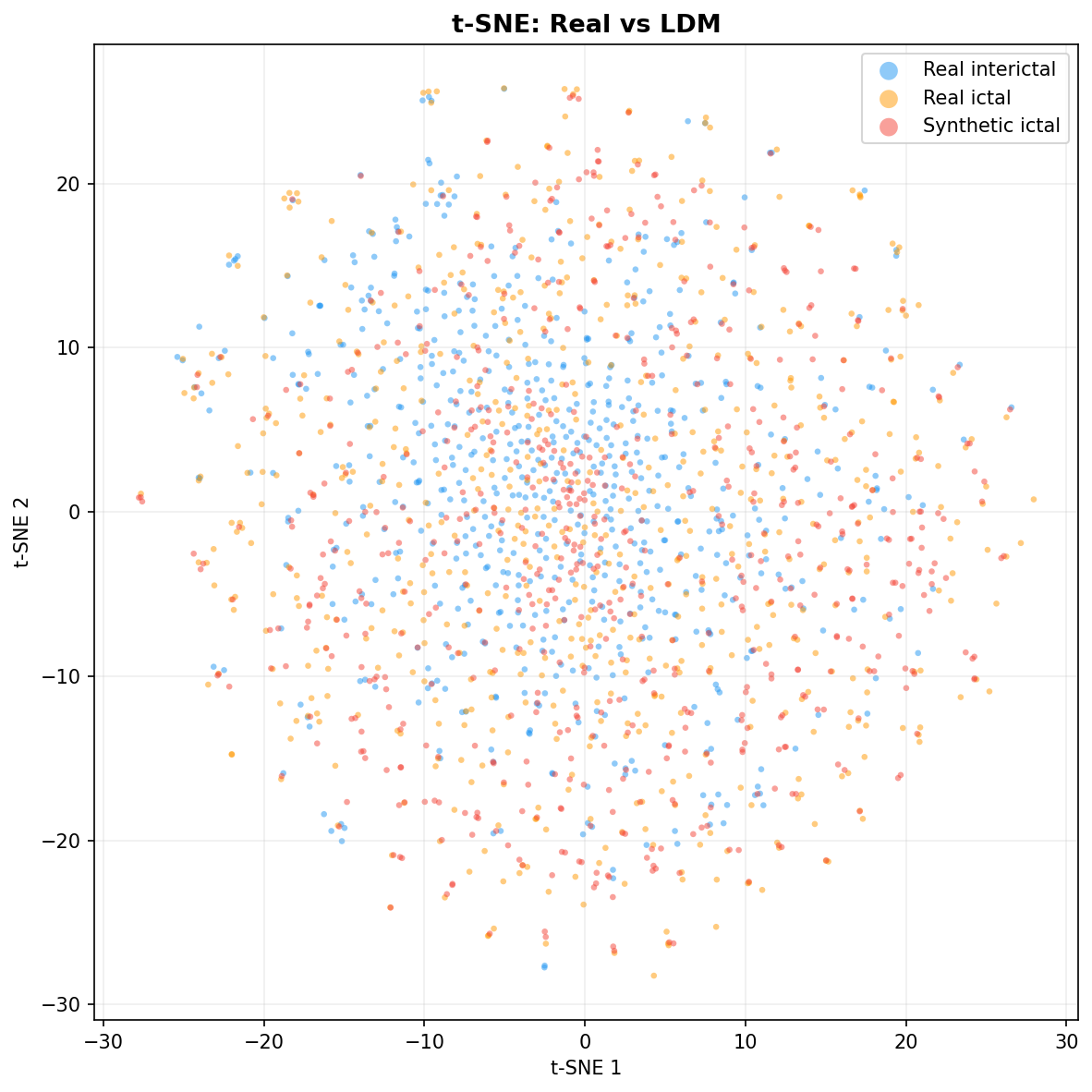

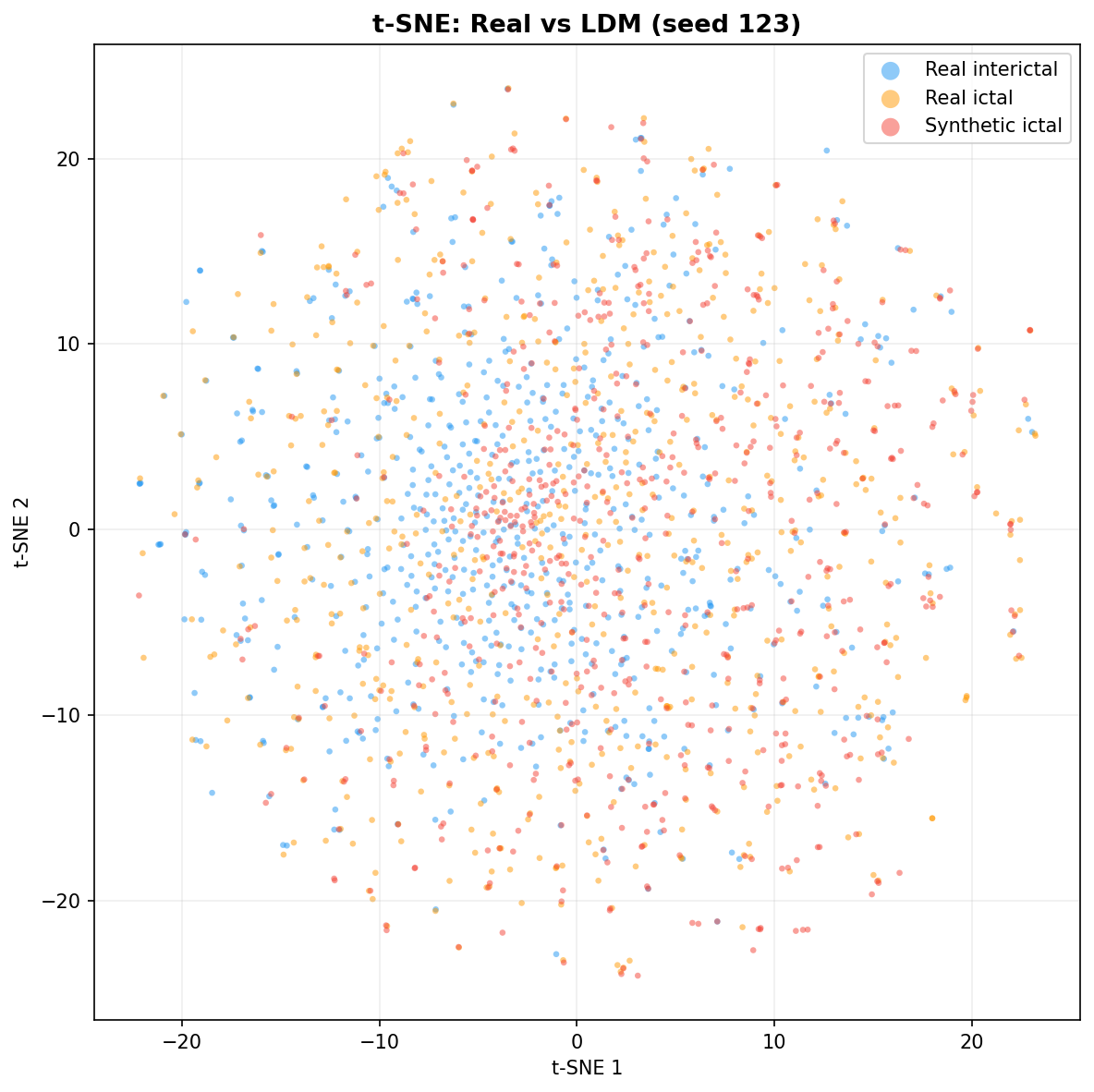

| t-SNE embedding | Do synthetic samples overlap with real data in feature space, or cluster separately? | Visual complement to C2ST. Reveals mode collapse (synthetic data clusters in one region) or mode dropping (some real patterns never generated). Not a scalar metric - for qualitative interpretation. | ||||||||||||||||||||||||

Excluded metrics and selection principlesThe literature reports dozens of fidelity metrics for synthetic data (You et al., 2025, Tables 2-3; Ibrahim et al., 2025, Section 2.3). We select metrics based on three principles, validated against adoption patterns across our 26-paper corpus (17 primary studies, 9 reviews):

The key insight: most metrics reported in EEG generation reviews (You et al., 2025; Carrle et al., 2023) originate in either paired settings (autoencoder/denoising) or image modalities. Neither applies to our unconditional class-conditional EEG generators. Our suite is designed for unpaired distributional evaluation of 1D multi-channel time-series generators, targeting the specific properties that matter for EEG: spectral shape, temporal structure, and inter-channel coupling. Notable: the dominant utility metric in EEG generation papers is accuracy (12/17 primary studies, e.g. Ahuja et al., 2024; Boukhennoufa et al., 2023; Sobhani et al., 2025; Waters et al., 2024), which is uninformative at <1% seizure prevalence. AUPRC appears in 0/26 SLR papers - however, it is established in the broader seizure detection literature (Yuan et al., 2019; Constantino et al., 2021; Manzouri et al., 2022; Yamada et al., 2025; Park et al., 2026), with theoretical justification in Saito & Rehmsmeier, 2015. Adopting it in the EEG generation context is a gap-filling contribution. |

||||||||||||||||||||||||||

Privacy/ Memorisation - Is the generator copying patients?

| Metric | What it answers | Why this metric? |

|---|---|---|

| Subject-ID linear probe accuracy | Can a classifier recover which patient the data came from? High accuracy = memorisation risk | If a generator memorises patient-specific signatures, "synthetic" data is really patient data with extra steps - defeating the privacy purpose. Motivated by You et al., 2025 and Gonzales et al., 2023 - privacy and memorisation risks are rarely evaluated alongside utility (RQ4). |

| k-NN proximity (synth→real) | How close are synthetic samples to their nearest real neighbors in embedding space? | Complements the linear probe: even if patient ID isn't explicitly recoverable, synthetic samples that sit on top of real ones may be near-copies. Operates in cosine distance on frozen detector embeddings. |

| Proximity ratio | synth-to-real distance / real-to-real distance. Below 1.0 = suspicious closeness | Normalises proximity against a baseline. Real data naturally has some self-similarity - the question is whether synthetic data is closer than real data is to itself. A thesis-specific contribution (no standard benchmark exists for EEG proximity). |

| Excluded: Membership inference | - | Tests if an adversary can tell whether a specific sample was in the training set. Requires a shadow-model training loop and assumes the attacker has access to auxiliary data from the same distribution. Our subject-ID probe addresses a different and more domain-relevant question: not "was this in training?" but "which patient did this come from?" - because in LOPO, the sensitive attribute is patient identity, not dataset membership. Re-identification is a distinct privacy risk from membership inference (Ibrahim et al., 2025, Section 2.3). Our k-NN proximity ratio complements this by detecting near-copies without requiring shadow models. |

| Excluded: Differential privacy (DP-SGD) | - | Provides formal mathematical privacy guarantees by adding calibrated noise during training. Excluded because: (1) formal DP requires a privacy budget (epsilon) chosen a priori, adding a hyperparameter orthogonal to our research questions; (2) the privacy-utility tradeoff means DP-trained generators produce lower-quality synthetic data (Ibrahim et al., 2025: "underscoring the often-present trade-off between privacy and utility"), confounding the comparison of generator architectures; (3) our research questions ask whether generators memorise, not whether we can prevent it - that is a separate engineering decision downstream of results. |

Efficiency - Is the improvement worth the compute?

| Metric | What it answers | Why this metric? |

|---|---|---|

| AUPRC gain over baseline | How much better than real-only training (E1)? | The denominator for cost-benefit. Without a gain, there is no benefit to justify any cost. Computed from aggregated LOPO results. |

| Total time (train + generate) | How many GPU-hours does the full pipeline cost? | Practical concern: a generator that takes 100h for +0.01 AUPRC is not viable in a clinical setting. Epoch times are recorded per fold during training and aggregated in E6. |

| Gain per hour | AUPRC improvement normalised by total compute time | Ranks generators by bang-for-buck. A simpler model with modest gains may be more practical than a complex one with marginal extra improvement - a finding echoed by the broader augmentation literature (see Carrle et al., 2023 on diminishing returns). Part of E6 cross-generator analysis. |

| Diminishing returns flag | Does adding more synthetic data (higher ratio) start hurting performance? | Carrle et al., 2023 found gains plateau or reverse beyond 100% (r=-0.37 between baseline accuracy and augmentation benefit). Detects "too much synthetic" degradation. |

Why all four axes? Utility alone is insufficient: a generator could improve AUPRC by memorising patients (fails privacy), produce high-scoring but spectrally unrealistic signals (fails fidelity), or require impractical compute (fails efficiency). This multi-axis approach directly addresses SLR Gaps 3 and 6 - most prior EEG augmentation work only reports utility (Boukhennoufa et al., 2023; You et al., 2025).

Implementation status: Utility and fidelity metrics run automatically per experiment. Privacy analysis (E7) and efficiency analysis (E6 post-hoc) are fully implemented but will execute once the LOPO evaluation completes for all generators - they need cross-experiment data to produce meaningful comparisons.

Research Gaps Identified in the SLR

These gaps directly motivate the experimental design and research questions:

CHB-MIT Scalp EEG Database

Boston Children's Hospital/ MIT · PhysioNet v1.0.0 (2009) · 23 unique subjects across 24 cases with intractable epilepsy

Re-Recording: chb01 = chb21

chb21 was recorded 1.5 years after chb01, from the same female subject. This is the only re-recording in the dataset. For train/test splitting, chb01 and chb21 must always stay on the same side of any patient-level split.

The original database was published in 2009. chb24 was added in December 2010 and is absent from SUBJECT-INFO (no gender or age on record). Total: 24 cases from 23 unique subjects - 5 males, 17 females, 1 unknown.

Recordings per Patient

Non-seizure and seizure files stacked per patient case

Patient Age Distribution

Ages range from 1.5 to 22 years (pediatric cohort)

Gender Distribution

17 females, 5 males; chb24 has no gender/age on record

Patient Profiles

24 cases from 23 unique subjects. chb21 highlighted as the only re-recording.

| Patient | Gender | Age | Files | Seizure | % |

|---|

Data Pipeline

From raw EDF files to normalized, windowed tensors. Covers cleaning, signal preprocessing, windowing, caching, and parameter justifications.

Phase 1: EDF Cleaning

Homogenization of raw CHB-MIT EDF files into clean, standardized EDF+ format

Cleaning Pipeline (homogenize.py)

Cleaning Results

Channels Removed

Key Challenges Solved

TARGET_MONTAGE). Missing channels zero-padded; extra channels dropped. QC rejects windows with flat (zero-padded) channels.Output: Standard 10-20 Bipolar Montage (23 channels)

The 23 bipolar channels retained after removing non-EEG channels and resolving duplicates.

Per-Patient Cleaning Summary

What the homogenization pipeline actually did to each case. Colour legend: ● stable montage ● montage split required ● non-EEG channels removed.

| Case | Montage | Channels Removed | Action taken |

|---|

Phase 2: Signal Preprocessing

Literature-grounded preprocessing pipeline applied to the homogenized EEG signals before windowing and model training

Why Preprocess?

Raw scalp EEG is full of things that aren't brain signals: powerline hum at 60 Hz, slow drifts from electrode chemistry, muscle noise from jaw clenching or eye movements, and the occasional electrode pop when something shifts. If generators train on this raw signal, they learn to reproduce artefacts alongside real neural patterns - and the synthetic data inherits those artefacts (Carrle et al., 2023). The pipeline below cleans the signal while keeping all the clinically relevant information, and it's applied consistently across all experiments so comparisons are fair.

Importantly, normalisation parameters come from training data only. This prevents information about test patients from leaking into the pipeline.

Preprocessing Pipeline (applied after homogenization, before windowing)

data/loader.py)scipy.signal.iirnotch, Q=30Powerline interference is an environmental, non-biological artefact caused by electromagnetic fields from the electrical mains supply. In the United States (where the CHB-MIT recordings were made at Boston Children’s Hospital), the mains frequency is 60 Hz. This interference couples capacitively and inductively into the electrode leads and amplifier circuits, producing a sustained sinusoidal component at 60 Hz and its harmonics (120 Hz, 180 Hz, etc.) that is superimposed on the genuine neural signal.

Sobhani et al., 2025 explicitly identify power-line interference as one of the noise sources in raw EEG: “A variety of noise and artifacts, including power-line interference, eye movements, and muscle activity, are commonly present in raw EEG recordings.”

The 60 Hz line noise falls squarely in the gamma band (30–100+ Hz). If left unremoved, it contaminates power spectral density estimates, distorts differential entropy features in that range, and - critically - may cause classifiers to learn the mains peak as a spurious feature rather than genuine neural activity. Carrle et al., 2023 note that even generative models can reproduce or fail to reproduce the 50 Hz line noise artefact, directly demonstrating that it is a persistent, recognisable feature of real recordings: “Figure 5 in the study of Bird et al. (2021) demonstrates highly smoothed versions of the 50 Hz line noise artifact as well as the absence of alpha peaks in the synthetic data produced with a GPT model.”

Our bandpass filter (0.5–40 Hz) already attenuates frequencies above 40 Hz, which would remove 60 Hz content. However, the rolloff of a 4th-order Butterworth is gradual, not brick-wall: at 60 Hz the attenuation is only ~24 dB. Powerline interference can be 10–100× stronger than neural signals, so partial attenuation may not suffice. The notch filter surgically removes a narrow band around exactly 60 Hz (and 120 Hz) with minimal disturbance to adjacent frequencies. Sobhani et al., 2025 suggest that “environmental artifacts may be avoided using a band filter since their frequency differs from the EEG signals of interest.” We apply both: notch first (surgical removal of the known contaminant), then bandpass (broader spectral shaping).

- Frequencies: 60 Hz (US mains fundamental) + 120 Hz (2nd harmonic). Higher harmonics (180, 240 Hz) fall well outside our 0.5–40 Hz bandpass and need not be explicitly notched.

- Quality factor Q=30: Produces a notch ~2 Hz wide at the −3 dB points. This is narrow enough to preserve neural gamma activity at 58 Hz and 62 Hz while fully attenuating the mains peak.

- Filter type: IIR notch (

scipy.signal.iirnotch) applied withfiltfiltfor zero-phase response, preserving the temporal alignment of seizure onsets.

scipy.signal.iirnotch(60, 30, 256) + scipy.signal.filtfilt, applied per channel. Repeated for 120 Hz.filtfilt)Raw EEG contains spectral content from DC (0 Hz) up to the Nyquist frequency (128 Hz at our 256 Hz sampling rate). Much of this is not neural. Below ~0.5 Hz, the signal is dominated by slow electrode drift - voltage changes caused by electrochemical reactions at the electrode-skin interface, sweat-related impedance fluctuations, and patient movement. Above ~40–70 Hz, the dominant source is electromyographic (EMG) contamination from scalp, facial, and neck muscles, which produces broadband high-frequency energy that can be orders of magnitude larger than neural gamma activity.

Sobhani et al., 2025 describe this directly: “The FIR filter in our context was made to allow signals between 0.3 and 70 Hz, which removes high-frequency muscular distortions and slow DC drifts while keeping the frequency range that is most important for clinical and cognitive EEG studies.”

You et al., 2025 warn unambiguously: “Without adequate preprocessing and filtering strategies, such artifacts may introduce substantial variability and degrade the reliability of feature extraction and classification.”

Their review also observes that despite claims of working with “raw” EEG, most deep learning models still depend on filtered data: “Although many deep learning models claim to work with raw EEG signals, in practice, they still heavily depend on preprocessed data, such as artifact removal and band-pass filtering, limiting their adaptability in unstructured environments.”

Without filtering, DC drift can saturate the input dynamic range of downstream models. EMG contamination above 40 Hz overwhelms neural gamma activity. Frequency-domain features (PSD, band power, differential entropy) become unreliable because they measure noise rather than brain activity. Classifiers trained on unfiltered data learn artefact patterns rather than neural patterns, producing results that do not generalise.

This range retains the five clinically relevant EEG bands (delta through low gamma) while excluding both DC drift and muscle contamination. The choice is well-supported by the SLR literature:

Our lower cutoff of 0.5 Hz (rather than 1 Hz as in Carrle et al., 2023) preserves the full delta band, which is relevant for seizure detection in paediatric patients where slow-wave abnormalities are common.

filtfilt, not FIRSobhani et al., 2025 advocate for FIR filters because of their “linear phase properties, which guarantee that the temporal structure of the EEG waveforms is maintained without introducing phase distortion.” This is valid for a causal (real-time) filter. However, we apply filtfilt (forward-backward filtering), which makes any IIR filter zero-phase - achieving the same temporal-structure preservation as FIR while requiring a much lower filter order (4th-order Butterworth vs. typically 100+ order FIR for a comparable transition band). Lower order means fewer edge artefacts and faster computation across 683 files × 23 channels.

scipy.signal.butter(N=4, Wn=[0.5, 40], btype='band', fs=256) + scipy.signal.filtfilt. Zero-phase; no temporal distortion.np.clip after filteringEven after notch and bandpass filtering, the signal may contain transient, high-amplitude artefacts: electrode pops (sudden impedance changes), large body movements, or electrical interference spikes. These events produce amplitudes far exceeding normal EEG (±100–200 µV) and even ictal activity (±300–500 µV). Leaving them unchecked distorts the variance and mean of the signal, corrupts normalization statistics, and forces downstream models to waste capacity modelling extreme outliers.

The literature presents two competing approaches to handling artefacted segments:

Clipping is a softer alternative that resolves this tension: it bounds the outlier without discarding the window. A brief electrode pop that produces a 2000 µV spike in 50 samples out of 1024 is clipped to ±800 µV, preserving the remaining 974 samples of genuine signal. The window stays in the training set, avoiding the data loss and exclusion bias that Dakshit et al., 2023 warns about.

The threshold is chosen to be well above physiological amplitudes while catching clearly non-neural outliers:

- Normal awake EEG: ±50–200 µV

- Ictal (seizure) activity: ±200–500 µV

- Our clipping threshold: ±800 µV - leaves a wide margin above seizure amplitudes

- Electrode pops/ movement artefacts: can reach ±2000–5000 µV

This means genuine neural signal (including large seizure discharges) is never clipped, while transient artefacts are bounded to a range where they cause minimal distortion to downstream statistics.

np.clip(signal, -800, 800) - applied per channel after filtering, before normalisation.EEG amplitude varies dramatically across subjects (due to skull thickness, electrode impedance, scalp conductivity), across channels (due to electrode placement and reference scheme), and across recording sessions. Without normalisation, a classifier may learn to distinguish patients by their absolute amplitude - a subject signature rather than a seizure signature. Carrle et al., 2023 and You et al., 2025 identify this as a core risk: models may unintentionally learn subject-dependent patterns instead of signal characteristics that transfer across individuals.

The literature uses both approaches, but for different reasons:

tanh output. They warn about a crucial subtlety: “For many (clinical) applications, the relative signal strength across electrodes is meaningful... These differences should therefore not be factored out by, e.g., normalizing the channels individually.”We use per-channel z-score (mean=0, std=1) because it standardises scale without bounding the range, which is important for preserving the relative amplitude of seizure events (which can be 3–5σ deviations - exactly the kind of outlier a classifier needs to detect). Min-max [−1, 1] would compress seizure spikes into the same range as baseline activity.

Normalisation parameters (mean, std per channel) are learned statistics. If computed on the full dataset (including validation and test subjects), they leak information about the test distribution into the training pipeline. All preprocessing steps that learn parameters must therefore be fit using training data only.

You et al., 2025 highlight this as a widespread problem: evaluation protocols are often underspecified, and leakage risk is high. Reported improvements can strongly depend on how data is split and how synthetic samples enter the pipeline. When subjects appear in both training and testing, or when preprocessing is fitted beyond the training set, results can look strong without reflecting true generalization.

You et al., 2025 confirm this as a known problem across the EEG generation literature, and the entire thesis evaluation framework (G2, RQ5) is built around preventing this type of leakage.

norm_params_{hash}.npz (keyed by training case list to prevent cross-fold leakage); applied as (x − μ)/ σ. Already implemented in data/loader.py.Clipping handles transient amplitude spikes, but two classes of unusable windows remain:

- Flat (zero-padded) channels. The homogenization step fills missing or removed channels with zeros to maintain the 23-channel matrix shape. A window where an entire channel is zero has no neural information in that channel and would bias the model toward learning that zero=normal.

- Saturated windows. If a large artefact pushed >25% of samples in a channel to the ±800 µV clip boundary, the window is more artefact than signal. Unlike a brief spike (clipped and contained), a sustained saturation means the true signal is irrecoverably lost for that window.

If any channel’s standard deviation < 0.01 µV across the entire window, the window is rejected. This catches zero-padded channels from homogenization and dead electrodes.

If >25% of samples in any channel are at the ±800 µV clip boundary, the window is rejected. This catches sustained artefacts where the true signal is irrecoverable.

Design Decision: No ICA-Based Artefact Removal

Independent Component Analysis decomposes multichannel EEG into statistically independent sources. Some components correspond to neural activity; others correspond to artefacts (eye blinks, muscle, cardiac). By identifying and removing artefact components and reconstructing the signal, ICA can dramatically improve signal-to-noise ratio.

Sobhani et al., 2025 provide a strong justification for ICA in their emotion-recognition pipeline: “By breaking down the EEG signals into statistically independent components, ICA makes it possible to identify and eliminate artifact-related components. ICA greatly increases the signal-to-noise ratio and strengthens the stability of features recovered using DWT.”

Carrle et al., 2023 use automated ICA via ICLabel, which classifies each component as brain, muscle, eye, heart, line noise, channel noise, or other - removing the need for manual inspection.

Despite its benefits, ICA is not appropriate for every setting. We deliberately omit it for four reasons, each grounded in the literature or the experimental protocol:

ICA computes a mixing matrix from the full recording. If this decomposition is fitted on data that includes test subjects (even indirectly, through shared statistics), it leaks information about the test distribution into training. Since all preprocessing steps that learn parameters must be fit using training data only, running ICA per-patient per-fold would be required - adding significant computational overhead (683 files × 23 LOPO folds) for marginal benefit on long-term monitoring data.

The patients were paediatric epilepsy cases undergoing long-term video-EEG monitoring in a hospital setting, often sedated or sleeping. Eye-blink artefacts (the primary target of ICA) and voluntary muscle artefacts are far less prevalent than in awake BCI or emotion-recognition paradigms. Zhao et al., 2022 and Chaibi et al., 2024 work with intracranial EEG and do not use ICA at all, relying on simpler filtering and expert segment selection.

The core thesis question is whether synthetic augmentation helps on unseen subjects. If the generator is trained on aggressively cleaned data, it synthesises signals that lack the noise characteristics of real EEG. When these synthetic samples are mixed with real (uncleaned) data for downstream evaluation, the distribution mismatch can reduce robustness rather than improve it. You et al., 2025 observe that models operating in real-world conditions must handle “high noise, dynamic variability, and low signal-to-noise ratios” - over-cleaning the training data undermines this goal.

CHB-MIT has 23 channels after homogenization. While this is above the minimum for ICA (Sobhani et al., 2025 use 8 channels), reliable component separation improves with channel count. They set n_components = 8 (equal to their channel count), and their success is partly due to the targeted 8-channel montage. With 23 channels across the full scalp, the number of mixed sources is larger and component identification is less clearcut, especially for automated methods without manual verification.

EEG Frequency Bands Retained (0.5–40 Hz)

The bandpass filter preserves five clinically relevant frequency bands. Seizure activity in scalp EEG is concentrated in theta and alpha ranges, with ictal rhythmic discharges typically manifesting as evolving theta (3–7 Hz) activity.

Design Decisions

Every parameter is either grounded in the literature (indicated by inline citations) or is an implementation decision where the literature does not prescribe a specific value (indicated as project-specific in the justification).

Framework & Tooling

filtfilt for zero-phase filtering, Welch PSD estimation. Standard DSP tools with no reason to reimplement.Data Caching & Memory Management

The dataset after preprocessing is ~43 GB. Pre-computing all overlapping windows would nearly double that (each sample appears in two windows with 50% overlap), exceeding both the 32 GB RAM and available disk. The approach below isn't just an optimisation - it's the only way to run experiments on this hardware at all.

| Decision | Choice | Justification |

|---|---|---|

| Cache format | Flat preprocessed signals, not pre-windowed arrays | With 50% overlap, each sample appears in two windows, inflating ~43 GB to 64–74 GB. Storing the flat signal instead and computing windows on the fly via signal[:, start:start+1024] eliminates this duplication. Total cache: ~35–40 GB (∼40% less). |

| Signal cache format | <case>_signals.npy + <case>_index.npz |

Per case: one uncompressed .npy file (23 × N samples, float16) for memory mapping, plus a small compressed .npz index (~100 KB) with precomputed valid window start positions, labels, patient ID, and QC rejection count. The signal files use .npy (not compressed .npz) because numpy’s mmap_mode='r' requires an uncompressed format - compressed archives must be fully decompressed into RAM before access, defeating the purpose of memory mapping. The index files are small enough (~100 KB) to load entirely, so they use compressed .npz to save disk space. |

| Synthetic window storage | Compressed .npz files |

Generator outputs (E3–E5) are saved as synthetic_ratio_<r>.npz containing windows, labels, and patient IDs. Unlike signal caches, synthetic windows are small (~4–5K windows per seed) and loaded fully into RAM at training time - no memory mapping needed. Compressed .npz is used for reproducibility (the exact same windows are reused across detector training runs) and auditability (files can be inspected in notebooks for quality checks). |

| Memory strategy | Memory-mapped I/O (mmap_mode='r') |

Cannot load 35–40 GB into 32 GB RAM. Memory mapping lets the OS page cache manage which signal pages are resident. Python heap stays under ~10 MB (index arrays only). Each __getitem__ reads ~46 KB from disk via the page cache. |

| Numeric precision | float16 on disk, float32 at access time | Halves disk and mmap footprint. EEG values after clipping are in [−800, +800] µV - well within float16 range (up to 2048.0 exact). Quantization error (~0.01–0.1 µV) is below the EEG noise floor (~1–5 µV). Cast to float32 in __getitem__ before normalization and training. |

| QC timing | Precomputed at cache-build time | QC costs ~303 µs/window. With ~1.4M windows × ~10 epochs, on-the-fly QC would add ~70 min/experiment. Precomputing during the one-time cache build amortizes this entirely. QC runs on preprocessed signals before normalization (thresholds are in µV). |

| Normalization timing | On the fly in __getitem__ |

Normalization params depend on the training set of each LOPO fold. Pre-normalizing would require 23 copies of the cache. Instead, caches store raw µV values and normalization is applied per window (~37 µs overhead). Total per-window cost: ~60–70 µs - negligible vs ~1–2 ms GPU compute per batch element. |

| Cross-file windows | Prevented (windows respect file boundaries) | The flat signal concatenates multiple EDF files per case. Windows are enumerated within each file independently, so no window spans a recording boundary (which could be hours or days apart). Data loss: at most 1023 samples (~4s) per file. |

| Batch sampling | Case-aware (grouped by patient) | With 24 memory-mapped files, pure random shuffling thrashes the OS page cache (each case is 1–4 GB). CaseAwareSampler groups windows by case within each epoch - case order and within-case order are both shuffled, so every window is seen once per epoch in a valid permutation, but consecutive batches hit the same mmap file. This maximizes page cache hits without affecting training dynamics. |

| Mmap cache size | LRU, max 4 open files | Keeping all 24 mmaps open wastes page table entries under memory pressure. An LRU cache of 4 open files provides headroom for batch boundaries while keeping the virtual memory footprint bounded. Combined with case-aware sampling, the active case is nearly always cached. |

Hardware context: Target machine has 32 GB RAM, ~98 GB disk (shared with OS and Python env), and an RTX 3080 Ti (12 GB VRAM). A naive pre-windowed loader consumed ~125 GB RAM and was OOM-killed on first training attempt. The flat-signal approach reduces disk to ~35–40 GB and RAM to ~10 MB.

Generator & oversampling memory: CVAE and LDM training keep data tensors on CPU and move per-batch to GPU (12 GB VRAM cannot hold the full ictal set + model + optimizer). SMOTE/ADASYN subsample interictal windows to 5× ictal count before oversampling (reduced from 10× after OOM failures in LOPO) - without this, the flattened feature matrix would exceed available RAM.

Preprocessing Parameters

Canonical definitions and literature backing: Notch filter, Bandpass filter, Amplitude clipping, Normalization.

| Parameter | Value | Justification |

|---|---|---|

| Notch filter | 60 Hz + 120 Hz, Q=30 | US mains frequency - CHB-MIT recorded at Boston Children's Hospital. Q=30 gives ~2 Hz notch width, narrow enough to remove interference without eating into the EEG signal. Standard across EEG pipelines (You et al., 2025). |

| Bandpass | 0.5–40 Hz | Carrle et al., 2023 used 1–40 Hz; lower cutoff reduced to 0.5 Hz to preserve full delta band, relevant for pediatric seizure detection where slow-wave abnormalities are common. No useful neural signal above 40 Hz in scalp EEG after filtering. |

| Filter type | 4th-order Butterworth, zero-phase (filtfilt) |

Sobhani et al., 2025 advocates FIR for linear phase, but filtfilt makes IIR zero-phase at lower computational cost. Tradeoff documented in thesis Section 4.3. Zero-phase is essential to avoid shifting seizure onset timing. |

| Amplitude clipping | ±800 µV | Normal EEG: ±50–200 µV. Ictal: ±200–500 µV. Electrode pops: ±2000+ µV. 800 keeps seizures intact while bounding artifacts. Softer than outright rejection - avoids exclusion bias (Dakshit et al., 2023). |

| Normalization | Per-channel z-score (train-only) | Z-score is the most common normalization for EEG classification pipelines (You et al., 2025). Parameters hash-keyed per LOPO fold to prevent leakage - thesis Section 1.4 mandates this. |

Windowing Parameters

See also: Window QC criteria and frequency bands retained.

| Parameter | Value | Justification |

|---|---|---|

| Window size | 4s = 1024 samples | Carrle et al., 2023. Zhao et al., 2022 used 10s, but 4s gives more windows (important for class balance). Long enough to capture seizure rhythmic patterns (2–10s evolution). |

| Overlap | 50% (512-sample step) | Prevents seizures on window boundaries from being missed. 50% is a standard tradeoff between coverage and redundancy in EEG sliding-window analysis. |

| Ictal threshold | ≥50% of window in seizure | Standard in the literature (Carrle et al., 2023; Zhao et al., 2022). Avoids ambiguous windows with tiny seizure fragments. |

| QC: flat channel | std < 0.01 µV | Catches zero-padded channels from homogenization and dead electrodes. Zhao et al., 2022 had clinical experts select artefact-free segments; this automates that for scalability. |

| QC: excessive clipping | >25% at ±800 µV boundary | If more than a quarter of a channel sits at the clip boundary, the window is dominated by artifact, not brain signal. Carrle et al., 2023 rejects windows with extreme amplitude stats. |

Generative Models for EEG Synthesis

A literature-grounded comparison of GAN, VAE, and Diffusion model families for synthetic ictal EEG generation. RQ1

Motivation: Why Generative Models?

In the CHB-MIT dataset, seizure windows make up roughly 0.4% of total signal time. Classical resampling (e.g. SMOTE) just interpolates between existing samples in feature space - it can't reproduce the temporal structure or spectral patterns that make real seizures look like real seizures. Deep generative models actually learn the underlying data distribution and can produce entirely new ictal segments that preserve the multi-channel dynamics, giving the detector more realistic examples to learn from.

TimeGAN adds a supervised loss that forces the generator to respect step-wise temporal dynamics - meaning each generated time point has to follow plausibly from the previous one, not just look reasonable in isolation. This solves the main weakness of vanilla GANs on time-series. It jointly trains five sub-networks (embedder, recovery, generator, discriminator, supervisor) in a shared latent space, making it the strongest GAN-family baseline for multi-channel EEG synthesis.

See Design Decisions for full parameter justifications.

A Conditional VAE (CVAE) extends the standard VAE by conditioning on a label (patient ID + class) so it can generate seizures for a specific patient on demand. The encoder compresses each 23x1024 window down to a small 128-dimensional latent vector, and the decoder rebuilds the full signal from that compressed representation. Both use 1-D convolutional layers. KL annealing prevents posterior collapse (where the model ignores the latent code and produces near-identical outputs).

Encoder/decoder reused by the LDM (E5). See Design Decisions for full parameter justifications.

Why 1-D Conv here, when TimeGAN uses GRU? The two generators have different design goals. TimeGAN uses GRU because its architecture explicitly models step-wise temporal transitions (the supervisor network enforces autoregressive dynamics). The CVAE has no such requirement - it encodes/decodes a fixed-length window in one pass. For that task, convolutional layers are faster to train, fully parallelisable, and more stable than recurrent alternatives. Carrle et al., 2023 note that CNNs are “well suited for processing biological data” due to their hierarchical structure. The tradeoff: GRU captures sequential dynamics naturally but is slow and prone to mode collapse in adversarial training; 1-D Conv captures spatial/spectral patterns efficiently but relies on the latent space (not architecture) for temporal coherence.

A Latent Diffusion Model takes the CVAE's compressed representation (the latent space) and runs the DDPM diffusion process there instead of on the full 23,552-dimensional signal. This is massively faster - denoising a 128-value latent vector is much cheaper than denoising the raw signal. It reuses the same encoder/decoder as the CVAE, so if the two models produce different quality outputs, that difference can only come from how they generate in latent space (direct sampling vs iterative denoising), not from a better encoder. The denoiser is a 1-D UNet with additive embeddings for time-step, class, and patient conditioning.

Operates in the CVAE latent space - same encoder/decoder as E4, so quality differences isolate the generation method. See Design Decisions for full parameter justifications.

Why LDM over plain DDPM? Full-resolution DDPM on 256 Hz × 23-channel windows is prohibitively slow at inference. The shared VAE latent space also enables reuse of the encoder/decoder across the CVAE and LDM, keeping the comparison controlled.

Head-to-Head Comparison

Qualitative pre-experiment assessments based on published findings in the reviewed literature, not empirical results from this thesis. Each criterion is assessed specifically in the context of multi-channel ictal EEG synthesis. Legend below explains what each criterion measures.

| Criterion (see legend) | TimeGAN | CVAE | LDM |

|---|---|---|---|

| Sample quality | High | Moderate | Very High |

| Sample diversity | Variable | Moderate | High |

| Training stability | Unstable | Stable | Stable |

| Inference speed | Fast | Fast | Slow |

| Temporal coherence | High | Moderate | High |

| Patient conditioning | Yes | Yes (label) | Yes (guided) |

| EEG literature depth | Most mature | Moderate | Growing fast |

| Mode collapse risk | High | Low | Low |

All models are evaluated using the evaluation strategy defined in the methodology.

Model Suitability Radar

Qualitative scores (1-10) for suitability to ictal EEG synthesis, derived from the literature reviewed in this thesis. These are not empirical benchmarks - they are pre-experiment assessments based on published findings, used to motivate the choice of all three models (each excels in different axes).

How scores were assigned

Sample quality & diversity: Yoon et al., 2019 show TimeGAN outperforms standard GANs on discriminative and predictive scores. Diffusion models consistently produce higher-fidelity samples than GANs/VAEs across domains (You et al., 2025). VAEs trade sharpness for stable training (Carrle et al., 2023).

Training stability: GAN adversarial training is inherently unstable (Boukhennoufa et al., 2023 address mode collapse explicitly). VAEs and diffusion use fixed loss objectives - no adversarial balancing required.

Inference speed: GANs and VAEs generate in a single forward pass. Diffusion requires iterative denoising (50-1000 steps), making it orders of magnitude slower at inference (Rouzrokh et al., 2025).

Temporal coherence: TimeGAN's supervised loss explicitly enforces step-wise temporal dynamics (Yoon et al., 2019). LDM achieves temporal coherence through iterative refinement. CVAE relies on the latent space alone, with no explicit temporal objective.

Literature depth: TimeGAN (2019) has the most EEG-specific applications. Diffusion for EEG is newer but growing rapidly (You et al., 2025; Silva et al., 2025). CVAE for EEG has moderate coverage (Pezoulas et al., 2024).

Selection Principles

The three generators form a deliberate progression from established to novel, each representing a distinct generative paradigm identified in the SLR corpus (26 papers: 17 primary studies, 9 reviews).

Excluded models and rationale

The SLR corpus contains several EEG-specific generative models. We exclude them for principled reasons, not oversight:

| Model | Source | Why excluded |

|---|---|---|

| WGAN-GP | You et al., 2025 Table 8 (Wei 2019, CHB-MIT) | TimeGAN extends the GAN paradigm with a supervised temporal embedding loss and autoencoder reconstruction loss specifically for time-series. WGAN-GP uses MLP/CNN architectures that don't model temporal dependencies explicitly - making TimeGAN the more appropriate GAN representative for sequential EEG data. Testing WGAN-GP alongside TimeGAN would compare two GAN variants rather than two generative paradigms, which is not our research question. |

| DCGAN/ DCWGAN | You et al., 2025 Table 8 (Rasheed 2021, Xu 2022, CHB-MIT) | Trains independent per-channel generators then stitches outputs together - cannot model cross-channel correlations by design. Seyfi et al., 2022 showed that preserving inter-channel correlations is essential for realistic multi-channel EEG. Our generators operate on the full 23-channel window jointly. |

| EpilepsyGAN (cWGAN) | You et al., 2025 Table 8 (Pascual 2021) | An interictal-to-ictal translation model (U-Net autoencoder conditioned on interictal input). Our setup generates from noise - unconditional/class-conditional, not input-output translation. Different generative task altogether. |

| DiffEEG | You et al., 2025 Section 7 (Shu, CHB-MIT) | A DDPM trained directly on EEG signals for seizure prediction augmentation. Our LDM (E5) implements the same diffusion paradigm but in a compressed latent space (128 dims vs raw signal), following the hybrid VAE+diffusion approach that You et al., 2025 explicitly recommend over raw-signal diffusion for "improving computational efficiency." Additionally, DiffEEG targets prediction (preictal) while we target detection (ictal). |

| CR-VAE | You et al., 2025 Section 5.1.4 (Li, intracranial EEG) | Recurrent multi-head decoder designed for intracranial EEG (very different signal characteristics from scalp EEG). Uses causal structure learning which requires longer recordings than our 4-second windows. Our CVAE uses 1D-Conv which is better suited to fixed-length windows at 256 Hz. |

| COSCI-GAN | Seyfi et al., 2022 | Explicitly preserves cross-channel correlations via a decomposition into shared + channel-specific components. An EEG-specific inductive bias that would confound our comparison: if COSCI-GAN outperforms TimeGAN, is it because the decomposition architecture is better, or because it was given extra prior knowledge about channel structure? We instead evaluate cross-channel preservation as a post-hoc metric across all three general-purpose generators, measuring the outcome without hard-coding the mechanism. |

| SynSigGAN | You et al., 2025 (Hazra) | BiGridLSTM generator for privacy-preserving EEG synthesis (Siena Scalp EEG, not epilepsy). Evaluated exclusively with paired metrics (PCC=0.997, RMSE, MAE, PRD) measuring similarity to specific real signals - a PCC of 0.997 suggests near-copying rather than diverse generation. Single-signal architecture that doesn't extend to 23-channel multivariate windows without substantial modification. |

| GPT/ Transformer-based | Carrle et al., 2023 (Bird 2021, Niu 2021) | Autoregressive generation (predict next sample given all previous). Our 4-second windows at 256 Hz = 1024 samples per channel x 23 channels = 23,552-length sequence. Self-attention is O(n2) in sequence length - computationally prohibitive at this scale without significant architectural shortcuts that would compromise the comparison. Only 2/27 studies in Carrle et al., 2023 used GPT for EEG generation, vs 24 using GAN - minimal established practice. |

Overarching principle: we select general-purpose architectures (GAN, VAE, diffusion) rather than EEG-specific variants because: (1) our research questions ask whether generator families differ in utility/fidelity, not whether a hand-tuned EEG architecture beats a generic one; (2) general architectures are reproducible without domain-specific priors (correlation decompositions, causal graphs, TFR transforms); (3) a fair comparison requires models at comparable complexity - adding EEG-specific inductive biases would confound the architecture comparison with prior-knowledge advantage.

Architecture Design Decisions

Full parameter justifications for each architecture. Every parameter is either literature-grounded or justified as project-specific.

Detector (1D-CNN)

Intentionally lightweight - the detector is a controlled variable, not the research question. Carrle et al., 2023 used a fixed classifier across all augmentation conditions for the same reason: performance differences can only come from the training data, not the model. See Gen. Models for how the detector interfaces with the three generators.

Why a 1D-CNN instead of a recurrent network? Recurrent neural networks (RNNs) and their gated variants (LSTM, GRU) are a natural candidate for time-series data, but CNNs have become the dominant choice for fixed-window EEG classification. In a review of 90 DL-for-EEG studies, Craik et al., 2019 found CNNs were the dominant architecture (43% of studies vs 10% for RNNs), with seizure detection “essentially split between using either CNN’s or RNN’s” and both achieving near-perfect accuracy on shared datasets. Cho & Jang, 2020 directly compared CNN, RNN, and FCNN on 5-second EEG windows for seizure detection and found CNN outperformed RNN across all input modalities (AUC 0.989–0.993 vs 0.985–0.989), concluding that “CNN can be the most suitable network structure for automated seizure detection” because it “can effectively learn a general spatially-invariant representation of seizure patterns.” They also noted that RNNs benefit from longer sequences, whereas CNNs excel on shorter, fixed-length inputs. In seizure detection, recordings are routinely segmented into short fixed-length windows (this thesis uses 4 s/ 1024 samples) because the classification task is window-level: each segment is independently labelled ictal or interictal. Full recordings can span hours, but the clinically relevant patterns - spike-and-wave complexes, rhythmic discharges, amplitude changes - are local events that unfold within seconds, making short windows both a standard practice in the literature and a natural fit for convolutional filters that detect local patterns regardless of position (sliding-window segmentation). For this thesis, the choice is further motivated by practical constraints: a 49K-parameter 1D-CNN is tractable for 23 LOPO folds × 3 seeds, and convolutions over these fixed-length windows are fully parallelisable on GPU, unlike the sequential step-by-step computation required by RNNs.

| Parameter | Value | Justification |

|---|---|---|

| Architecture | 3 Conv1d blocks + 2 Linear |

49K params. Small enough to train quickly across 23 LOPO folds × 3 seeds but deep enough to learn temporal EEG patterns. No attention or recurrence - keeps it simple to isolate the augmentation effect. |

| Kernel sizes | k=7, k=5, k=3 (stride=2) | First layer k=7 (~27ms at 256 Hz) captures slightly longer temporal patterns. Sizes shrink as receptive field grows with depth. Stride=2 halves spatial dim each layer (1024 → 512 → 256 → 128 → pool to 1). |

| Optimizer | Adam, lr=1e-3 | Default optimizer in deep learning EEG literature. 1e-3 is the standard starting learning rate for Adam. |

| Loss | Class-weighted cross-entropy | Weights inversely proportional to class frequency. Zhao et al., 2022 found class-weighted loss competitive with data augmentation in some cases. |

| Early stopping | Validation AUPRC, patience=10, max 100 epochs | Monitors the primary metric (AUPRC) rather than loss, ensuring model selection optimises what we actually care about. Patience=10 balances convergence time against overfitting. |

| Batch size | 64 | Balance between gradient noise (too small) and memory (too large). Standard for EEG classification. |

Dropout |

0.3 (conv), 0.5 (FC head) | Prevents overfitting with heavy class imbalance. Higher dropout in the FC head because it has fewer parameters and is more prone to memorization. |

TimeGAN (Yoon et al., 2019)

Architecture overview and literature context: TimeGAN model card.

| Parameter | Value | Justification |

|---|---|---|

| Input reshape | (23, 1024) → (64, 368) | GRUs struggle with 1024-step sequences (vanishing gradients, slow). 64 timesteps of 16-sample segments at 256 Hz = 62.5ms - fine-grained enough for EEG temporal patterns. Standard in TimeGAN implementations. |

| Hidden dim | 128 | Matches detector embedding size and CVAE latent dim. Keeps generator proportional to detector. |

GRU layers |

3 (main), 2 (supervisor) | Follows original Yoon et al., 2019. Supervisor is simpler because it only predicts one step ahead. |

| Phase 3 loss weights | 10× for supervised, moment, reconstruction | From Yoon et al., 2019. Adversarial loss is volatile; stabilizing losses need higher weight to prevent the generator from chasing discriminator noise. |

| Epochs per phase | 600 | Starting point. Yoon et al., 2019 train until convergence without specifying a number. Adjustable based on loss curves. |

Conditional VAE

Architecture overview and literature context: CVAE model card.

| Parameter | Value | Justification |

|---|---|---|

| Latent dim | 128 | Matches detector embedding size. Large enough for 23-channel EEG variability, small enough to train without huge datasets. Common choice in VAE-based signal generation. |

| Conditioning | Class label: extra channel (enc) + concat to latent (dec) | Standard CVAE approach. Simpler than FiLM or cross-attention, with no proven benefit from more complex conditioning for scalar labels. |

| Beta warmup | 0 → 1 over 50 epochs | KL annealing prevents posterior collapse (see ELBO). The 50-epoch ramp is a project choice - adjustable if KL collapses or reconstructions are blurry. |

| Activation | LeakyReLU(0.2) |

Standard for generators/autoencoders. Regular ReLU can cause dead neurons in decoders where gradients flow backward through many layers. |

| Encoder | 4 Conv1d layers (stride-2) + pool |

Minimum depth to compress 1024 → 1 spatially. Channel counts double each layer (standard conv autoencoder practice). Encoder is reused by the LDM. |

| Training epochs | 500 | No early stopping for the generator - epoch count is the primary convergence control. 500 with 50-epoch KL annealing gives 450 epochs at full regularisation. Loss continues declining gradually through the full run (recon loss drops ~20% between epoch 200 and 500). |

| Learning rate | 5e-4 (Adam, eps=1e-7) + 5-epoch linear warmup from 5e-5 | Reduced from 1e-3 after epoch-1 NaN divergence. The warmup ramps LR from lr/10 to lr over 5 epochs, protecting the critical first steps when random weights + random batch ordering can overflow the decoder. ReduceLROnPlateau (patience=10, factor=0.5, min_lr=1e-5) activates after warmup completes. |

| Numerical stability | log_var clamp [−20, 20] + grad clip (1.0) + NaN guards |

Without clamping, exp(0.5 × log_var) in the reparameterization trick can overflow to inf for certain initializations, cascading to NaN across all weights. Gradient clipping prevents a single bad batch from making outsized weight updates. Training raises immediately on NaN/Inf loss; generation refuses to run if model weights contain NaN. All are standard VAE practice. |

Latent Diffusion Model

Architecture overview and literature context: LDM model card.

| Parameter | Value | Justification |

|---|---|---|

| Noise schedule | Cosine, T=1000 | Cosine schedule works better than linear for small data; T=1000 is the standard DDPM default (see glossary for references). |

| Latent reshape | 128 → (16, 8) | UNet needs a spatial dimension. 16 channels × 8 positions is the most natural factoring giving the UNet enough spatial extent for 3 down/up blocks. |

| UNet depth | 3 blocks (64 → 128 → 256) | 3 levels on length-8 gives lengths 4 → 2 → 1 at bottleneck. Cannot go deeper. Base channels=64 is moderate capacity. |

| DDIM steps | 50 | 50–100 DDIM steps approximate 1000 DDPM steps with minimal quality loss. |

| Conditioning | Additive embedding (time + class + patient) | Cross-attention (the standard LDM approach) is designed for complex conditioning like text sequences. For scalar labels, additive embedding is standard in DDPM and much cheaper. Deliberate departure, documented. |

| Learning rate | 1e-4 (AdamW) | Lower than detector/CVAE. Diffusion models are sensitive to lr; 1e-4 is the standard DDPM learning rate. |

| CVAE encoder | Frozen (always) | Standard LDM design. Fine-tuning the encoder during diffusion training would destabilize the decoder, since the decoder was trained on a specific latent distribution. |

| Training epochs | 500 | Trains only the UNet denoiser (encoder/decoder are frozen from E4). No early stopping - epoch count is the primary convergence control. |

| Numerical stability | ReduceLROnPlateau + NaN guard |

Same scheduler approach as CVAE (patience=10, factor=0.5, min_lr=1e-6). Training raises immediately on NaN/Inf loss. Applied proactively since the LDM shares the same µV-scale data pipeline. |

Evaluation & Experimental Protocol

All three generators are evaluated identically: AUPRC as primary metric, 2 synthetic ratios (50/ 100%), 3 seeds, LOPO cross-validation, Wilcoxon signed-rank for significance. Fidelity is assessed via PSD + KL per band and C2ST; utility via TSTR. The detector is frozen across E1-E5 so any performance change is attributable to the data alone. Ratios limited to 50% and 100% based on Carrle et al., 2023 finding that gains plateau or reverse beyond 100%.

Full metric rationale, excluded metrics, and literature justifications: Methodology - Evaluation Strategy.

Roadmap & Experiment Plan

Experiment plan. Seven experiments mapped to the thesis research questions, running 30 Mar – 26 Jul 2026. Sequential single-machine execution: TimeGAN first (independent), then CVAE, then LDM (reuses CVAE encoder).

Experiment Plan Overview

The plan evaluates synthetic EEG data from three generator families (TimeGAN, CVAE, LDM). Each generator's output is first evaluated directly as data (spectral, temporal, spatial fidelity) and then tested for downstream utility via a frozen detector on unseen patients. The object of study is the generated data, not the classifier.

All experiments follow the evaluation strategy. Additionally:

Training Pipeline (E3-E5)

Each generator experiment has two sequential phases. The generator and detector are trained independently - the generator's only role is to produce synthetic data for the detector to learn from.

Under LOPO, both phases repeat for every fold (23 folds, one patient held out per fold) and every seed (3 seeds). In each fold, the generator only learns from that fold's training patients, and the detector is evaluated on the held-out patient. This means the generator never sees data from the test patient - preventing leakage at both the generation and detection stages.

.npz file per ratio)The ratio controls how many synthetic windows are added to the training set - it does not replace real data. A ratio of 100% means "generate as many synthetic seizures as there are real ones," so the detector ends up with both. For example, if a fold has 200 real seizure windows and the ratio is 100%, the AUGM detector trains on 200 real + 200 synthetic = 400 seizure windows total. At 200%, that becomes 200 real + 400 synthetic = 600.

Both AUGM and TSTR follow the full LOPO structure: 23 folds x 3 seeds, with one patient held out per fold. The AUGM runs happen first (all folds/seeds at both ratios), then TSTR runs as a separate pass over the same 23 folds x 3 seeds, reusing the saved 100% files. In total, each fold produces 3 detector results (2 AUGM + 1 TSTR), giving 207 detector training runs per generator experiment. Comparing TSTR to AUGM at 100% isolates the contribution of synthetic data: both use the same synthetic windows, but AUGM also has real seizures in the mix. If TSTR performance approaches AUGM at 100%, the generator has captured seizure-discriminative structure rather than just acting as a regularizer.

Why These Three Generators?

Each experiment fills a specific gap identified in the SLR corpus. For the full selection rationale, excluded models, and design principles, see the Models page.

You et al., 2025 (Table 8) shows all prior CHB-MIT augmentation used WGAN-GP or DCGAN. TimeGAN (Yoon et al., 2019) extends the GAN paradigm with a supervised temporal embedding loss - the GAN-family representative. Corpus: GAN in 18/26 papers; TimeGAN in 4/26.

Carrle et al., 2023 found that "VAE were only used for comparison... and always performed worse" - but those were unconditional MLPs. Our CVAE uses 1D-Conv, class conditioning, and a 128-dim latent space. Tests whether architecture and conditioning change the outcome. Encoder/decoder shared with E5. Corpus: CVAE in 4/26 papers.

You et al., 2025 (Section 8) recommend "combining VAE and diffusion models to perform diffusion in a low-dimensional space." Our LDM implements exactly this: DDPM denoising in the CVAE's 128-dim latent space (encode, denoise, decode) rather than on the raw 23x1024 signal. Corpus: latent diffusion in 3/26 (0 primary EEG studies). First application to epilepsy EEG.

Problems to Solve

Seizure windows are <0.4% of total signal time. Standard classifiers predict majority class almost exclusively. Without mitigation, no meaningful detection model can be built.

Seizure EEG morphology varies substantially between patients. Models trained on one patient generalise poorly to others. The chb01/21 longitudinal pair offers a rare opportunity to study how the same patient's seizure morphology evolves over time.

EEG is non-stationary at multiple time-scales: within a recording, across sessions, and across the montage changes seen in 10 patients. Generative models must capture temporal dynamics, not just spectral distributions.

Seizure activity spreads across the brain, showing up as coordinated patterns across the 23 channels. A generative model can't just make each channel look right individually - it has to preserve the relationships between channels too.

If patient identity is shared between train/test sets, evaluation becomes optimistic. With 24 cases and chb01/chb21 being the same subject, any patient-level cross-validation split must keep both cases together. Synthetic data generated from chb01 must not appear in a test set that also evaluates chb21.

There's no single number that tells you if synthetic EEG is "good enough." Matching the power spectrum is necessary but not enough - the temporal dynamics and spatial patterns also need to be realistic. The most meaningful test: can a detector trained on only synthetic data still detect real seizures (TSTR)?

Planned Experiments

Seven experiments mapped to the thesis research questions.

A fixed 1D-CNN seizure detector trained on real data with class-weighted loss. No augmentation. This is the A0 anchor - every other experiment is compared to this.

The bar that generators must clear. If cheap interpolation already solves the imbalance problem, there's no reason to train expensive generative models. Both methods flatten each 23x1024 window into a 23,552-value vector and interpolate sample-by-sample - destroying temporal coherence and spectral structure in the process. This is exactly why E3-E5 exist.

First generative model. TimeGAN (Yoon et al., 2019) models temporal dynamics via supervised embedding-recovery. Three-stage evaluation per fold: (1) generate synthetic ictal windows, (2) assess data fidelity directly (spectral, temporal, spatial), (3) measure downstream utility via the frozen detector.

Second generative model. Conditional VAE with 1D-Conv encoder/decoder, 128-dim latent space. Same three-stage evaluation: generate, assess fidelity, measure utility. The encoder/decoder is shared with E5 (LDM) so any quality difference between them can only come from the generation method (direct sampling vs iterative denoising).

Third generative model. Runs the diffusion process in the CVAE's compressed latent space - massively faster than raw-signal diffusion. Same three-stage evaluation. Reuses the CVAE encoder/decoder from E4, isolating the generation method. Best single-split results (+29% AUPRC over baseline, lowest cross-seed variance).

No new training - synthesizes E1–E5 results. Utility ranking with Wilcoxon signed-rank tests. Ratio sensitivity curves. Per-patient impact analysis (who benefits, who doesn’t). Fidelity ranking: KL divergence on PSD, discriminative score, TSTR performance. Cost-benefit (GPU-hours per generator).

A detector might get good scores not because it learned what seizures look like, but because it learned to recognise which patient is which. A linear probe on the detector's post-pooling embeddings tests how easily patient identity can be read. Run on the E1 detector (real only) and E3-E5 detectors (augmented) - does augmentation make patient identity easier or harder to infer? Complemented by a proximity check: k-NN distance from synthetic to real samples in embedding space detects sample-level memorisation (individual window copying rather than subject-level patterns).

Temporal Planning

Detailed week-by-week plan from current position to thesis submission. Each phase has concrete deliverables and decision points.

Phase Overview (30 Mar – 26 Jul 2026, updated 6 May with measured timings)

Sequential execution on a single machine (RTX 3080 Ti 12GB). All single-split experiments (E1-E5) complete. Full LOPO relaunched 5 May 2026 after OOM/disk-full fixes (E2 SMOTE reduced interictal subsample 10x→5x; E3-E5 now generate+train per fold to fit in 98 GB disk). E1 complete (4 May), E2-E5 running. Measured detector time: ~90 min/fold (mean per seed). Generator times (measured in single-split, estimated ~1.2x in LOPO due to larger training set): TimeGAN ~20 min/fold, CVAE ~50 min/fold, LDM ~68 min/fold. Total LOPO estimate: 5–6 weeks (completing mid Jun 2026). Thesis writing ongoing since Jan 2026 (midterm submitted 31 Jan).

Does augmentation reduce or amplify subject-specific patterns? Uses a linear probe on the frozen detector’s embeddings.

Broader Future Directions

🔭 Foundation/ Large-Scale Models

Pre-training a large generative model on all CHB-MIT patients and fine-tuning patient-specifically. Potentially extending to other EEG corpora (TUEG, Siena) to improve generalisability.

👀 Explainability (XAI)

Using SHAP, LIME, or gradient-based attribution on the downstream classifier to verify that decision-relevant features in synthetic samples align with known ictal EEG biomarkers (theta/ gamma bursts, spike-wave morphology).

🔑 Privacy-Preserving Synthesis

EEG has been shown to contain biometric identifiers. Investigating whether generative models can produce patient-anonymous synthetic data while retaining clinical utility (differential privacy, membership inference tests).

📈 Seizure Prediction

Extending from detection (ictal vs. interictal) to prediction (pre-ictal identification). Synthetic pre-ictal EEG generation would provide augmentation for the rarer pre-ictal class and could feed a prediction pipeline.

Current Status

Implementation progress. Last updated: 8 May 2026. See Results for experimental data and Roadmap for the full experiment plan.

What's Done

What's Left

Full LOPO evaluation in progress

23 folds × 3 seeds = 69 runs per experiment per ratio. E1 complete (AUPRC 0.394 ± 0.023); E2-E5 running (estimated mid Jun 2026). See Results for all experimental data.

Decisions Made During Experiments

Design decisions driven by constraints encountered during the LOPO evaluation.

The CVAE's reparameterization step requires exp(log_var), which is numerically unbounded and can diverge across seeds with different initialization. Standard VAE stability measures applied: log_var clamping at [-20, 20], gradient clipping (max_norm=1.0), learning rate 5e-4 with 5-epoch linear warmup. These are well-established practices for VAE training, and the 3-seed protocol confirmed consistent convergence.

A single workstation (32 GB RAM, 98 GB disk) running 23 LOPO folds imposes two constraints: (1) SMOTE/ ADASYN interictal subsampling is capped at 5x the minority count per fold (higher ratios exceed available RAM when materialized); (2) generators process one fold at a time (generate, train, discard synthetic data) rather than pre-generating all folds, to stay within disk budget. Neither constraint affects the algorithms themselves - SMOTE still operates on full minority k-NN, and generators train on the same data per fold.

Pure TSTR (all synthetic training data, as in Bing et al., 2022) is infeasible: our generators only produce ictal windows, and matching the real interictal training set size (~1.28M windows per fold) exceeds disk. Since the generators are single-class (seizure only), TSTR naturally scopes to the class they produce: synthetic ictal + real interictal, no real ictal in training. This isolates minority-class generation quality: if the detector performs well using only synthetic seizures, the generator has learned clinically useful ictal patterns. Pascual et al., 2019 use the same approach - generating synthetic seizure EEG to train detectors for unseen patients.

Experimental Results

LOPO and single-split results for all experiments. See Roadmap for experiment definitions, Models for architecture details, and runtime decisions for issues encountered during execution. Last updated: 8 May 2026.

E1 Baseline - Full LOPO Results (23 folds × 3 seeds)

Completed 4 May 2026. The definitive E1 anchor - all augmented experiments (E2–E5) will be compared against these numbers.

Cross-seed summary (mean of per-seed means ± std across 3 seeds, 69 runs).

| Metric | Seed 42 | Seed 123 | Seed 456 | Mean ± Std |

|---|---|---|---|---|

| AUPRC | 0.3892 | 0.3690 | 0.4242 | 0.3941 ± 0.0228 |

| AUROC | 0.8276 | 0.8406 | 0.8632 | 0.8438 ± 0.0147 |

| F1 | 0.4260 | 0.4141 | 0.4599 | 0.4333 ± 0.0194 |

| Sens. @ 95% Spec. | 0.6307 | 0.6459 | 0.6609 | 0.6459 ± 0.0123 |

| Detector training time (avg/ fold) | ~90 min | ~99 min | ~87 min | ~92 min (~106 h total) |

Per-fold AUPRC breakdown (click to expand)

| Fold | Test Subject | AUPRC (mean ± std) | Note |

|---|---|---|---|

| 00 | chb01+chb21 | 0.658 ± 0.017 | |

| 01 | chb02 | 0.767 ± 0.138 | |

| 02 | chb03 | 0.646 ± 0.074 | |

| 03 | chb04 | 0.219 ± 0.131 | |

| 04 | chb05 | 0.474 ± 0.104 | |

| 05 | chb06 | 0.001 ± 0.000 | Near-zero |

| 06 | chb07 | 0.729 ± 0.175 | |

| 07 | chb08 | 0.218 ± 0.053 | |

| 08 | chb09 | 0.895 ± 0.033 | |

| 09 | chb10 | 0.815 ± 0.020 | |

| 10 | chb11 | 0.925 ± 0.049 | Best fold |

| 11 | chb12 | 0.064 ± 0.033 | |

| 12 | chb13 | 0.057 ± 0.024 | |

| 13 | chb14 | 0.002 ± 0.000 | Near-zero |

| 14 | chb15 | 0.247 ± 0.038 | |

| 15 | chb16 | 0.012 ± 0.006 | Near-zero |

| 16 | chb17 | 0.159 ± 0.050 | |

| 17 | chb18 | 0.216 ± 0.247 | High variance |

| 18 | chb19 | 0.711 ± 0.088 | |

| 19 | chb20 | 0.011 ± 0.007 | Near-zero |

| 20 | chb22 | 0.651 ± 0.179 | |

| 21 | chb23 | 0.347 ± 0.152 | |

| 22 | chb24 | 0.242 ± 0.102 |

LOPO AUPRC (0.394) is substantially higher than single-split (0.177). This is expected: LOPO averages across all patients including easy ones (chb09, chb10, chb11 > 0.8), while single-split tests on a fixed set of 4 patients that happen to be harder.

Massive inter-patient variability (std 0.33 within each seed) - some patients (chb06, chb14, chb16, chb20) are near-undetectable while others (chb09, chb10, chb11) are nearly perfect. This is the core motivation for augmentation: can synthetic data help the hard patients without hurting the easy ones?

Low cross-seed variance (±0.023) means the detector is stable across random initializations - performance differences between experiments will be attributable to the training data, not random chance.

E2 Non-Synthetic Controls - LOPO Results (23 folds)

Seed 42 complete (8 May 2026). Seed 123 in progress (6/23 folds). Seed 456 pending.

Seed 42 results (23 folds). Cross-seed summary will be added when all 3 seeds complete.

| Metric | SMOTE (seed 42) | ADASYN (seed 42) | E1 Baseline (cross-seed) |

|---|---|---|---|

| AUPRC | 0.1468 | 0.1773 | 0.3941 |

| AUROC | 0.6017 | 0.6379 | 0.8438 |

| F1 | 0.2242 | 0.2544 | 0.4333 |

| Sens. @ 95% Spec. | 0.3456 | 0.3806 | 0.6459 |

Per-fold AUPRC breakdown - SMOTE seed 42 (click to expand)

| Fold | Test Subject | AUPRC (SMOTE) | AUPRC (ADASYN) | Note |

|---|---|---|---|---|

| 00 | chb01+chb21 | 0.2390 | 0.5436 | |

| 01 | chb02 | 0.1925 | 0.0455 | |

| 02 | chb03 | 0.0373 | 0.4612 | |

| 03 | chb04 | 0.1863 | 0.1269 | |

| 04 | chb05 | 0.0059 | 0.0108 | Near-zero |

| 05 | chb06 | 0.0006 | 0.0004 | Near-zero |

| 06 | chb07 | 0.3817 | 0.1534 | |

| 07 | chb08 | 0.0847 | 0.1292 | |

| 08 | chb09 | 0.4524 | 0.4880 | |

| 09 | chb10 | 0.1801 | 0.6903 | ADASYN best |

| 10 | chb11 | 0.1231 | 0.3094 | |

| 11 | chb12 | 0.0082 | 0.0261 | Near-zero |

| 12 | chb13 | 0.0282 | 0.0178 | |

| 13 | chb14 | 0.0035 | 0.0036 | Near-zero |

| 14 | chb15 | 0.0075 | 0.0076 | Near-zero |

| 15 | chb16 | 0.0011 | 0.0012 | Near-zero |

| 16 | chb17 | 0.0863 | 0.0670 | |

| 17 | chb18 | 0.0354 | 0.2715 | |

| 18 | chb19 | 0.6150 | 0.4587 | |

| 19 | chb20 | 0.0025 | 0.0025 | Near-zero |

| 20 | chb22 | 0.5831 | 0.0208 | High divergence |

| 21 | chb23 | 0.0641 | 0.0029 | |

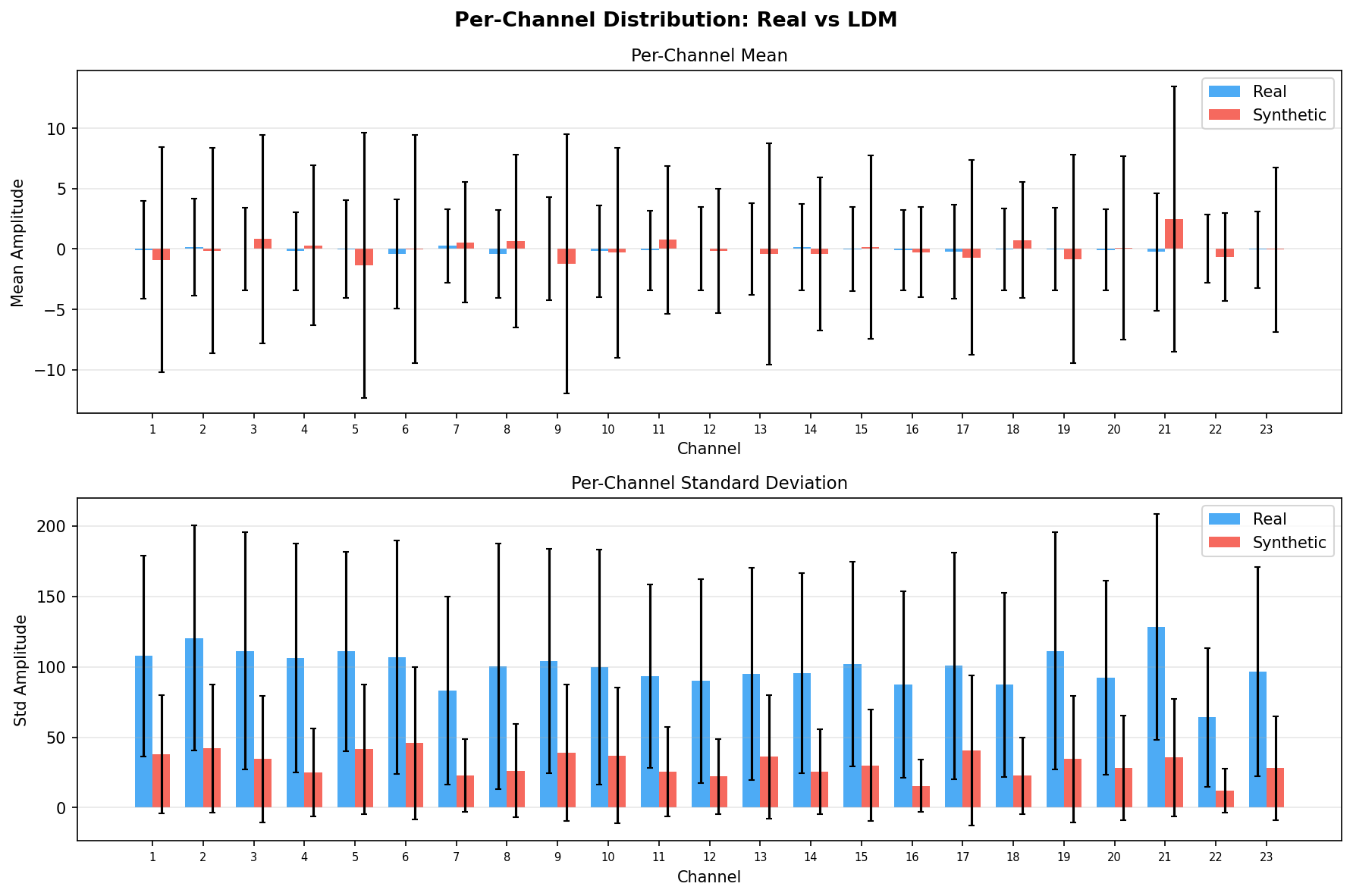

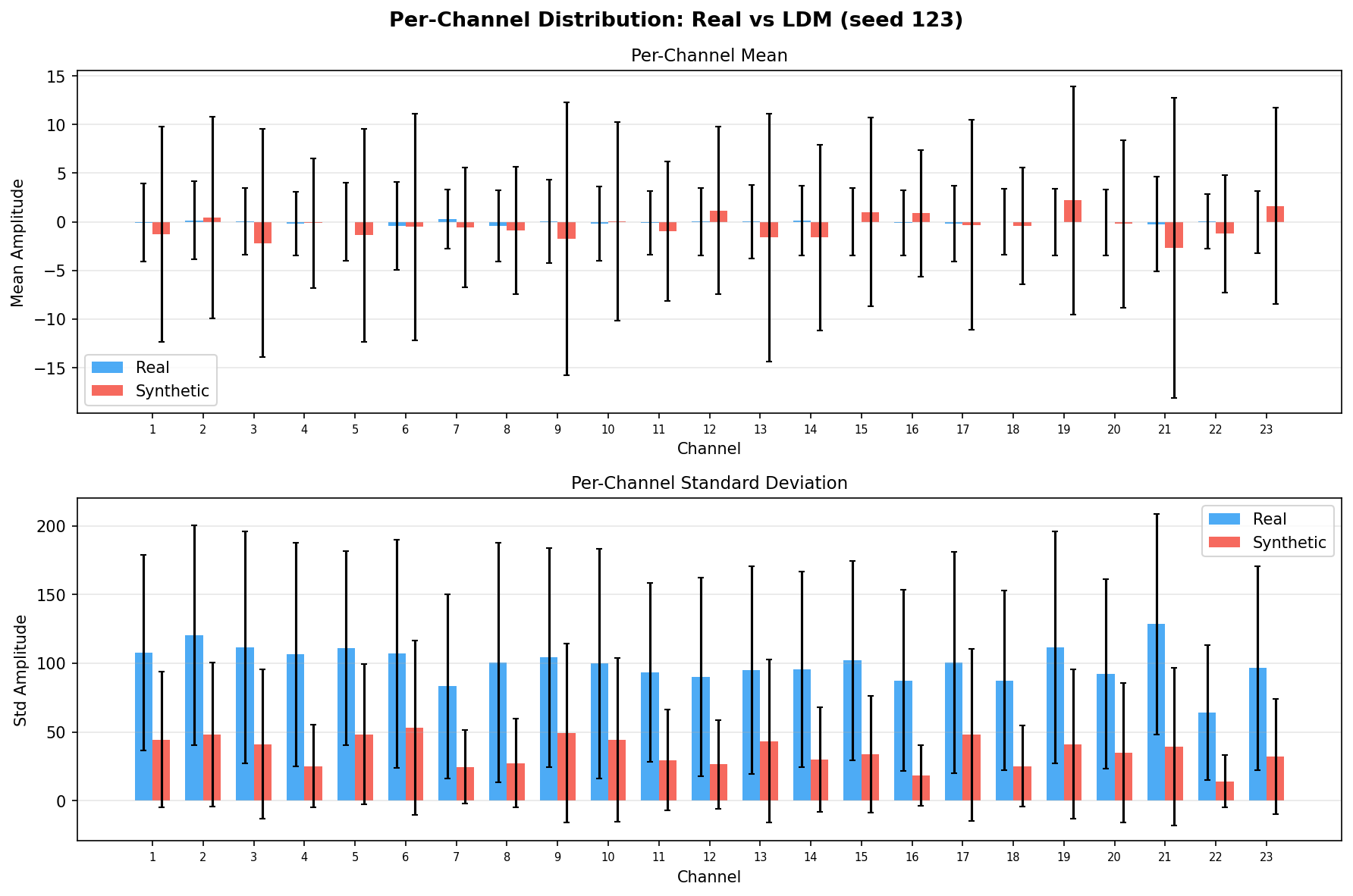

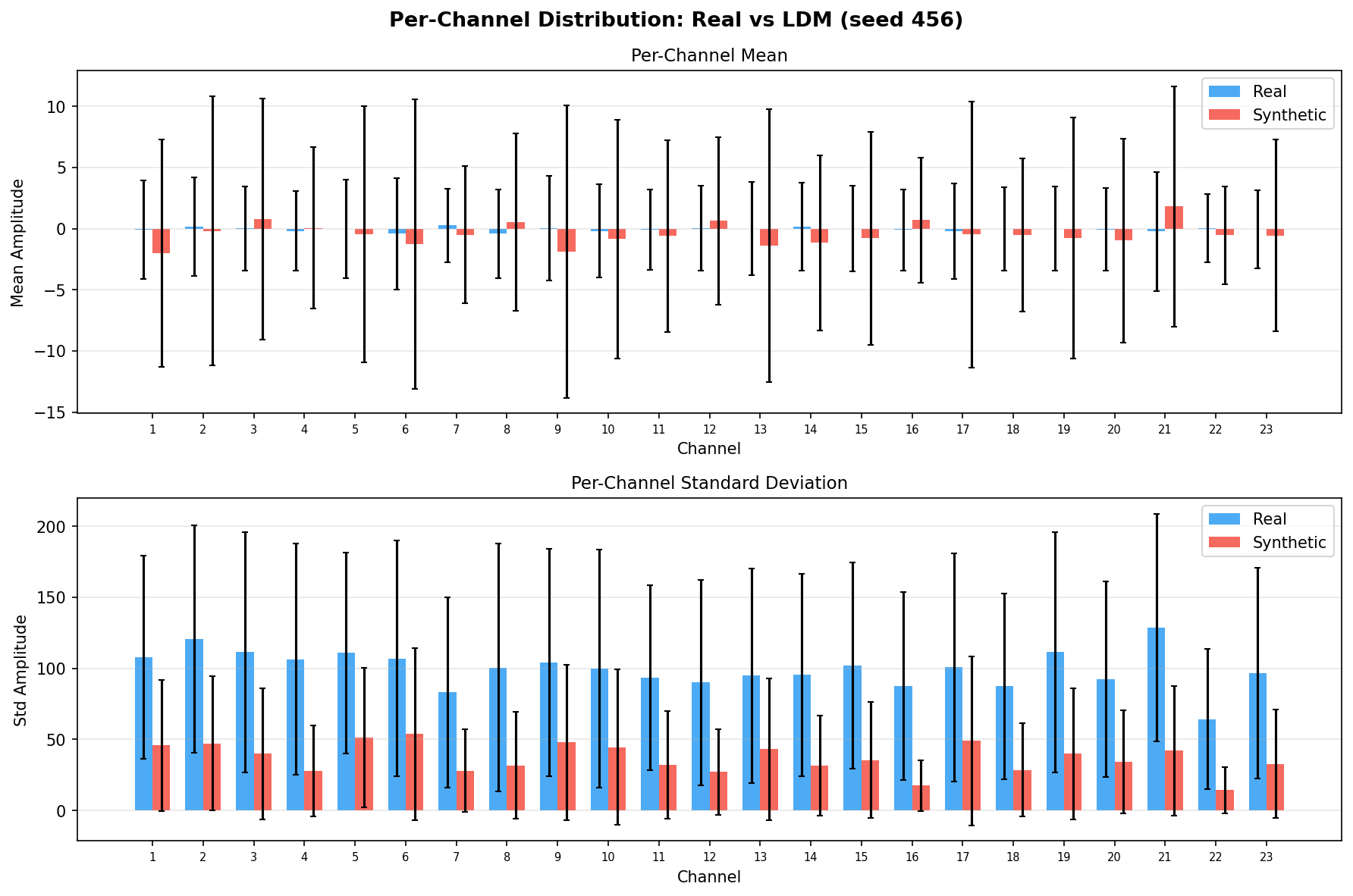

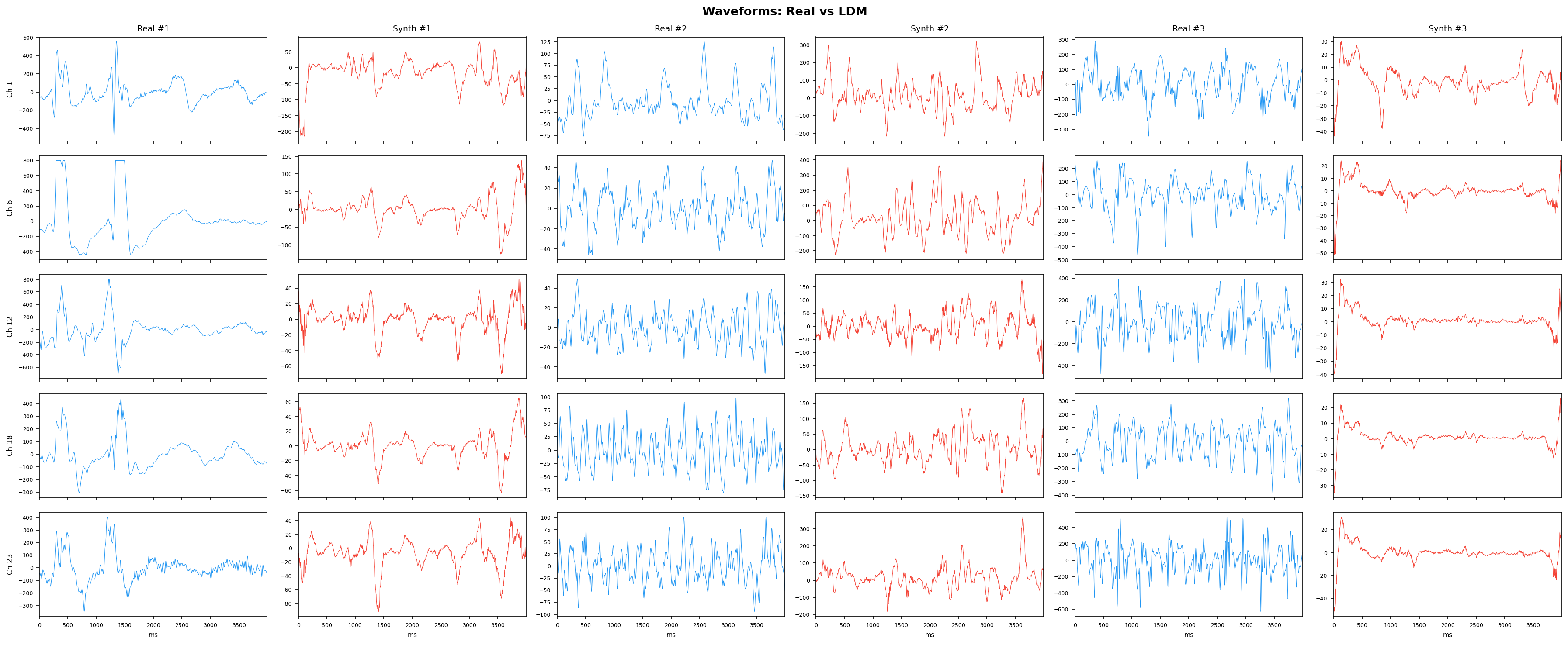

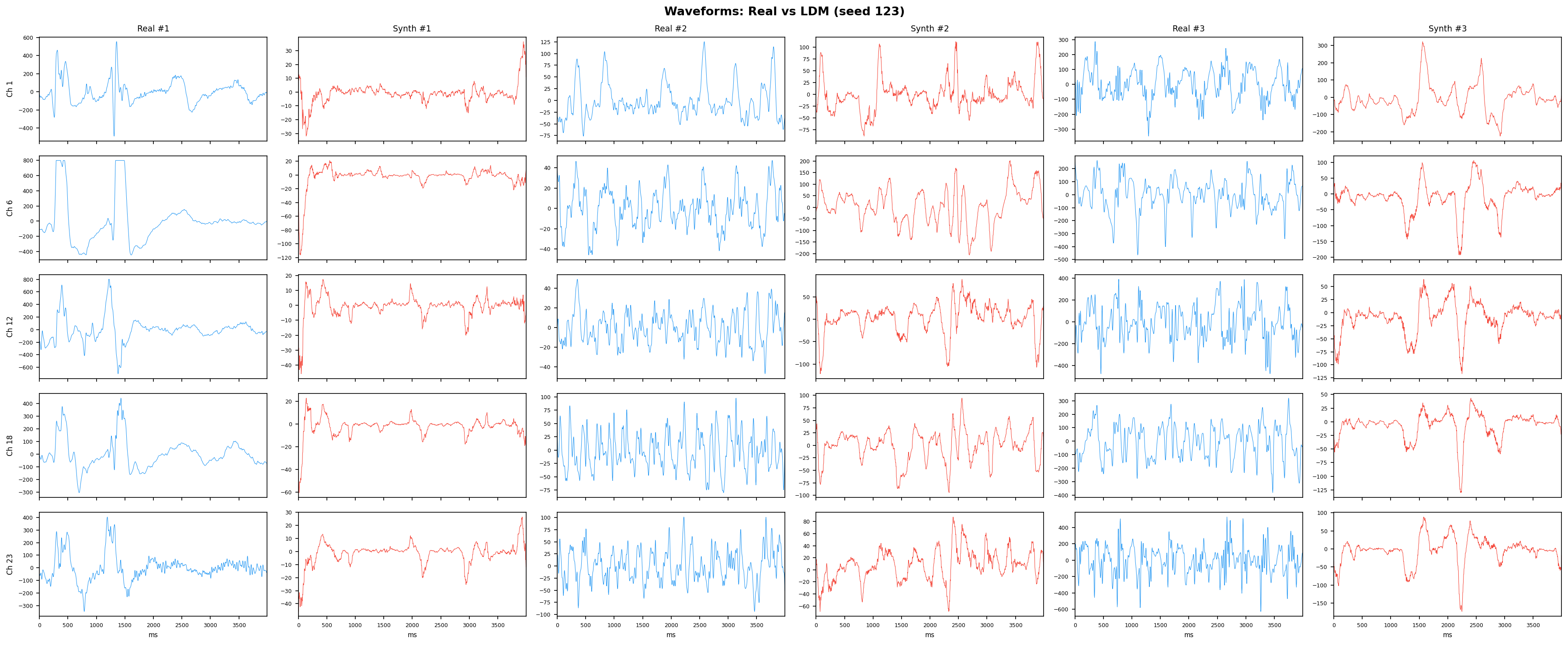

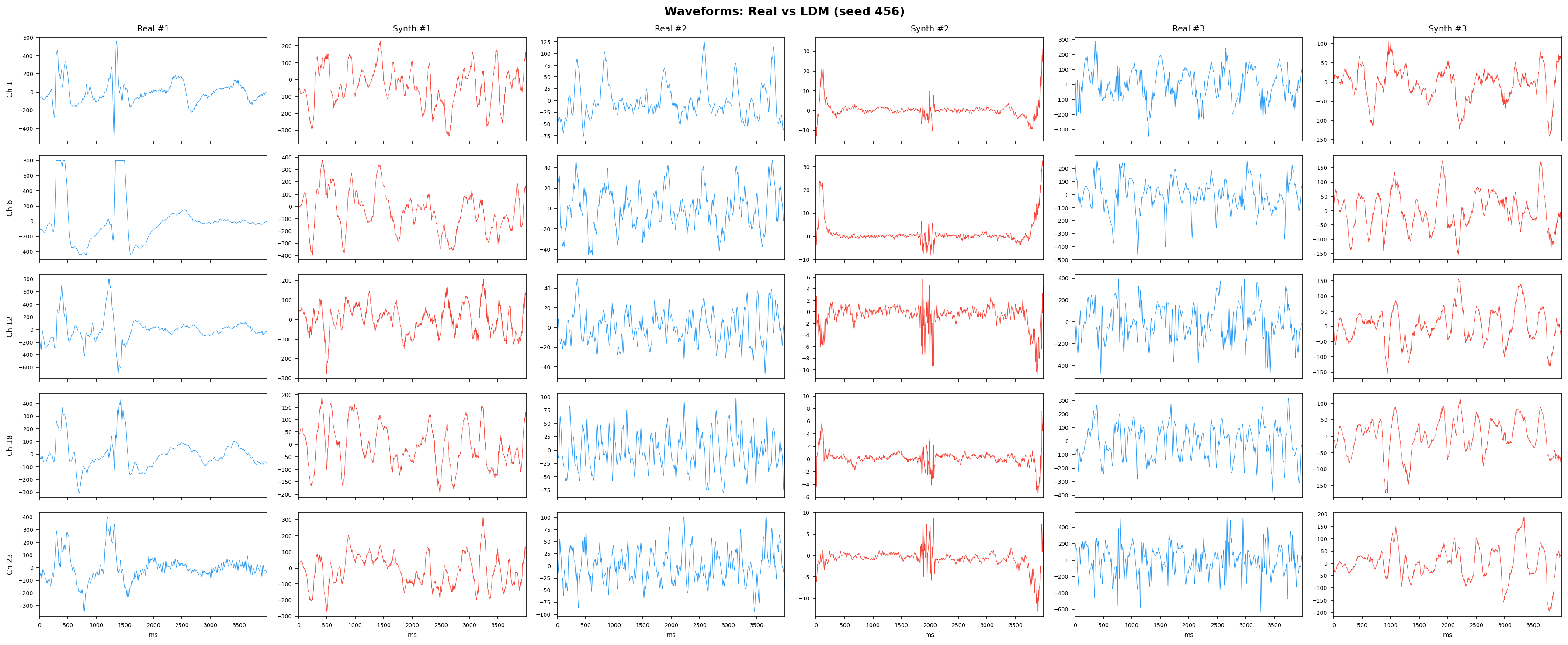

| 22 | chb24 | 0.0591 | 0.2396 |